And a useful thread:

Patakula, Balaji 11:44a

Hello Phil

Me 11:44a

Hiya

Patakula, Balaji 11:45a

the url should be localhost:8870/nlpservice/ner

Patakula, Balaji 11:45a

with no double slash after the port

Me 11:46a

localhost:8870/nlpservice/ner gives the same error in my setup

Patakula, Balaji 11:46a

also the body should be like { "text": "my name is Phil Feldman."}

Patakula, Balaji 11:46a

or any text that u want

Me 11:47a

So it's not the JSON object on the NLPService page?

Patakula, Balaji 11:48a

if u import the nlp.json into the postman

Patakula, Balaji 11:48a

all the requests will be already there

Me 11:49a

Import how?

Patakula, Balaji 11:49a

there is an import menu on postman

Me 11:50a

Looking for it...

Patakula, Balaji 11:50a

the middle panel on the top black menu last item

Me 11:50a

Got it.

Patakula, Balaji 11:51a

just import that json downloaded from the wiki

Patakula, Balaji 11:51a

and u should have the collection now in ostman

Patakula, Balaji 11:51a

postman

Patakula, Balaji 11:51a

and u can just click to send

Me 11:52a

Added the file. Now what.

Patakula, Balaji 11:52a

can u share the screen

Me 11:52a

using what?

Patakula, Balaji 11:52a

u have the nlp service running?

Patakula, Balaji 11:53a

just in IM

Patakula, Balaji 11:53a

there is a present screen on the bottom of this chat

Me 11:53a

nlp service is running. It extracted my entity as well. Now I'm curious about that bigger json file

Patakula, Balaji 11:54a

that json file is just the REST calls that are supported by the service

Patakula, Balaji 11:54a

it is just a way of documenting the REST

Patakula, Balaji 11:54a

so some one can just import the file and execute the commands

Me 11:54a

So how does it get ingested?

Patakula, Balaji 11:55a

which one?

Me 11:55a

nlp.json

Patakula, Balaji 11:55a

its not ingested. NLP is service. It gets the requests through Rabbit queue from the Crawler ( another service)

Patakula, Balaji 11:56a

if u need to test the functionality of NLP, the way u can test and see the results is using the REST interface that we are doing now

Me 11:56a

so nlp.json is a configuration file for postman?

Patakula, Balaji 11:56a

thats right

Me 11:57a

Ah. Not obvious.

Patakula, Balaji 11:57a

Is Aaron sit next to you?

Me 11:58a

No, he stepped out for a moment. He should be back in 30 minutes or so.

Patakula, Balaji 11:58a

may be u can get the data flow from him and he knows how to work with all these tools

Me 11:58a

Yeah, he introduced me to Postman.

Me 11:58a

But he thought nlp.json was something to send to the NLPService.

Patakula, Balaji 11:58a

may be he can give a brain dump of the stuff and how services interact, how data flows etc.,

Me 11:59a

I'm starting to see how it works. Was not expecting to see Erlang.

Me 12:00p

Can RabbitMQ coordinate services under development on my machine with services stood up on a test environment, such as AWS?

Patakula, Balaji 12:01p

u can document all the REST calls that a service exposes by hand writing all those ...or just export the REST calls from postman and every one who wants to use the service can just import that json and work with the REST interface

Me 12:01p

Got it.

Patakula, Balaji 12:01p

RabbitMq is written in Erlang and we interface with it for messaging

Patakula, Balaji 12:02p

yes, u can configure the routes to work that way

Patakula, Balaji 12:02p

meaning mismatch services between different environments

Me 12:02p

Yeah, I see that. Not that surprising that a communications manager would be written in Erlang. But still a rare thing to see.

Me 12:03p

Is there a collection of services stood up that way for development?

Patakula, Balaji 12:04p

u installed rabbit yesterday locally on ur machine

Me 12:04p

Yes, otherwise none of this would be working?

Patakula, Balaji 12:04p

so u can run various services now orchestrated through ur local rabbit

Me 12:05p

Understood. Are there currently stood-up services that can be accessed on an ad-hoc basis, or would I need to do that?

Patakula, Balaji 12:05p

Rabbit is only for streaming messages. Every service exposes both streaming ( Rabbitmq messages) and REST interfaces

Patakula, Balaji 12:06p

there are no services stood up in adhoc env currently. There is a CI ,QA and Demo env

Patakula, Balaji 12:06p

all those envs have all the services running

Me 12:07p

What's Cl?

Me 12:07p

I'd guess continuous integration, but it's ambiguous.

Patakula, Balaji 12:07p

continuous integration. Every code checkin automatically builds the system, runs the tests, creates docker images and deploys those services and starts them

Me 12:08p

Can these CI services be pinged directly?

Patakula, Balaji 12:08p

ye

Patakula, Balaji 12:08p

yes

Me 12:09p

Do you need to be on the VPN?

Patakula, Balaji 12:09p

http://dockerapps.philfeldman.com:8763/

Patakula, Balaji 12:09p

those are the services running

Patakula, Balaji 12:09p

and dockerapps is the host machine for CI

Me 12:09p

And how do I access the NLPService on dockerapps?

Patakula, Balaji 12:10p

access meaning? u want t send the REST requests to CI service?

Me 12:10p

Yeah. Bad form?

Patakula, Balaji 12:11p

just in the REST, change the localhost to dockerapps.philfeldman.com

Me 12:12p

I get a 'Could not get any response'

Me 12:12p

dockerapps.philfeldman.com:8870/nlpservice/ner

Patakula, Balaji 12:12p

sorry, NLP is running on a different host 10.18.7.177
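For reference, the request from the thread can be reproduced outside Postman. This is a minimal sketch using only the Python standard library. The URL path, port 8870, and the `{"text": ...}` body come from the chat. The POST method, the JSON content type, and the assumption that the remote host 10.18.7.177 also listens on port 8870 are guesses, and `build_ner_request` and `send` are hypothetical helper names. The response format is not shown in the thread, so the reply is returned as raw text.

```python
import json
import urllib.request


def build_ner_request(text, host="localhost", port=8870):
    """Build (but do not send) a request for the NER endpoint from the chat.

    Assumption: the service accepts POST with a JSON body; the thread only
    shows the URL and the body shape.
    """
    # Single slash after the port, as pointed out in the thread.
    url = f"http://{host}:{port}/nlpservice/ner"
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=10):
    """Send the request and return the raw response body as a string."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")


if __name__ == "__main__":
    # Local service, as in the first half of the thread.
    local = build_ner_request("my name is Phil Feldman.")
    print(local.full_url)
    # To try the other host mentioned at the end (port is an assumption):
    # remote = build_ner_request("my name is Phil Feldman.", host="10.18.7.177")
    # print(send(remote))
```

Keeping the request builder separate from `send` makes it easy to check the URL and body before anything goes over the network.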

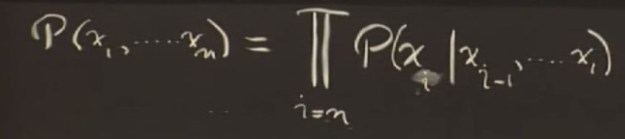

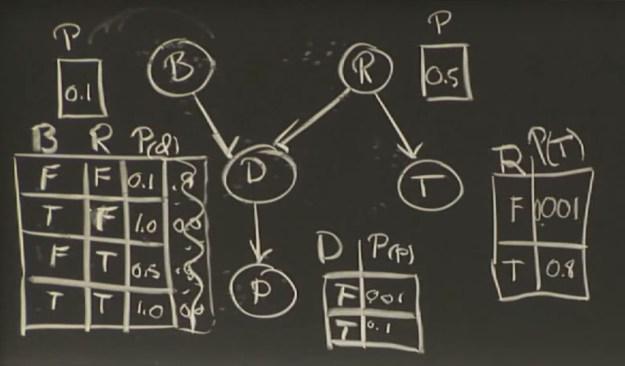

If this were a joint probability table there would be 2^5 (32) entries, as opposed to the number here, which is 10.
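The arithmetic behind that comparison can be sketched as follows, assuming five binary variables. The network structure in the image is not reproduced in the text, so the parent counts below are a hypothetical example that happens to sum to 10, not a reading of the figure. Each binary node needs one probability per combination of its parents' values, that is 2^k entries for k parents.

```python
def joint_table_size(num_binary_variables):
    """Number of rows in a full joint table over binary variables."""
    return 2 ** num_binary_variables


def bayes_net_parameter_count(parent_counts):
    """Number of conditional probabilities for binary nodes.

    parent_counts[i] is the number of parents of node i; a binary node with
    k binary parents needs 2**k entries (one per parent configuration).
    """
    return sum(2 ** k for k in parent_counts)


if __name__ == "__main__":
    # Hypothetical structure: two root nodes, one node with two parents,
    # and two nodes with one parent each.
    parents = [0, 0, 2, 1, 1]
    print(joint_table_size(len(parents)))
    print(bayes_net_parameter_count(parents))
```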

I could see that the tempHi and tempLo items are being mapped as Objects. Typing

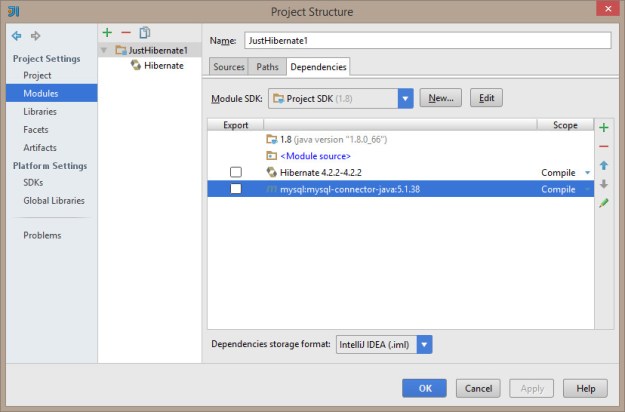

And here’s the library structure for the mysql driver. Note that it’s actually pointing at my m2 repo…
