Cool bike accident map: rjfische.carto.com/viz/6ba7f7e8-3905-4d28-9596-2cc2d4d40b8b/embed_map

New NN?

Keeping all the cats aligned for the reading and defense

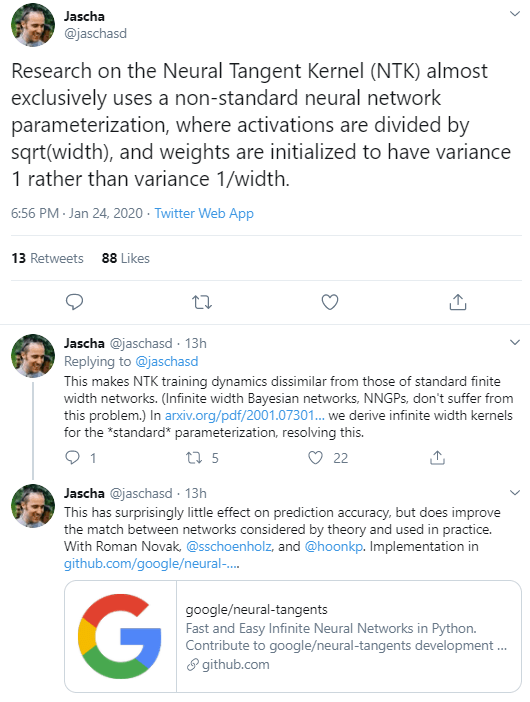

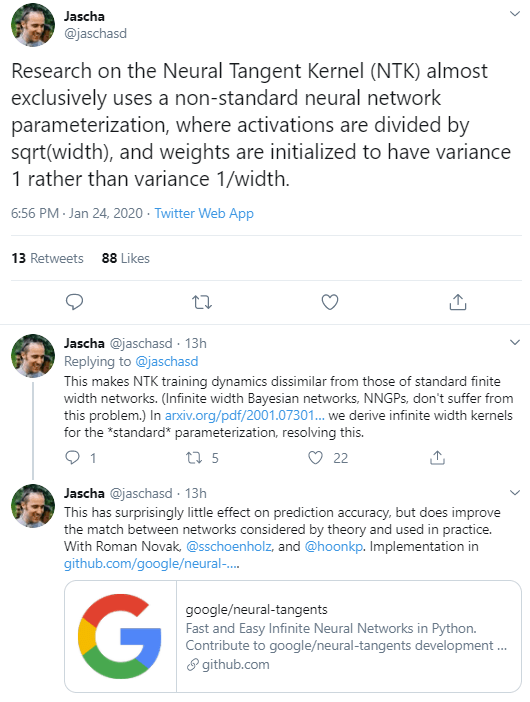

Noam Chomsky: Language, Cognition, and Deep Learning | Artificial Intelligence (AI) Podcast

Stuart Russell, in Human Compatible, makes the case that we need to build AI that uses some form of inverse reinforcement learning to figure out what we as humans want. I’m not opposed to that, but I think I also want a system that exercises AI and ML systems, looking for runaway conditions. If an AI system can be falsified in this way, it is, by definition, dangerous.

Tikkun olam (Hebrew for “world repair”) has come to connote social action and the pursuit of social justice. The phrase has origins in classical rabbinic literature and in Lurianic kabbalah, a major strand of Jewish mysticism originating with the work of the 16th-century kabbalist Isaac Luria.

Spent the last four days riding a fixie around the Eastern Shore of Maryland

This is pretty cool – A linear algebra textbook using TF 2.0

This looks really good: An Interactive Introduction to Fourier Transforms

7:00 – ASRC BD

9:00 – 1:30 ASRC MKT

7:00 – 3:30 ASRC MKT

(pp 220)

4:00 – 5:00 Meeting with Aaron M. to discuss Academic RB wishlist.

7:00 – 4:00 ASRC MKT

7:00 – 5:00 VTX

Develop a ‘training’ corpus of known bad actors (KBA) for each domain.

CREATE VIEW view_ratings AS
SELECT io.link, qo.search_type, po.first_name, po.last_name, po.pp_state, ro.person_characterization
FROM item_object io
INNER JOIN query_object qo ON io.query_id = qo.id
INNER JOIN rating_object ro ON io.id = ro.result_id
INNER JOIN poi_object po ON qo.provider_id = po.id;
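To see what the view_ratings definition above returns, here is a minimal sketch that builds it in an in-memory SQLite database and queries it. The table schemas are hypothetical (only the columns the view references are included), the sample rows are made up for illustration, and SQLite stands in for whatever database the project actually used.

```python
import sqlite3

# Hypothetical minimal schemas: only the columns named in the view are defined.
SCHEMA = """
CREATE TABLE poi_object (id INTEGER PRIMARY KEY, first_name TEXT, last_name TEXT, pp_state TEXT);
CREATE TABLE query_object (id INTEGER PRIMARY KEY, provider_id INTEGER, search_type TEXT);
CREATE TABLE item_object (id INTEGER PRIMARY KEY, query_id INTEGER, link TEXT);
CREATE TABLE rating_object (id INTEGER PRIMARY KEY, result_id INTEGER, person_characterization TEXT);

CREATE VIEW view_ratings AS
SELECT io.link, qo.search_type, po.first_name, po.last_name, po.pp_state, ro.person_characterization
FROM item_object io
INNER JOIN query_object qo ON io.query_id = qo.id
INNER JOIN rating_object ro ON io.id = ro.result_id
INNER JOIN poi_object po ON qo.provider_id = po.id;
"""


def build_demo_db():
    """Create an in-memory database with the view and a few made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.execute("INSERT INTO poi_object VALUES (1, 'Jane', 'Doe', 'MD')")
    conn.execute("INSERT INTO query_object VALUES (10, 1, 'malpractice')")
    # Two search results for the same query; only the first one gets a rating.
    conn.execute("INSERT INTO item_object VALUES (100, 10, 'http://example.com/a')")
    conn.execute("INSERT INTO item_object VALUES (101, 10, 'http://example.com/b')")
    conn.execute("INSERT INTO rating_object VALUES (1000, 100, 'match')")
    return conn


def fetch_ratings(conn):
    """Return every row of view_ratings, ordered by link."""
    return conn.execute("SELECT * FROM view_ratings ORDER BY link").fetchall()


if __name__ == "__main__":
    for row in fetch_ratings(build_demo_db()):
        print(row)
```

Because every join is an INNER JOIN, the unrated item (id 101) does not appear in the view; a LEFT JOIN on rating_object would be needed to list unrated results as well.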

7:00 – 3:30 VTX

source: {
  gdocId: '0Ai6LdDWgaqgNdG1WX29BanYzRHU4VHpDUTNPX3JLaUE',
  tables: "Presidents"
}

That gave me a hint of what to look for in the document source of the demo, where I found this:

var urlBase = 'https://ca480fa8cd553f048c65766cc0d0f07f93f6fe2f.googledrive.com/host/0By6LdDWgaqgNfmpDajZMdHMtU3FWTEkzZW9LTndWdFg0Qk9MNzd0ZW9mcjA4aUJlV0p1Zk0/CHI2016/';

And that’s the link from above.

7:00 – 4:00 VTX

7:00 – 6:00 Leave
