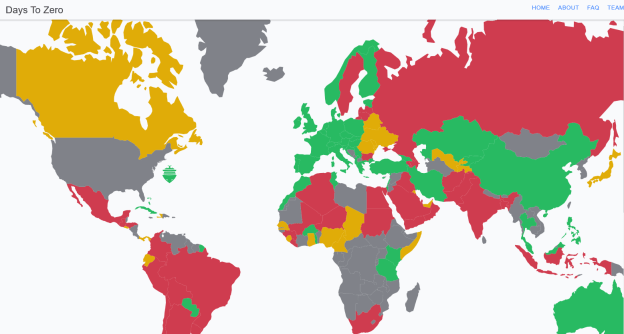

Race/police riots. We’re not even halfway through the year

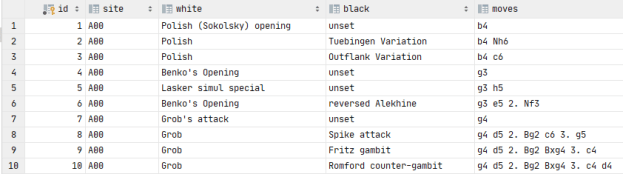

GPT-2 Agents

- Wrote my first PGN code! It moves the rooks out, across to the other side of the board, and then back:

1. h4 h5 2. Rh3 Rh6 3. Ra3 Ra6 4. Rh3 Rh6 5. 1/2-1/2

- And it’s working! I did have to adjust the subtraction order to get pieces to move in the right direction. It even generates game text:

Game.parse_moves(): Move1 = ' h4 h5 '
Evaluating move [h4 h5]
piece string = '' (blank is pawn)
piece string = '' (blank is pawn)
Game.parse_moves(): Move2 = ' Rh3 Rh6 '
Evaluating move [Rh3 Rh6]
piece string = 'R' (blank is pawn)
piece string = 'R' (blank is pawn)
Game.parse_moves(): Move3 = ' Ra3 Ra6 '
Evaluating move [Ra3 Ra6]
piece string = 'R' (blank is pawn)
Chessboard.check_if_clear() white rook is blocked by white pawn
piece string = 'R' (blank is pawn)
Chessboard.check_if_clear() black rook is blocked by black pawn
Game.parse_moves(): Move4 = ' Rh3 Rh6 '
Evaluating move [Rh3 Rh6]
piece string = 'R' (blank is pawn)
piece string = 'R' (blank is pawn)
Game.parse_moves(): Move5 = ' 1/2-1/2'
Evaluating move [1/2-1/2]

The game begins as white uses the Polish (Sokolsky) opening opening. Aye Bee moves white pawn from h2 to h4. Black moves pawn from h7 to h5. White moves rook from h1 to h3. Cee Dee moves black rook from h8 to h6. White moves rook from h3 to a3. Black moves rook from h6 to a6. Aye Bee moves white rook from a3 to h3. Black moves rook from a6 to h6. In move 5, Aye Bee declares a draw. Cee Dee declares a draw
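For anyone wondering what "subtraction order" means here, a minimal sketch follows. It is not the code that produced the log above; `square_to_xy`, `step_direction`, and `squares_between` are hypothetical names, and it only handles moves along a rank, file, or diagonal. The idea is that the step has to be computed as destination minus source, since swapping the operands walks the piece away from its target. The same walk yields the squares a `check_if_clear()`-style test would need to inspect.

```python
from typing import List, Tuple


def square_to_xy(square: str) -> Tuple[int, int]:
    """Convert algebraic notation like 'h3' to zero-based (file, rank) = (7, 2)."""
    return ord(square[0]) - ord("a"), int(square[1]) - 1


def xy_to_square(x: int, y: int) -> str:
    """Convert zero-based (file, rank) back to algebraic notation."""
    return chr(ord("a") + x) + str(y + 1)


def sign(value: int) -> int:
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)


def step_direction(src: str, dst: str) -> Tuple[int, int]:
    """Unit step from src toward dst (hypothetical helper).

    Note the order: destination minus source. Reversing it flips the direction.
    """
    src_x, src_y = square_to_xy(src)
    dst_x, dst_y = square_to_xy(dst)
    delta_x, delta_y = dst_x - src_x, dst_y - src_y
    # Assumption: only straight-line moves are supported (no knight moves).
    if delta_x != 0 and delta_y != 0 and abs(delta_x) != abs(delta_y):
        raise ValueError(f"{src} to {dst} is not a straight line")
    return sign(delta_x), sign(delta_y)


def squares_between(src: str, dst: str) -> List[str]:
    """Squares strictly between src and dst; these must be empty for a clear path."""
    step_x, step_y = step_direction(src, dst)
    x, y = square_to_xy(src)
    end = square_to_xy(dst)
    path = []
    x, y = x + step_x, y + step_y
    while (x, y) != end:
        path.append(xy_to_square(x, y))
        x, y = x + step_x, y + step_y
    return path
```

As a usage example, `squares_between("a1", "a3")` returns `["a2"]`, the square a pawn occupies at the start of the game. One plausible reading of the "blocked by white pawn" line in the log is that the a1 rook was tried for `Ra3` and rejected for this reason, though that is a guess.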

- Ok, it’s not quite working. I can’t take pieces any more. Fixed. Here’s the longer sequence where the white rook takes the black rook and retreats back to its start, moving past the other white rook:

1. h4 h5 2. Rh3 Rh6 3. Ra3 Ra6 4. Rxa6 c6 5. Ra3 d6 6. Rh3 e6 7. Rh1 f6 8. 1/2-1/2

- And here’s the expanded game:

The game begins as white uses the Polish (Sokolsky) opening opening. White moves pawn from h2 to h4. Black moves pawn from h7 to h5. Aye Bee moves white rook from h1 to h3. Black moves rook from h8 to h6. In move 3, White moves rook from h3 to a3. Black moves rook from h6 to a6. White moves rook from a3 to a6. White takes black rook. Black moves pawn from c7 to c6. In move 5, White moves rook from a6 to a3. Black moves pawn from d7 to d6. White moves rook from a3 to h3. Black moves pawn from e7 to e6. In move 7, White moves rook from h3 to h1. Black moves pawn from f7 to f6. In move 8, Aye Bee declares a draw. Cee Dee declares a draw
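The first stage of this pipeline, going from movetext to individual moves with a piece letter, can be sketched as below. This is an illustration under assumptions, not the actual `Game.parse_moves()`: the names `split_movetext` and `parse_simple_move` are made up, and the pattern only covers the simple moves used in this post (no castling, checks, promotions, or disambiguation). As in the log, a blank piece letter means a pawn.

```python
import re
from typing import List, Tuple

# Piece letters used in PGN movetext; a blank letter means a pawn.
PIECE_NAMES = {"": "pawn", "R": "rook", "N": "knight",
               "B": "bishop", "Q": "queen", "K": "king"}

# Hypothetical pattern: optional piece letter, optional capture mark, destination.
SIMPLE_MOVE = re.compile(r"^([RNBQK]?)(x?)([a-h][1-8])$")


def split_movetext(movetext: str) -> List[List[str]]:
    """Split '1. h4 h5 2. Rh3 Rh6 ...' into [['h4', 'h5'], ['Rh3', 'Rh6'], ...].

    Splitting on digits followed by a period leaves a result token such as
    '1/2-1/2' intact, because it contains no period.
    """
    chunks = re.split(r"\d+\.", movetext)
    return [chunk.split() for chunk in chunks if chunk.strip()]


def parse_simple_move(token: str) -> Tuple[str, bool, str]:
    """Return (piece name, is_capture, destination square) for a simple move."""
    match = SIMPLE_MOVE.match(token)
    if match is None:
        raise ValueError(f"unsupported move token: {token!r}")
    piece_letter, capture_mark, destination = match.groups()
    return PIECE_NAMES[piece_letter], capture_mark == "x", destination


if __name__ == "__main__":
    game = "1. h4 h5 2. Rh3 Rh6 3. Ra3 Ra6 4. Rxa6 c6 5. Ra3 d6 6. Rh3 e6 7. Rh1 f6 8. 1/2-1/2"
    for number, tokens in enumerate(split_movetext(game), start=1):
        print(number, tokens)
```

Finding the source square for each move (the "from h3" part of the generated text) needs board state, which this sketch leaves out on purpose.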

- It’s a little too Friday to try a full run and find new bugs though. Going to work on the paper instead

D20 – Ping Zach. Made contact. Asked for a date to have the maps up or cut bait.

GOES

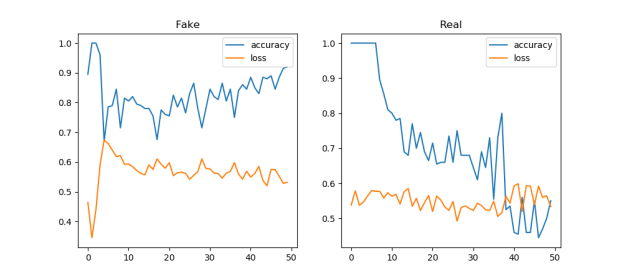

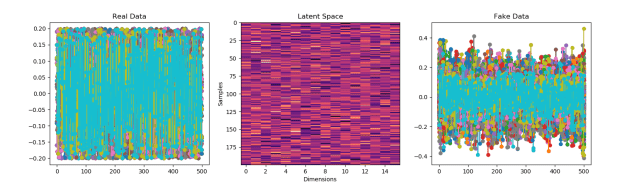

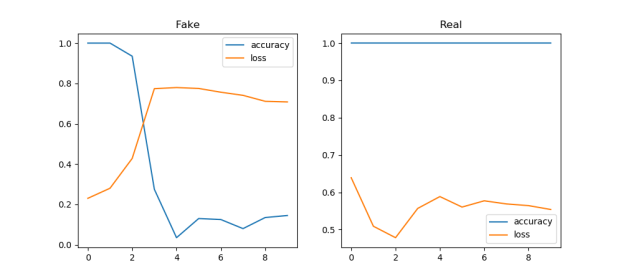

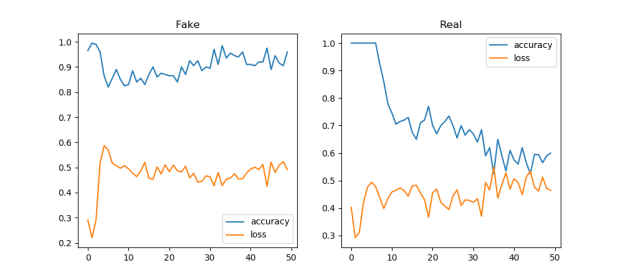

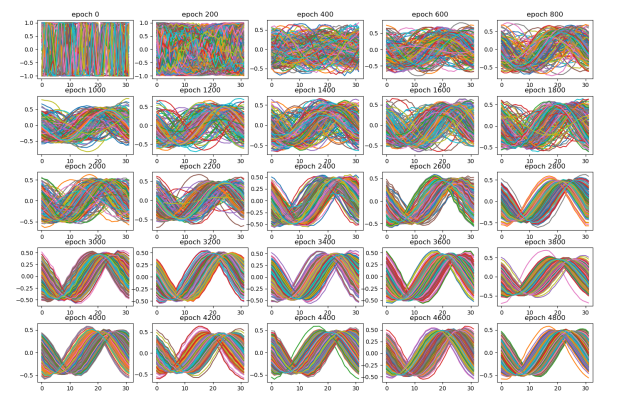

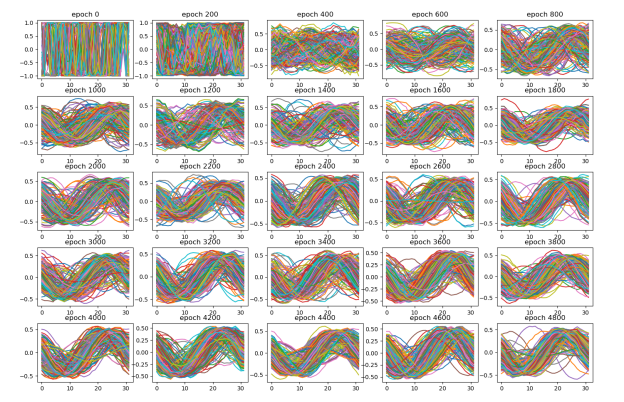

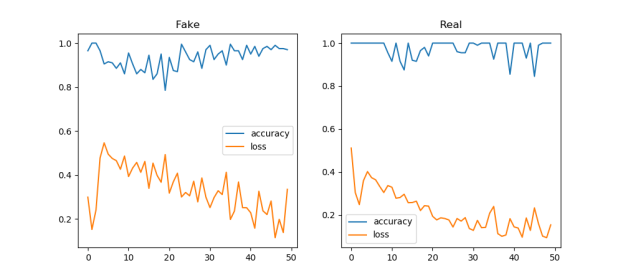

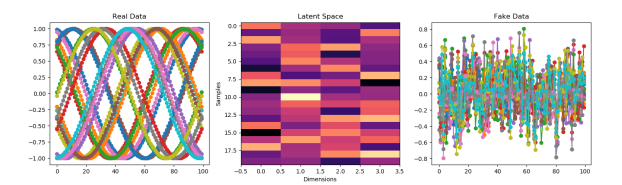

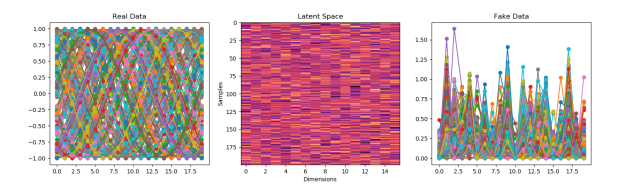

- Add accuracy/loss diagram and paragraph – done

- Finish first pass

- Nope. All-hands plus 90 minutes of required cybersecurity training. To be fair, the videos were nicely done, with a light touch and good acting.
