I wound up using Python because of machine learning, in particular TensorFlow and Keras. And Python is OK. I miss static typing, but all in all it seems pretty solid, with some outstanding science libraries. Mostly, I write tools.

I’ve been using IntelliJ Ultimate for my Python work. I came at it from Java and the steaming wreckage that is Eclipse. I like Ultimate a lot. It updates frequently but not too much. Most of the time it’s seamless. And I can switch between C++, Java, Python, and TypeScript/JavaScript.

For a new tool project, I need to have Python analytics served to a thin client running D3, which meant that it was time to set up a client-server architecture.

These things are always a pain to set up. A “Hello world” app that sends JSON from the server to the client, where it is rendered at interactive rates, with user actions fed back to the server, is still a surprisingly clunky thing to build. So I was expecting some grumbling. I was not expecting everything to be broken. Well, nearly everything.
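To give a sense of what that round trip involves on the server side, here is a minimal Flask sketch. It is not the code from this project: the `/data` and `/action` routes, the `state` dictionary, and the `selected` field are all hypothetical names made up for illustration, and the D3 client is left out entirely.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)

# Hypothetical in-memory state standing in for real analytics output.
state = {"values": [1, 2, 3], "selected": None}


@app.route("/data")
def get_data():
    """Send the current state to the client as JSON."""
    return jsonify(state)


@app.route("/action", methods=["POST"])
def post_action():
    """Receive a user action from the client and update the state."""
    # silent=True returns None instead of raising on a missing/invalid body.
    payload = request.get_json(silent=True) or {}
    state["selected"] = payload.get("selected")
    return jsonify(state)


if __name__ == "__main__":
    # Flask's built-in development server; not meant for production use.
    app.run(debug=True)
```

A client would fetch `/data` to draw, then POST something like `{"selected": 2}` to `/action` when the user clicks on an element.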

It is possible to create a Flask project in IntelliJ Ultimate. But you can’t actually launch the server. The goal is to have code like this:

Set up and run a webserver with console output like this:

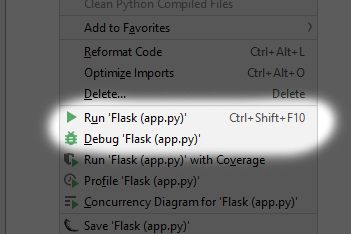

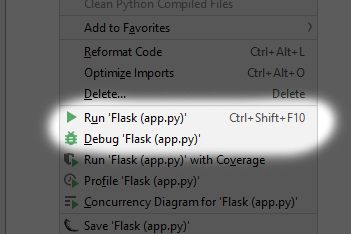
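Since the screenshots aren't readable as text, here is a sketch of the kind of `app.py` being discussed. It is the standard Flask hello-world shape, not necessarily the exact file from this project; the route and function name are assumptions.

```python
from flask import Flask

# The Flask application object; IDE run configurations look for this.
app = Flask(__name__)


@app.route("/")
def hello_world():
    """Respond to requests for the site root with a plain string."""
    return "Hello World!"


if __name__ == "__main__":
    # Start Flask's built-in development server.
    app.run()
```

Run directly, Flask's development server listens on http://127.0.0.1:5000 by default and logs each request to the console.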

It turns out that there is an unresolved bug in IntelliJ that prevents the webserver from launching. In IntelliJ, the way you know that everything is working is that the Python file, app.py in this case, is shown with parentheses around its name: (app.py). You can also see this in the launch menu.

If you see those parens, then IntelliJ knows that the file contains a Flask webserver, and will run things accordingly.

So how did I get this to work? I bought the Professional Edition of PyCharm (which has Flask support), and used the defaults to create the project. It appears that you have to use the Jinja2 template language. The other option, Mako, fails. I have not tried the None option. It’s been that sort of day.

By the way, if you already have a subscription to Ultimate, you can extend it to include the whole suite for the low low price of about $40/year.

