7:00 – 4:00 ASRC IRAD

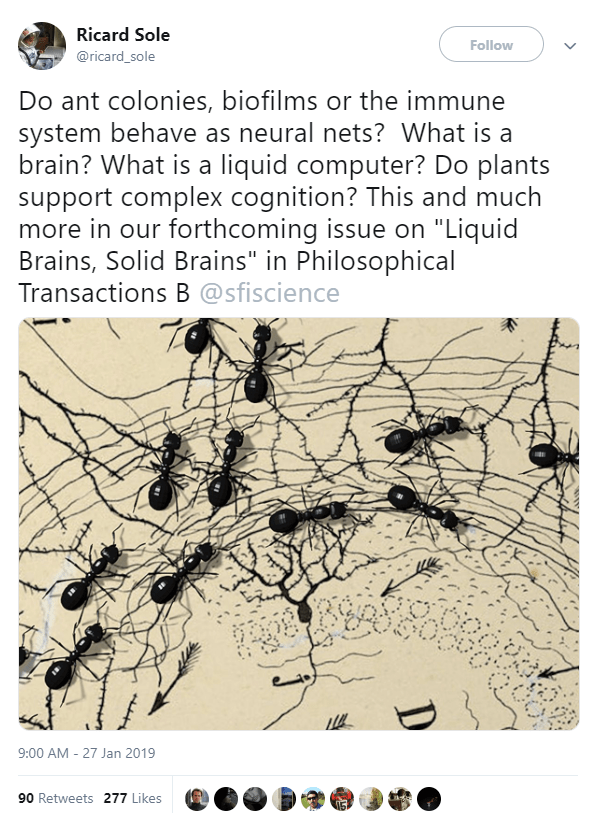

- Good example of combining evolution and NNs (maybe by Johan Stymne, né Eliasson):

Teaching a neural network to drive a car. It’s a simple network with a fixed number of hidden nodes (no NEAT), and no bias. Yet it manages to drive the cars fast and safe after just a few generations. Population is 650. The network evolves through random mutation (no cross-breeding). Fitness evaluation is currently done manually as explained in the video.
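The setup in the quote (fixed topology, no bias, mutation only, population 650) comes down to a short evolutionary loop. Below is a minimal Python sketch under stated assumptions. Only the population size and the no-bias, no-crossover choices come from the description. The 2-4-1 network shape, the toy steering target standing in for the manual fitness evaluation, the Gaussian mutation size, and the keep-the-top-10% selection scheme are my guesses, not details from the video.

```python
import math
import random

# Assumed sizes: 2 sensor inputs, 4 hidden nodes, 1 steering output; no bias terms.
N_IN, N_HIDDEN, N_OUT = 2, 4, 1
POP_SIZE = 650          # population size quoted in the video description
ELITE_FRACTION = 0.1    # assumption: keep the top 10% unchanged each generation
MUTATION_STD = 0.1      # assumption: std-dev of the Gaussian weight noise


def random_genome(rng):
    """A genome is a flat list of weights for a fixed-topology, bias-free network."""
    n_weights = N_IN * N_HIDDEN + N_HIDDEN * N_OUT
    return [rng.uniform(-1.0, 1.0) for _ in range(n_weights)]


def forward(genome, inputs):
    """Run the fixed 2-4-1 tanh network (no biases) on one input vector."""
    split = N_IN * N_HIDDEN
    w_ih, w_ho = genome[:split], genome[split:]
    hidden = [
        math.tanh(sum(inputs[i] * w_ih[h * N_IN + i] for i in range(N_IN)))
        for h in range(N_HIDDEN)
    ]
    return [
        math.tanh(sum(hidden[h] * w_ho[o * N_HIDDEN + h] for h in range(N_HIDDEN)))
        for o in range(N_OUT)
    ]


# Hypothetical stand-in for the manual fitness evaluation: sampled (left, right)
# free-space readings, where the desired steering is toward the roomier side.
SAMPLES = [(l / 4, r / 4) for l in range(5) for r in range(5)]


def fitness(genome):
    """Higher is better: negative mean squared steering error over SAMPLES."""
    err = 0.0
    for left, right in SAMPLES:
        target = right - left  # in [-1, 1]
        err += (forward(genome, (left, right))[0] - target) ** 2
    return -err / len(SAMPLES)


def mutate(genome, rng):
    """Mutation-only reproduction: add Gaussian noise to every weight (no crossover)."""
    return [w + rng.gauss(0.0, MUTATION_STD) for w in genome]


def evolve(generations, seed=0):
    """Return the best genome found and the best fitness seen in each generation."""
    rng = random.Random(seed)
    population = [random_genome(rng) for _ in range(POP_SIZE)]
    best_history = []
    for _ in range(generations):
        scored = sorted(population, key=fitness, reverse=True)
        best_history.append(fitness(scored[0]))
        elites = scored[: int(POP_SIZE * ELITE_FRACTION)]
        # Refill the population with mutated copies of randomly chosen elites.
        children = [mutate(rng.choice(elites), rng) for _ in range(POP_SIZE - len(elites))]
        population = elites + children
    return max(population, key=fitness), best_history
```

Calling `best, history = evolve(generations=20)` returns the best genome and the per-generation best fitness. Because elites are copied over unchanged, the best fitness never drops between generations; swapping `fitness` for a human rating, as in the video, would leave the rest of the loop untouched.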

- This interactive balance between evolution and learning is exactly the sort of interaction that I think should be at the core of the research browser. The only thing I'd add is support for groups interacting with the information collaboratively, so that multiple analysts can train the system.

- A quick note on the power of belief spaces, from a book review about, of all things, Hell. One of the things that gives a belief space its dimension is that people show up.

- Soon, he’d left their church and started one of his own, where he proclaimed his lenient gospel, pouring out pity and anger for those Christians whose so-called God was a petty torturer, until his little congregation petered out. Assured salvation couldn’t keep people in pews, it turned out. The whole episode, in its intensity and its focus on the stakes of textual interpretation, was reminiscent of Lucas Hnath’s recent play “The Christians,” about a pastor who comes out against Hell and sparks not relief but an exegetical nightmare.

- Web Privacy Measurement in Real-Time Bidding Systems. A Graph-Based Approach to RTB System Classification.

- In the doctoral thesis, Robbert J. van Eijk investigates the advertisements online that seem to follow you. The technology enabling the advertisements is called Real-Time Bidding (RTB). An RTB system is defined as a network of partners enabling big data applications within the organizational field of marketing. The system aims to improve sales by real-time data-driven marketing and personalized (behavioral) advertising. The author applies network science algorithms to arrive at measuring the privacy component of RTB. In the thesis, it is shown that cluster-edge betweenness and node betweenness support us in understanding the partnerships of the ad-technology companies. From our research it transpires that the interconnection between partners in an RTB network is caused by the data flows of the companies themselves due to their specializations in ad technology. Furthermore, the author provides that a Graph-Based Methodological Approach (GBMA) controls the situation of differences in consent implementations in European countries. The GBMA is tested on a dataset of national and regional European news websites.
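Node betweenness counts how many shortest paths between other pairs of nodes pass through a node; edge betweenness does the same for an edge, and is the quantity Girvan-Newman clustering uses to split a graph into clusters, which is likely what "cluster-edge betweenness" builds on. The thesis's GBMA is not reproduced here. As a sketch only, this is Brandes' algorithm computing both scores on an unweighted, undirected graph, run on a made-up partner graph (the publisher/SSP/exchange/DSP node names are hypothetical, not from the thesis's dataset).

```python
from collections import deque


def betweenness(adjacency):
    """Brandes' algorithm for an unweighted, undirected graph.

    adjacency maps each node to a list of its neighbours (both directions listed).
    Returns (node_scores, edge_scores); edge keys are sorted (a, b) tuples, and
    every score counts unordered pairs of endpoints.
    """
    node_bc = dict.fromkeys(adjacency, 0.0)
    edge_bc = {tuple(sorted((u, w))): 0.0 for u in adjacency for w in adjacency[u]}

    for source in adjacency:
        # 1. Breadth-first search: count shortest paths (sigma) and record predecessors.
        order, preds = [], {v: [] for v in adjacency}
        sigma = dict.fromkeys(adjacency, 0)
        sigma[source] = 1
        dist = dict.fromkeys(adjacency, -1)
        dist[source] = 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adjacency[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # 2. Walk back from the farthest nodes, spreading each node's dependency
        #    over its predecessors in proportion to their shortest-path counts.
        delta = dict.fromkeys(adjacency, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                share = sigma[v] / sigma[w] * (1.0 + delta[w])
                edge_bc[tuple(sorted((v, w)))] += share
                delta[v] += share
            if w != source:
                node_bc[w] += delta[w]

    # Every unordered pair was counted once from each end, so halve the totals.
    return ({v: s / 2 for v, s in node_bc.items()},
            {e: s / 2 for e, s in edge_bc.items()})


if __name__ == "__main__":
    # Hypothetical partner graph: one publisher, one SSP, one exchange, two DSPs.
    partners = {
        "publisher": ["ssp"],
        "ssp": ["publisher", "exchange"],
        "exchange": ["ssp", "dsp_a", "dsp_b"],
        "dsp_a": ["exchange"],
        "dsp_b": ["exchange"],
    }
    nodes, edges = betweenness(partners)
    print(nodes)
    print(edges)
```

In this toy graph the exchange has the highest node betweenness, since every path between a DSP and the publisher side runs through it. If networkx is available, `betweenness_centrality` and `edge_betweenness_centrality` with `normalized=False` should give the same raw counts without rolling your own.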

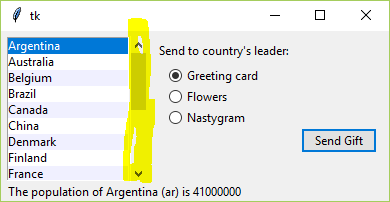

- Continuing with Tkinter and ttk

- That was easy!

- And now there is a scrollbar, which is a little odd to add. The listbox and the scrollbar are separate widgets that you have to explicitly link to each other and place in the same ttk.Frame:

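```python
# module-level imports the excerpt relies on (N, W, E, S, VERTICAL, Listbox, ttk)
from tkinter import *
from tkinter import ttk

# (inside a method of the app class, where self.content_frame and self.cnames already exist)
# make the frame for the listbox and the scroller to live in
self.lbox_frame = ttk.Frame(self.content_frame)
# place the frame
self.lbox_frame.grid(column=0, row=0, rowspan=6, sticky=(N, W, E, S))
# create the listbox and the scrollbar
self.lbox = Listbox(self.lbox_frame, listvariable=self.cnames, height=5)
lbox_scrollbar = ttk.Scrollbar(self.lbox_frame, orient=VERTICAL, command=self.lbox.yview)
# after both components have been made, have the lbox point at the scroller
self.lbox['yscrollcommand'] = lbox_scrollbar.set
# grid both widgets side by side inside lbox_frame (listbox in column 0, scrollbar in column 1)
self.lbox.grid(column=0, row=0, sticky=(N, W, E, S))
lbox_scrollbar.grid(column=1, row=0, sticky=(N, S))
```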

- If you get the parent wrong, you can end up with a scrollbar in some other frame that is still connected to your target widget. Here’s what happens if the scrollbar's parent is root:

- And here it is inside the lbox frame, as in the code example above:

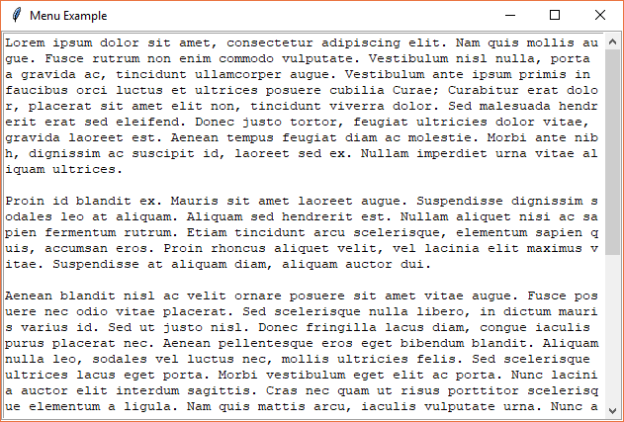

- The fully formed examples are no more. Now putting together a menu app with a text widget. Got the text running with a scrollbar, and everything makes sense. Next up: the menus…

- Here’s the version of the app with working menus:

- That was easy!
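The app itself isn't shown, so here is a minimal sketch of the same pieces, a Text widget with a linked scrollbar plus a menubar, following the patterns in the TkDocs tutorial. The `build_editor` function, the window title, and the Clear/Quit commands are hypothetical placeholders, not the original app.

```python
import tkinter as tk
from tkinter import ttk


def build_editor(root):
    """Build a Text widget with a linked vertical scrollbar and a File menu (hypothetical app)."""
    root.title("Menu demo")
    root.option_add("*tearOff", False)  # must be set before the menus are created

    frame = ttk.Frame(root, padding=5)
    frame.grid(column=0, row=0, sticky="nsew")
    root.columnconfigure(0, weight=1)
    root.rowconfigure(0, weight=1)

    # Text + scrollbar: separate widgets, linked in both directions, same parent frame.
    text = tk.Text(frame, width=60, height=20, wrap="word")
    scrollbar = ttk.Scrollbar(frame, orient=tk.VERTICAL, command=text.yview)
    text["yscrollcommand"] = scrollbar.set
    text.grid(column=0, row=0, sticky="nsew")
    scrollbar.grid(column=1, row=0, sticky="ns")
    frame.columnconfigure(0, weight=1)
    frame.rowconfigure(0, weight=1)

    # Menus: a menubar attached to the root window, with a cascading File submenu.
    menubar = tk.Menu(root)
    file_menu = tk.Menu(menubar)
    file_menu.add_command(label="Clear", command=lambda: text.delete("1.0", "end"))
    file_menu.add_separator()
    file_menu.add_command(label="Quit", command=root.destroy)
    menubar.add_cascade(label="File", menu=file_menu)
    root["menu"] = menubar
    return text


if __name__ == "__main__":
    root = tk.Tk()
    build_editor(root)
    root.mainloop()
```

Setting `*tearOff` to False removes the dashed tear-off line Tk adds to menus by default, and the scrollbar is wired to the Text widget the same way as the Listbox one.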

- For seminar: Predictive Analysis by Leveraging Temporal User Behavior and User Embeddings

- The rapid growth of mobile devices has resulted in the generation of a large number of user behavior logs that contain latent intentions and user interests. However, exploiting such data in real-world applications is still difficult for service providers due to the complexities of user behavior over a sheer number of possible actions that can vary according to time. In this work, a time-aware RNN model, TRNN, is proposed for predictive analysis from user behavior data. First, our approach predicts the next user action more accurately than the baselines including the n-gram models as well as two recently introduced time-aware RNN approaches. Second, we use TRNN to learn user embeddings from sequences of user actions and show that overall the TRNN embeddings outperform conventional RNN embeddings. Similar to how word embeddings benefit a wide range of tasks in natural language processing, the learned user embeddings are general and could be used in a variety of tasks in the digital marketing area. This claim is supported empirically by evaluating their utility in user conversion prediction, and preferred application prediction. According to the evaluation results, TRNN embeddings perform better than the baselines including Bag of Words (BoW), TFIDF and Doc2Vec. We believe that TRNN embeddings provide an effective representation for solving practical tasks such as recommendation, user segmentation and predictive analysis of business metrics.
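The abstract doesn't describe the TRNN architecture, so this is not it. As a rough sketch of what can make an RNN "time-aware", the toy cell below shrinks the carried-over hidden state by exp(-Δt/τ) before mixing in the next action, then uses the final hidden state as a user embedding. The decay form, the time constant `tau`, the `TimeDecayRNN` name, and the random untrained weights are all assumptions for illustration; training on next-action prediction is left out.

```python
import math
import random


class TimeDecayRNN:
    """Toy time-aware RNN (hypothetical, not the paper's TRNN):

    h_t = tanh(W[:, a_t] + U @ (g(dt) * h_{t-1})), with g(dt) = exp(-dt / tau).
    Weights are random and untrained; this only illustrates the data flow.
    """

    def __init__(self, n_actions, hidden_size, tau=3600.0, seed=0):
        rng = random.Random(seed)
        self.hidden_size = hidden_size
        self.tau = tau  # assumed decay time constant, in seconds
        # Column a of W acts as the embedding of action id a (same as W @ one_hot(a)).
        self.W = [[rng.gauss(0, 0.5) for _ in range(n_actions)] for _ in range(hidden_size)]
        self.U = [[rng.gauss(0, 0.5) for _ in range(hidden_size)] for _ in range(hidden_size)]

    def step(self, h_prev, action, dt):
        """One recurrent update; long gaps (large dt) shrink the carried-over state."""
        decay = math.exp(-dt / self.tau)
        decayed = [decay * h for h in h_prev]
        return [
            math.tanh(
                self.W[i][action]
                + sum(self.U[i][j] * decayed[j] for j in range(self.hidden_size))
            )
            for i in range(self.hidden_size)
        ]

    def embed(self, events):
        """events: list of (action_id, seconds_since_previous_event); returns the final state."""
        h = [0.0] * self.hidden_size
        for action, dt in events:
            h = self.step(h, action, dt)
        return h
```

For example, `TimeDecayRNN(n_actions=5, hidden_size=4).embed([(0, 0.0), (3, 10.0)])` returns a 4-number vector. Once the weights are trained, vectors like that are the kind of user embedding the abstract feeds into conversion and preferred-application prediction.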
