Here we are, one more trip around the sun

How Do Vision Transformers Work?

- The success of multi-head self-attentions (MSAs) for computer vision is now indisputable. However, little is known about how MSAs work. We present fundamental explanations to help better understand the nature of MSAs. In particular, we demonstrate the following properties of MSAs and Vision Transformers (ViTs): (1) MSAs improve not only accuracy but also generalization by flattening the loss landscapes. Such improvement is primarily attributable to their data specificity, not long-range dependency. On the other hand, ViTs suffer from non-convex losses. Large datasets and loss landscape smoothing methods alleviate this problem; (2) MSAs and Convs exhibit opposite behaviors. For example, MSAs are low-pass filters, but Convs are high-pass filters. Therefore, MSAs and Convs are complementary; (3) Multi-stage neural networks behave like a series connection of small individual models. In addition, MSAs at the end of a stage play a key role in prediction. Based on these insights, we propose AlterNet, a model in which Conv blocks at the end of a stage are replaced with MSA blocks. AlterNet outperforms CNNs not only in large data regimes but also in small data regimes. The code is available at this https URL.

SBIRs

- It was a very busy day yesterday. Early morning meeting before the actual meeting, then lots of discussion on how (basically) to fit a simulation into a TLM. Then a long discussion with Dave. Then a short lunch break where I got to go for a walk in the February cold. Then demos, then another meeting with Dave.

Then about 45 minutes to spin down before

Waikato

- Where we went over Tamahau’s progress, which is good.

Ended the day watching Mythbusters encasing Adam Savage in Bubble Wrap.

So, for today…

SBIRs

- Responded to Dave’s email about tokenization and overall project approach. Talked about PGN as an example of simulator tokenizing. No meetings on the calendar, so I’m not sure what happens next.

- Put together possible stories for next sprint
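
A rough sketch of the PGN idea mentioned above, to make "simulator tokenizing" concrete. This is only an illustration under my own assumptions, not the project's approach: the pattern `TOKEN_RE` and the function `tokenize_pgn_movetext` are hypothetical names, and the regex only covers plain movetext (move numbers, SAN moves, results), not comments, variations, or tag pairs.

```python
import re

# Hypothetical token pattern for PGN movetext; an assumption for illustration,
# not a full PGN parser. Alternatives are tried left to right, so results
# such as "1-0" are checked before move numbers such as "1.".
TOKEN_RE = re.compile(
    r"(?P<result>1-0|0-1|1/2-1/2|\*)"
    r"|(?P<move_number>\d+\.(?:\.\.)?)"
    r"|(?P<san>O-O(?:-O)?[+#]?"
    r"|[KQRBN]?[a-h]?[1-8]?x?[a-h][1-8](?:=[QRBN])?[+#]?)"
)


def tokenize_pgn_movetext(movetext):
    """Split PGN movetext into (kind, text) pairs.

    kind is one of "result", "move_number", or "san".
    Anything the pattern does not recognize is silently skipped.
    """
    return [(m.lastgroup, m.group()) for m in TOKEN_RE.finditer(movetext)]


if __name__ == "__main__":
    for kind, text in tokenize_pgn_movetext("1. e4 e5 2. Nf3 Nc6 3. O-O 1/2-1/2"):
        print(kind, text)
```

The point is that a game (or any simulator run) written in a compact, regular notation already breaks down into a small vocabulary of discrete tokens, which is the property that makes it a handy example.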

Book

- If today turns out to be a light day, I’m going to start roughing out the social dominance chapter

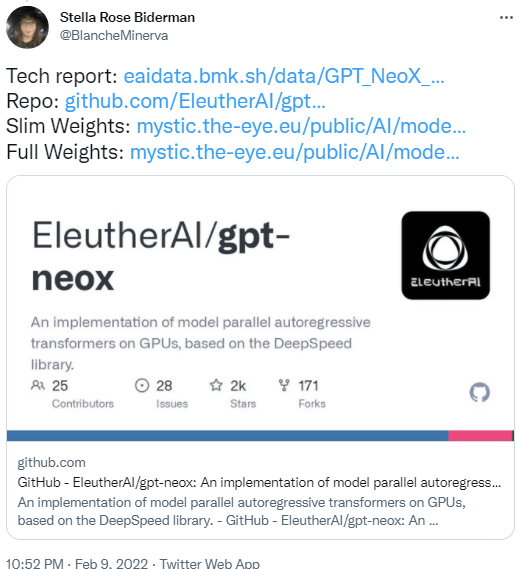

GPT-Agents

- Need to put together a landscape for today’s meeting. Actually got caught up in just getting the results from one prompt: “Here’s a short list of racist terms in wide use today. Some may surprise you:”. Not really even close to saturation, and I already have pages of results.

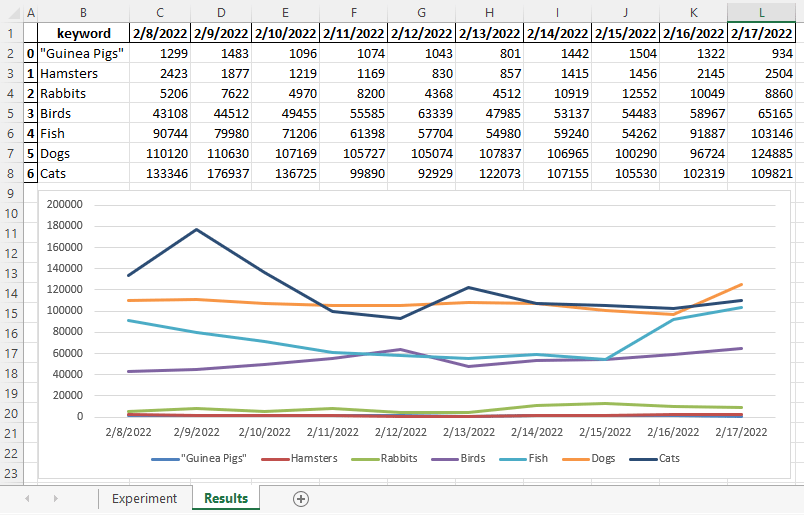

- 3:30 Meeting. Fun! I think we’re going to look at food keyword generation because it’s less horrible than all the racist terms the GPT can come up with
