Ride through the park today and ask about pavilion rental – done

GPT-2 Agents

- Rewrite intro, including the finding that these texts seem to be matched in some way. Done

- Uploaded the new version to arXiv. Should be live by tomorrow

- Read Language Models as Knowledge Bases, and added to the lit review.

- Discovered Antoine Bosselut, who was lead author on the following papers. Need to add them to the future work section

- Dynamic Knowledge Graph Construction for Zero-shot Commonsense Question Answering

- Understanding narratives requires dynamically reasoning about the implicit causes, effects, and states of the situations described in text, which in turn requires understanding rich background knowledge about how the social and physical world works. At the core of this challenge is how to access contextually relevant knowledge on demand and reason over it.

In this paper, we present initial studies toward zero-shot commonsense QA by formulating the task as probabilistic inference over dynamically generated commonsense knowledge graphs. In contrast to previous studies for knowledge integration that rely on retrieval of existing knowledge from static knowledge graphs, our study requires commonsense knowledge integration where contextually relevant knowledge is often not present in existing knowledge bases. Therefore, we present a novel approach that generates contextually relevant knowledge on demand using generative neural commonsense knowledge models.

Empirical results on the SocialIQa and StoryCommonsense datasets in a zero-shot setting demonstrate that using commonsense knowledge models to dynamically construct and reason over knowledge graphs achieves performance boosts over pre-trained language models and using knowledge models to directly evaluate answers.

- COMET: Commonsense Transformers for Automatic Knowledge Graph Construction

- We present the first comprehensive study on automatic knowledge base construction for two prevalent commonsense knowledge graphs: ATOMIC (Sap et al., 2019) and ConceptNet (Speer et al., 2017). Contrary to many conventional KBs that store knowledge with canonical templates, commonsense KBs only store loosely structured open-text descriptions of knowledge. We posit that an important step toward automatic commonsense completion is the development of generative models of commonsense knowledge, and propose COMmonsEnse Transformers (COMET) that learn to generate rich and diverse commonsense descriptions in natural language. Despite the challenges of commonsense modeling, our investigation reveals promising results when implicit knowledge from deep pre-trained language models is transferred to generate explicit knowledge in commonsense knowledge graphs. Empirical results demonstrate that COMET is able to generate novel knowledge that humans rate as high quality, with up to 77.5% (ATOMIC) and 91.7% (ConceptNet) precision at top 1, which approaches human performance for these resources. Our findings suggest that using generative commonsense models for automatic commonsense KB completion could soon be a plausible alternative to extractive methods.

- Dynamic Knowledge Graph Construction for Zero-shot Commonsense Question Answering

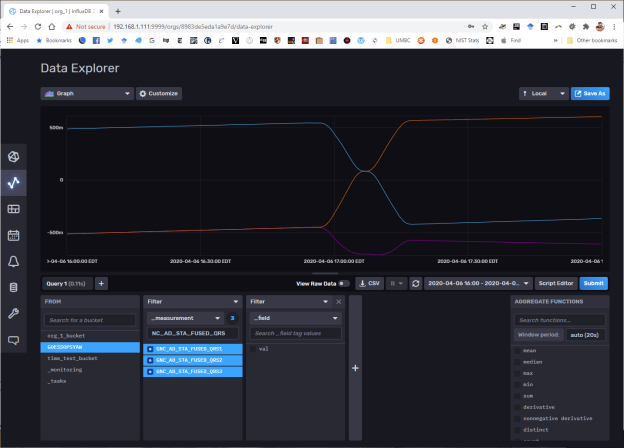

GOES

- 10:00 sim status meeting – planning to fully evaluate off-axis rotation by Monday, then characterize Rwheel contribution, adjust the control system and start commanding vehicle rotations by the end of the week? Seems ambitious, but what the hell.

- 2:00 status meeting

- Anything about GVSETS? Yup: Meeting Wed 9/16/2020 9:00 AM – 10:00 AM

JuryRoom

- 5:30 meeting. Discuss proposal and additional meetings

Book

- Transfer more content
