Get tix for ET 2020

7:00 – 4:30 PhD

- Starting slides as a way to do the chapter overviews and summaries

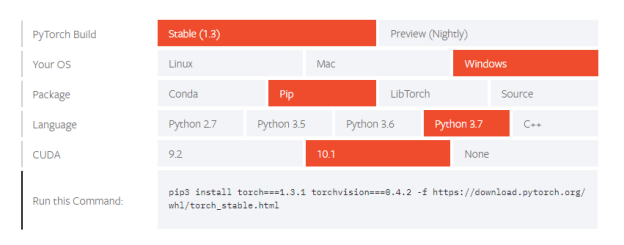

- GPT-2 agents

- Got rid of Hugging Face’s transformers library. Too much is hidden to understand what it is doing

- Aaron found a couple of other projects on GitHub – trying those

- Downloaded the 715M model and associated files
