Rubrix is a production-ready Python framework for exploring, annotating, and managing data in NLP projects.

Getting started with 3D content for synthetic data (Unity)

More reviews

SBIRs

- 9:15 Standup. Not sure what to talk about here, given the new schedule craziness.

- It also occurs to me that, since I’ll be adapting my academic research code to produce the demo, no new IP is being developed for anyone as part of this effort.

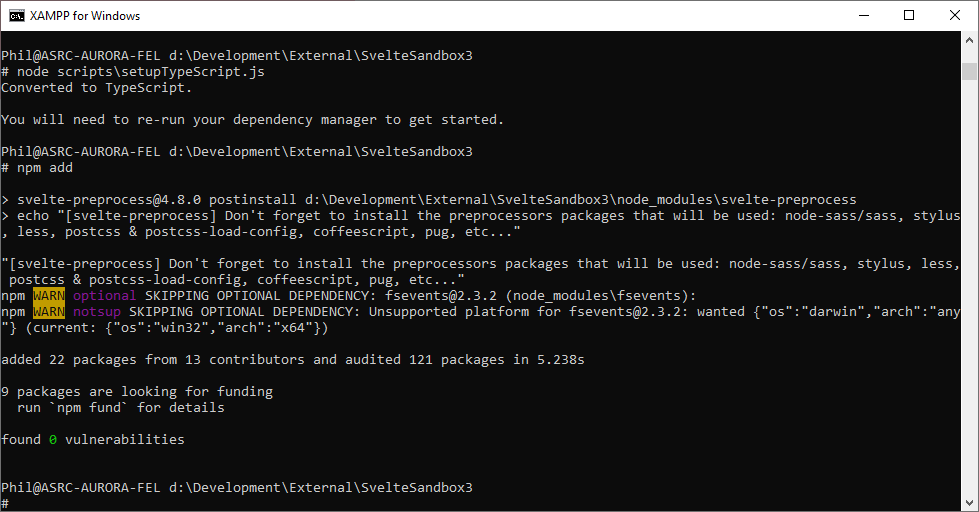

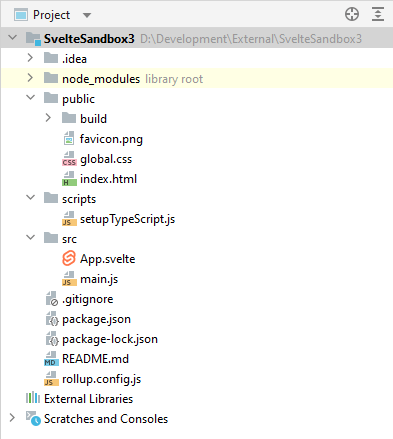

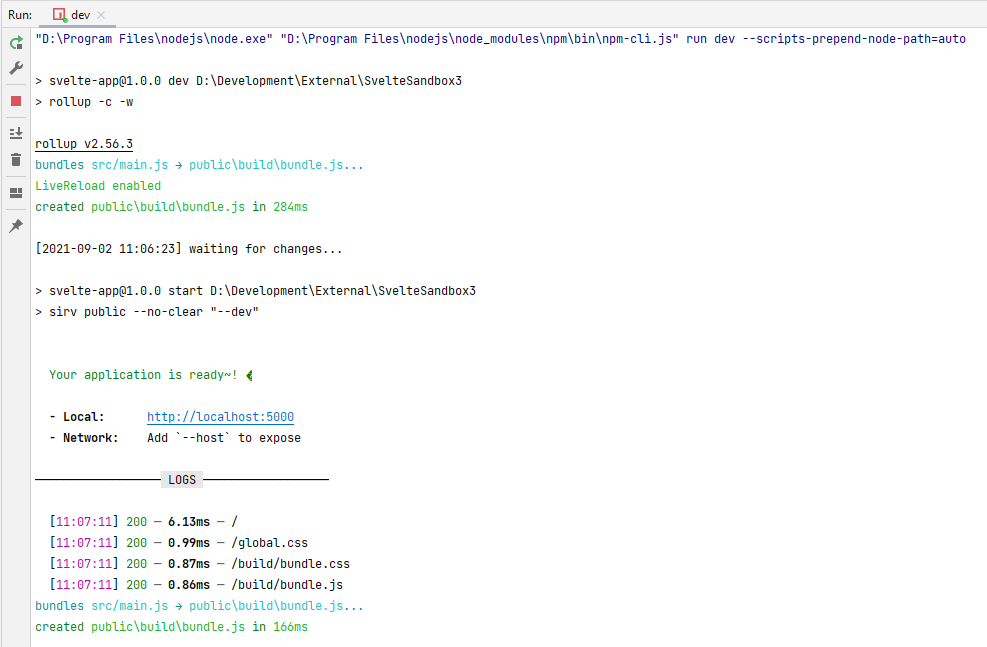

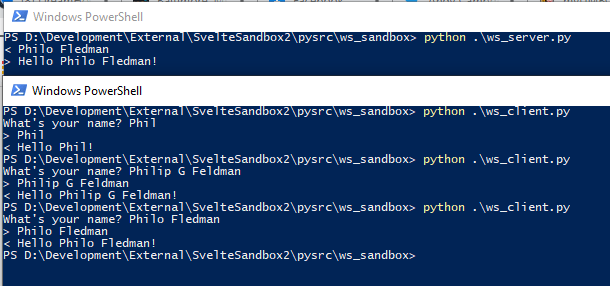

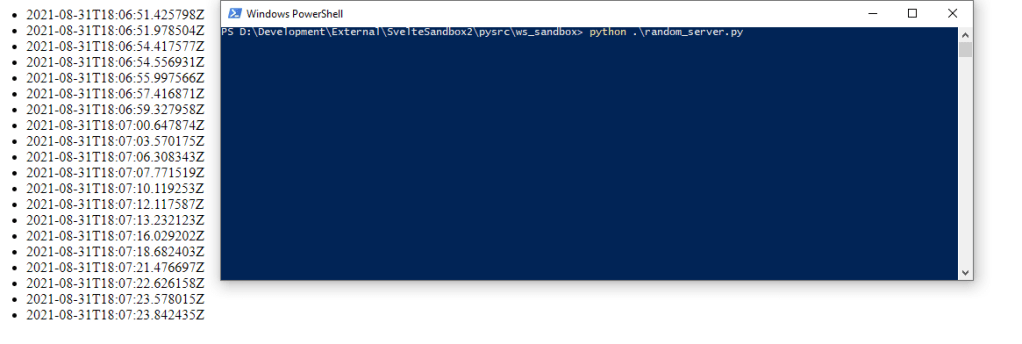

- More poking at Svelte with Zach? Some progress, but I still can’t get it to switch pages.

- 11:00 Kickoff meeting – looks like we have a bit more time

- 2:00 Adversarial reinforcement tagup

GPT Agents

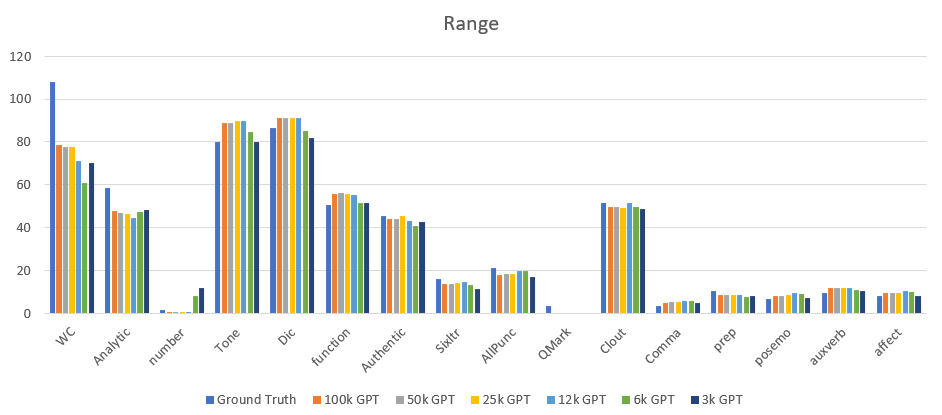

- Need to generate new tweets from the chinavirus, covid, and sars-cov-2 models using the prompt ‘[[[’ as a baseline to compare with the ground truth – done!

- Need to sample ground truth and put it in the gpt_experiments tables
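A minimal sketch of the ground-truth sampling step, assuming an in-memory SQLite database as a stand-in for the real one. Only the `gpt_experiments` name comes from the post; the column layout (`source`, `keyword`, `content`), the `sample_ground_truth` helper, and the placeholder tweets are hypothetical. Tagging each row with a `source` is one way to let ground-truth rows and the ‘[[[’-prompted generations sit side by side for comparison.

```python
import random
import sqlite3

# Hypothetical schema: the real gpt_experiments tables and their columns
# are not described in the post, so this layout is an assumption.
SCHEMA = """
CREATE TABLE IF NOT EXISTS gpt_experiments (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    source TEXT NOT NULL,   -- 'ground_truth' or a model name
    keyword TEXT NOT NULL,  -- e.g. 'chinavirus', 'covid', 'sars-cov-2'
    content TEXT NOT NULL
)
"""


def sample_ground_truth(conn, keyword, tweets, k, seed=0):
    """Draw up to k tweets without replacement and store them as ground truth."""
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    sample = rng.sample(tweets, min(k, len(tweets)))
    conn.executemany(
        "INSERT INTO gpt_experiments (source, keyword, content) VALUES (?, ?, ?)",
        [("ground_truth", keyword, t) for t in sample],
    )
    conn.commit()
    return sample


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    # Placeholder tweets; the real corpus is not part of the post.
    corpus = [f"placeholder covid tweet {i}" for i in range(100)]
    sample_ground_truth(conn, "covid", corpus, k=10)
    count = conn.execute(
        "SELECT COUNT(*) FROM gpt_experiments WHERE source = 'ground_truth'"
    ).fetchone()[0]
    print(count)
```

Sampling without replacement under a fixed seed keeps the baseline comparison repeatable; generated tweets from each model could then be inserted with their model name in `source`.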
