“Before we say “explainable AI” we must decide WHAT is it that we wish to explain. Are we about to explain the function that the system fitted to the data? or are we about to explain the world behind the data? Science writers seem unaware of the difference.” – Judea Pearl

Stanford CS234: Reinforcement Learning | Winter 2019 | Lecture 1 – Introduction

- From the syllabus: To realize the dreams and impact of AI requires autonomous systems that learn to make good decisions. Reinforcement learning is one powerful paradigm for doing so, and it is relevant to an enormous range of tasks, including robotics, game playing, consumer modeling and healthcare. This class will provide a solid introduction to the field of reinforcement learning and students will learn about the core challenges and approaches, including generalization and exploration. Through a combination of lectures, and written and coding assignments, students will become well versed in key ideas and techniques for RL. Assignments will include the basics of reinforcement learning as well as deep reinforcement learning — an extremely promising new area that combines deep learning techniques with reinforcement learning.

GPT-Agents

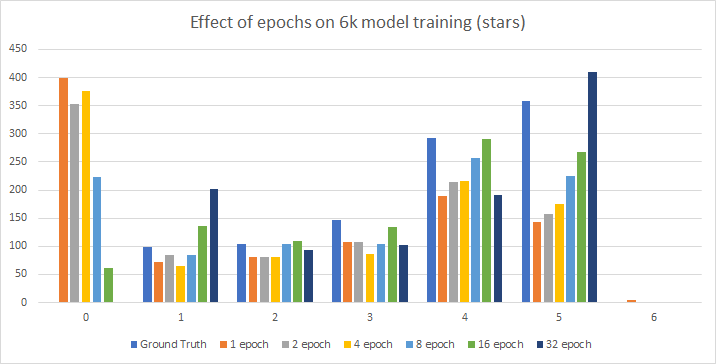

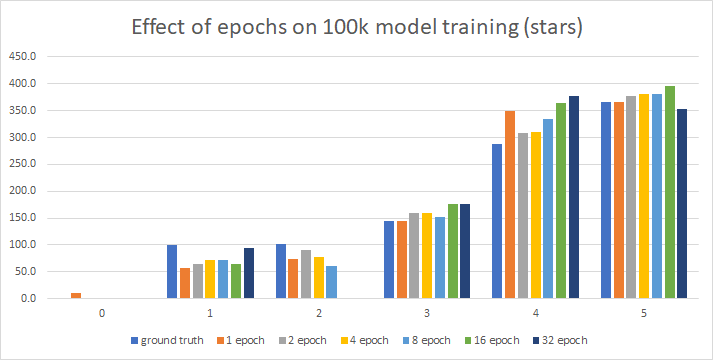

- Generate synthesized data – running

- Calculate sentiment

- Create spreadsheets (make a new directory for review-stars)

SBIR(s)

- 9:15 standup – done

- 10:30 NASA meeting – done. Write up a 3-page version that describes a minimum viable project (MVP) and then the future work that extends it

- Ping Zach for a meeting to set up project – done

- Start framing out paper on Overleaf – done

- EXPENSE REPORT – this is chewing up hours. I STILL don’t have a code that works

JuryRoom

- Write some rants for Tamahau
