One year ago things were pretty crazy here

GPT Agents

- From *On the Reliability and Validity of Detecting Approval of Political Actors in Tweets* (Section 4.2), as an example of keyword SOTA:

- We evaluate OTS and custom methods on the following datasets. While some of these datasets have common targets, for example, Trump is present in four of them, they are all collected in different periods of time, with different keywords (cf. Appendix B). All datasets have stance labels of ‘favor’, ‘against’, and ‘none’ towards the targets. (EMNLP)

- Finished generating the new data. Now we get to see if it works!

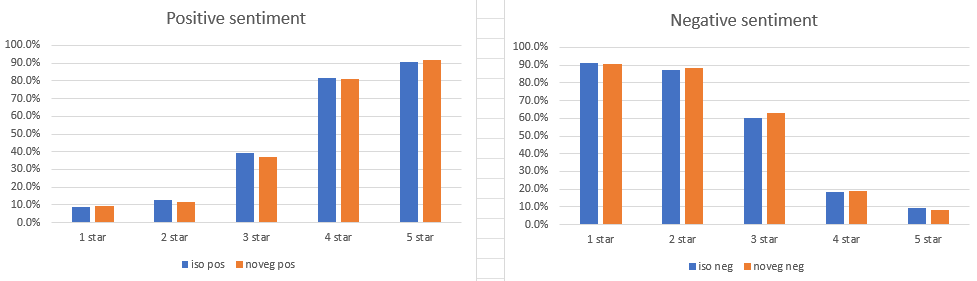

- It’s pretty good. Here are the two GPT models: one trained on the first 50k reviews of the American dataset (iso), and the other trained on the first 50k reviews of the American dataset that do not contain the string “vegetarian options”. The probes are:

- no vegetarian options

- some vegetarian options

- several vegetarian options

- many vegetarian options

- The results from the two models are basically identical

- Now I need to compare each model’s response against the ground truth for each of the probes
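
As a rough sketch of the setup described above, the two training subsets and a per-probe ground-truth count could be built along these lines. The function names (`first_n`, `probe_counts`), the in-memory list of review strings, and the case-insensitive matching are all assumptions made for illustration, not the code actually used for these experiments.

```python
from itertools import islice
from typing import Dict, Iterable, List, Optional

# Phrase held out of the second training set, and the four probes from the post.
PHRASE = "vegetarian options"
PROBES = [
    "no vegetarian options",
    "some vegetarian options",
    "several vegetarian options",
    "many vegetarian options",
]


def first_n(reviews: Iterable[str], n: int, exclude: Optional[str] = None) -> List[str]:
    """Return the first n reviews, skipping any that contain `exclude`.

    Matching is case-insensitive here, which is an assumption; the post only
    says the reviews "do not contain the string".
    """
    kept = (r for r in reviews if exclude is None or exclude not in r.lower())
    return list(islice(kept, n))


def probe_counts(reviews: Iterable[str], probes: List[str]) -> Dict[str, int]:
    """Count how many reviews contain each probe phrase (case-insensitive)."""
    lowered = [r.lower() for r in reviews]
    return {p: sum(p in r for r in lowered) for p in probes}


if __name__ == "__main__":
    # Tiny made-up sample standing in for the review dataset.
    sample = [
        "Great burgers",
        "No vegetarian options here",
        "Many vegetarian options!",
        "Nice patio",
    ]
    print(first_n(sample, 2))                  # unfiltered subset
    print(first_n(sample, 2, exclude=PHRASE))  # subset without the phrase
    print(probe_counts(sample, PROBES))        # ground-truth counts per probe
```

The counts from `probe_counts` on the full dataset would give one possible ground truth to set next to how each model responds to the same probe.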
