7:00 – 4:30 VTX

- Took the weekend off for the ESCN. Bailed on Saturday because of rain, then dodged rain for two days, then got a nice ride in on Tuesday.

- Chatting with Aaron last night, I discovered that the REST API won’t work for Demo. I’ll need to get a new SQL dump from Heath. No, actually it works just fine in that it is accessible, but any query that returns something other than an empty set times out.

- Need to try to build a new jar file for the CorpusManager so it can have its own executable. Put the Manifest in the CM directory? Not sure how to do that.
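
A note on the manifest question: what makes a jar runnable with `java -jar` is a `Main-Class` entry in its `META-INF/MANIFEST.MF`. Here is a minimal sketch of producing that manifest text with the standard `java.util.jar` classes. The class name `ManifestSketch` and the main class `com.example.CorpusManager` are made up for illustration; the real package name isn't recorded here.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.util.jar.Attributes;
import java.util.jar.Manifest;

// Hypothetical helper, not part of the actual CorpusManager project.
class ManifestSketch {
    // Builds the manifest text that names the entry point of a runnable jar.
    static String manifestFor(String mainClass) throws IOException {
        Manifest manifest = new Manifest();
        Attributes attrs = manifest.getMainAttributes();
        // Manifest-Version must be set, otherwise write() emits no main attributes.
        attrs.put(Attributes.Name.MANIFEST_VERSION, "1.0");
        attrs.put(Attributes.Name.MAIN_CLASS, mainClass);

        ByteArrayOutputStream out = new ByteArrayOutputStream();
        manifest.write(out);
        return new String(out.toByteArray(), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) throws IOException {
        // Assumed class name; substitute the real fully qualified main class.
        System.out.print(manifestFor("com.example.CorpusManager"));
    }
}
```

The same `Manifest` object can be handed to a `JarOutputStream` constructor, or the printed text can be saved to a file and passed to the `jar` tool, so the manifest does not have to live in any particular source directory.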

- Yay Stack Overflow!

- Writing

- Looks like my old laptop finally bit the dust. Chromebook time?

- Working on the CorpusManager to pull in the links listed in the config file. Done!

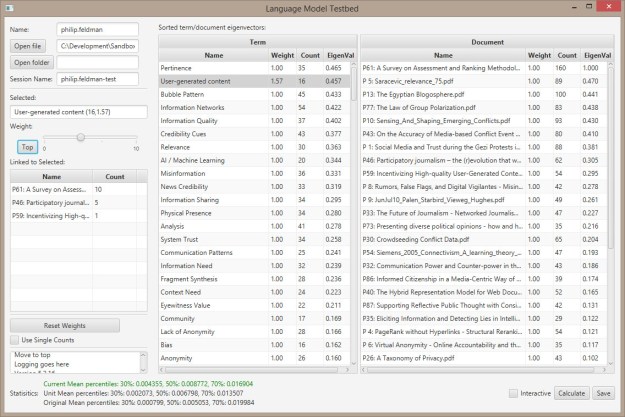

- So I’m having all kinds of problems getting the flag info from Jeremy’s REST service. I did realize that I can use the dashboard though, harvest the URLs by following the links, and build my list that way. Except that the flags are crap. Back to Moby Dick for the moment.

- Actually, those are pretty bad too. Margarita put three URLs up on Confluence.

- Got URL scanning done through the config file.
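
For the record, here is roughly what reading a URL list out of a config file can look like. This is only a sketch under assumptions: it supposes a `.properties`-style file with a comma-separated value, and the key `corpus.urls` and the class name `ConfigUrls` are invented for the example, not taken from the actual CorpusManager code.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

// Hypothetical helper illustrating one way to keep the URL list in a config file.
class ConfigUrls {
    // Returns the trimmed, non-empty entries of a comma-separated property value.
    static List<String> parseUrls(Properties props, String key) {
        List<String> urls = new ArrayList<>();
        String raw = props.getProperty(key, "");
        for (String part : raw.split(",")) {
            String url = part.trim();
            if (!url.isEmpty()) {
                urls.add(url);
            }
        }
        return urls;
    }
}
```

Keeping the list in the config file means new sources (such as the three URLs from Confluence) can be added without rebuilding the jar.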

- Ingested the first four chapters of Moby Dick. Pretty interesting. I’ll try those three files tomorrow and we’ll see what we’ve got, at least for a sense of .gov sites…
