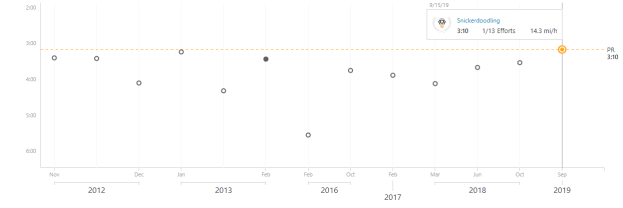

Done with the ride. Here are my stats:

12th International Conference on Agents and Artificial Intelligence – Dammit, the papers are due October 4th. This would be a perfect venue for the GPT2 agents

Novelist Cormac McCarthy’s tips on how to write a great science paper

Unveiling the relation between herding and liquidity with trader lead-lag networks

7:00 – 5:00 ASRC GOES

ASRC AI Workshop 8:00 – 3:00

7:00 – 5:00 ASRC GOES

7:00 – 6:00 ASRC GOES

7:00 – 8:00 ASRC GOES

This makes me happy. Older, but not slower. Yet.

Document: This document describes the Facebook Privacy-Protected URLs-light release, resulting from a collaboration between Facebook and Social Science One. It was originally prepared for Social Science One grantees and describes the dataset’s scope, structure, and fields.

As part of this project, we are pleased to announce that we are making data from the URLs service available to the broader academic community for projects concerning the effect of social media on elections and democracy. This unprecedented dataset consists of web page addresses (URLs) that have been shared on Facebook starting January 1, 2017 through to and including February 19, 2019. URLs are included if shared by more than on average 100 unique accounts with public privacy settings. Read the complete Request for Proposals for more information.

7:00 – 4:00 ASRC GOES

7:00 – 4:30 ASRC GOES

    import tensorflow as tf
    from tensorflow import keras
    from tensorflow_core.python.keras import layers

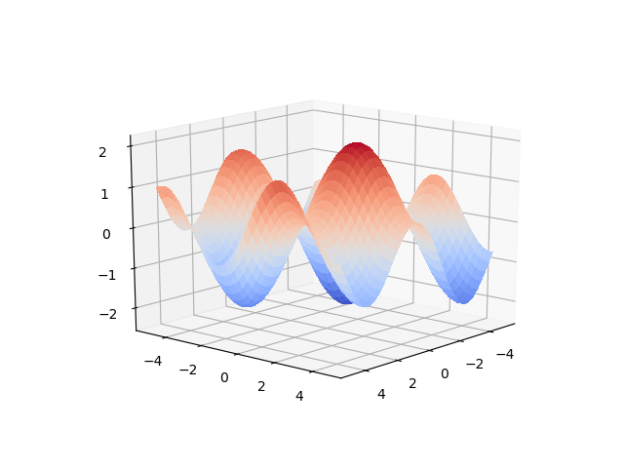

The first coding step was to generate the data. In this case I’m building a numpy matrix that has ten variations on math.sin(), using our timeseriesML utils code. There is a loop that builds the expression string for each new frequency, which is sent off to get back a pandas DataFrame that in this case has 10 sequence rows of 2*sequence_length samples each, so that every row can later be split into a 100-sample input half and a 100-sample output half. First, we set the global sequence_length:

    sequence_length = 100

Then we create the function that will build and concatenate our numpy matrices:

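    def generate_train_test(num_functions, rows_per_function, noise=0.1) -> (np.ndarray, np.ndarray, np.ndarray):
        # each generated row holds 2*sequence_length samples: an input half and an output half
        ff = FF.float_functions(rows_per_function, 2*sequence_length)
        npa = None
        for i in range(num_functions):
            # a new frequency for each function
            mathstr = "math.sin(xx*{})".format(0.005*(i+1))
            #mathstr = "math.sin(xx)"
            # pass the noise argument through rather than hard-coding 0.1
            df2 = ff.generateDataFrame(mathstr, noise=noise)
            npa2 = df2.to_numpy()
            if npa is None:
                npa = npa2
            else:
                ta = np.append(npa, npa2, axis=0)
                npa = ta

        # split each row down the middle into (input, output) halves
        split = np.hsplit(npa, 2)
        return npa, split[0], split[1]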
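For readers without the timeseriesML utilities, here is a minimal numpy-only sketch of the same idea. It is a stand-in, not the library's implementation: the name make_sine_rows is hypothetical, xx is assumed to be the integer sample index, and the noise is assumed to be zero-mean Gaussian with standard deviation equal to the noise argument.

```python
import numpy as np

def make_sine_rows(num_functions, rows_per_function, sequence_length=100,
                   noise=0.1, seed=0):
    """Hypothetical stand-in for the generator: noisy sine rows of 2*sequence_length samples."""
    rng = np.random.default_rng(seed)
    xx = np.arange(2 * sequence_length)  # assumed: xx is the sample index
    blocks = []
    for i in range(num_functions):
        clean = np.sin(xx * 0.005 * (i + 1))  # same frequency schedule as the post
        # rows_per_function noisy copies of this sine (assumed Gaussian noise)
        noisy = clean + rng.normal(0.0, noise, size=(rows_per_function, xx.size))
        blocks.append(noisy)
    full = np.vstack(blocks)
    # split every row down the middle: first half is input, second half is target
    first_half, second_half = np.hsplit(full, 2)
    return full, first_half, second_half

full_mat, train_mat, test_mat = make_sine_rows(10, 10)
print(full_mat.shape, train_mat.shape, test_mat.shape)
```

Stacking the blocks once with np.vstack avoids the repeated np.append copies, but the resulting matrix has the same layout: one block of rows per frequency.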

Now, we build the model. We’re using keras from the TF 2.0 RC0 build, so things look slightly different:

    model = tf.keras.Sequential()
    # Adds a densely-connected layer with sequence_length (100) units to the model:
    model.add(layers.Dense(sequence_length, activation='relu', input_shape=(sequence_length,)))
    # Add another, with 200 units:
    model.add(layers.Dense(200, activation='relu'))
    # Add a linear output layer with sequence_length (100) units:
    model.add(layers.Dense(sequence_length))

    loss_func = tf.keras.losses.MeanSquaredError()
    opt_func = tf.keras.optimizers.Adam(0.01)
    model.compile(optimizer=opt_func,
                  loss=loss_func,
                  metrics=['accuracy'])
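To get a feel for how big this network is, the sketch below counts its trainable parameters by hand. A dense layer with n inputs and m units has n*m weights plus m biases; the helper name dense_params is just for illustration.

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases for one fully connected layer."""
    return n_inputs * n_units + n_units

sequence_length = 100
layer_units = [sequence_length, 200, sequence_length]  # the three Dense layers

n_in = sequence_length  # input_shape=(sequence_length,)
total = 0
for units in layer_units:
    count = dense_params(n_in, units)
    print("Dense({}): {} parameters".format(units, count))
    total += count
    n_in = units  # this layer's output feeds the next layer

print("total:", total)
```

That comes to 50,400 parameters trained on only 100 rows, which is worth keeping in mind when judging how well the fit generalizes.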

We can now fit the model to the generated data:

    full_mat, train_mat, test_mat = generate_train_test(10, 10)
    model.fit(train_mat, test_mat, epochs=10, batch_size=2)
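When reading the loss that fit reports, it helps to know roughly where the floor is. The target half of each row carries its own noise, which no model can predict, so the mean squared error cannot go much below the noise variance. The sketch below checks that numerically, assuming zero-mean Gaussian noise with standard deviation 0.1 (the generator's actual noise distribution is not shown in this post).

```python
import numpy as np

rng = np.random.default_rng(42)
noise_std = 0.1  # assumed to correspond to noise=0.1

xx = np.arange(10000)
clean = np.sin(xx * 0.005)  # what a perfect model would predict
noisy_target = clean + rng.normal(0.0, noise_std, size=clean.shape)

# mean squared error of the perfect prediction against the noisy target
mse = float(np.mean((noisy_target - clean) ** 2))
print("mse of a perfect predictor: {:.4f}".format(mse))
print("noise variance:             {:.4f}".format(noise_std ** 2))
```

Under that assumption, a training loss near 0.01 would already be about as good as it gets.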

There is noise in the data, so the accuracy is not bang on (accuracy is really a classification metric, so it says little about a regression like this one), but the loss is nice. We can see this better in the plots above, which were created using this function:

    def plot_mats(mat:np.ndarray, cluster_size:int, title:str, fig_num:int):
        plt.figure(fig_num)

        i = 0
        for row in mat:
            # rows from the same function share one color from the default cycle
            cstr = "C{}".format(int(i/cluster_size))
            plt.plot(row, color=cstr)
            i += 1

        plt.title(title)

This is called just before the program completes:

    if show_plots:
        plot_mats(full_mat, 10, "Full Data", 1)
        plot_mats(train_mat, 10, "Input Vector", 2)
        plot_mats(test_mat, 10, "Output Vector", 3)
        plot_mats(predict_mat, 10, "Predict", 4)
        plt.show()
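The coloring in plot_mats relies on matplotlib's "CN" color names, where "C0", "C1", and so on index into the default color cycle. A small sketch of the row-to-color mapping, with no plotting involved (cluster_colors is a hypothetical helper name):

```python
def cluster_colors(num_rows, cluster_size):
    """Map each row index to a matplotlib "CN" color name, one color per cluster."""
    return ["C{}".format(i // cluster_size) for i in range(num_rows)]

colors = cluster_colors(100, 10)
# the first ten rows share C0, the next ten share C1, and so on up to C9
print(colors[0], colors[9], colors[10], colors[99])
```

With 10 functions of 10 rows each, every sine frequency gets its own color, which is what makes the clusters easy to tell apart in the plots.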

    import tensorflow as tf
    from tensorflow import keras
    # internal path that works on the TF 2.0 RC0 build; the public path is tensorflow.keras
    from tensorflow_core.python.keras import layers
    import numpy as np
    import pandas as pd
    import matplotlib.pyplot as plt
    import timeseriesML.generators.float_functions as FF

    sequence_length = 100

    def generate_train_test(num_functions, rows_per_function, noise=0.1) -> (np.ndarray, np.ndarray, np.ndarray):
        # each generated row holds 2*sequence_length samples: an input half and an output half
        ff = FF.float_functions(rows_per_function, 2*sequence_length)
        npa = None
        for i in range(num_functions):
            mathstr = "math.sin(xx*{})".format(0.005*(i+1))
            #mathstr = "math.sin(xx)"
            # pass the noise argument through rather than hard-coding 0.1
            df2 = ff.generateDataFrame(mathstr, noise=noise)
            npa2 = df2.to_numpy()
            if npa is None:
                npa = npa2
            else:
                ta = np.append(npa, npa2, axis=0)
                npa = ta

        # split each row down the middle into (input, output) halves
        split = np.hsplit(npa, 2)
        return npa, split[0], split[1]

    def plot_mats(mat:np.ndarray, cluster_size:int, title:str, fig_num:int):
        plt.figure(fig_num)

        i = 0
        for row in mat:
            # rows from the same function share one color from the default cycle
            cstr = "C{}".format(int(i/cluster_size))
            plt.plot(row, color=cstr)
            i += 1

        plt.title(title)

    model = tf.keras.Sequential()
    # Adds a densely-connected layer with sequence_length (100) units to the model:
    model.add(layers.Dense(sequence_length, activation='relu', input_shape=(sequence_length,)))
    # Add another, with 200 units:
    model.add(layers.Dense(200, activation='relu'))
    # Add a linear output layer with sequence_length (100) units:
    model.add(layers.Dense(sequence_length))

    loss_func = tf.keras.losses.MeanSquaredError()
    opt_func = tf.keras.optimizers.Adam(0.01)
    model.compile(optimizer=opt_func,
                  loss=loss_func,
                  metrics=['accuracy'])

    full_mat, train_mat, test_mat = generate_train_test(10, 10)

    model.fit(train_mat, test_mat, epochs=10, batch_size=2)
    model.evaluate(train_mat, test_mat)

    # test against freshly generated data
    full_mat, train_mat, test_mat = generate_train_test(10, 10)
    predict_mat = model.predict(train_mat)

    show_plots = True
    if show_plots:
        plot_mats(full_mat, 10, "Full Data", 1)
        plot_mats(train_mat, 10, "Input Vector", 2)
        plot_mats(test_mat, 10, "Output Vector", 3)
        plot_mats(predict_mat, 10, "Predict", 4)
        plt.show()

7:00 – 4:00 ASRC GOES

ASRC GOES 7:00 – 5:30

7:00 – 2:30 ASRC GOES

7:00 – 4:30 ASRC GOES

7:00 –

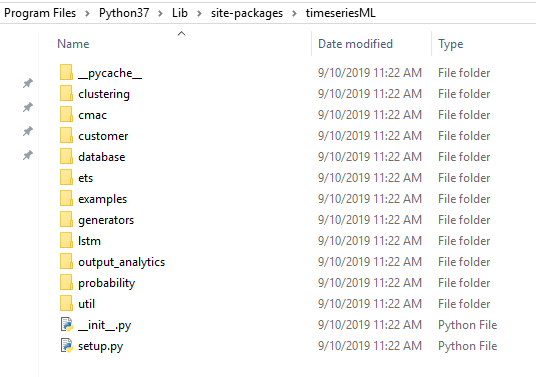

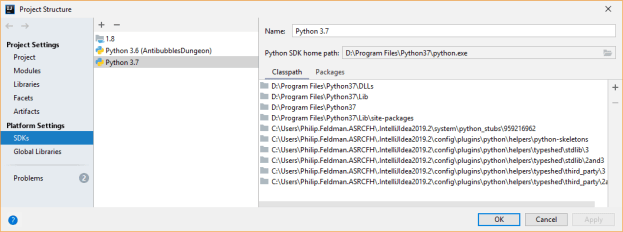

The line "from tensorflow import keras" made IntelliJ complain, but the Python interpreter ran fine. I then installed keras, and IJ stopped complaining. The version(s) appear to be identical, even though I can see that there is a new keras directory in D:\Program Files\Python37\Lib\site-packages. And we know that the interpreter and IDE are pointing to the same place:

    "D:\Program Files\Python37\python.exe" D:/Development/Sandboxes/PyBullet/src/TensorFlow/HelloKeras.py
    2019-09-05 11:30:04.694327: I tensorflow/stream_executor/platform/default/dso_loader.cc:44] Successfully opened dynamic library cudart64_100.dll
    tf.keras version = 2.2.4-tf
    keras version = 2.2.4-tf

Instead of

    from tensorflow.keras import layers

I need to have:

    from keras import layers

I mean, it works, but it’s weird and makes me think that something subtle may be busted…
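One way to dig into this kind of mismatch is to print where each module is actually loaded from, not just its version string. This is a minimal sketch using only the standard library; describe_module is a hypothetical helper, and the two module names in the loop are simply the ones discussed above (either may be missing on a given machine, in which case found is False).

```python
import importlib

def describe_module(name):
    """Report whether a module imports, plus its version string and file location."""
    try:
        mod = importlib.import_module(name)
    except ImportError:
        return {"name": name, "found": False, "version": None, "file": None}
    return {
        "name": name,
        "found": True,
        # not every module defines __version__ or __file__, so fall back to None
        "version": getattr(mod, "__version__", None),
        "file": getattr(mod, "__file__", None),
    }

for name in ("tensorflow.keras", "keras"):
    print(describe_module(name))
```

If the two names report the same version but different file paths, the interpreter is picking up two separate installs, which would match the new keras directory appearing in site-packages.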
