ACSOS

- Upload paper to Overleaf – done!

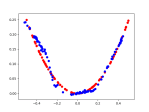

D20

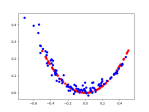

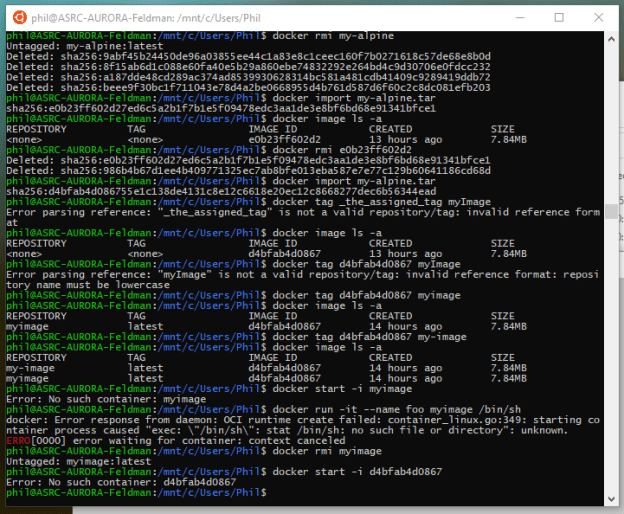

- Fix bug using this:

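```python
import numpy as np
from scipy import stats

# Fit a line to the selected window of samples
slope, intercept, r_value, p_value, std_err = stats.linregress(xsub, ysub)
# slope, intercept = np.polyfit(x, y, 1)
yn = np.polyval([slope, intercept], xsub)

# Estimate how many steps until the trend reaches zero (downward trends only)
steps = 0
if slope < 0:
    steps = abs(y[-1] / slope)

# Build the regression line over the last max_samples points
reg_x = []
reg_y = []
start = len(yl) - max_samples
yval = intercept + slope * start
for i in range(start, len(yl) - offset):
    reg_x.append(i)
    reg_y.append(yval)
    yval += slope
```

- Anything else?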
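To make the fix easier to check in isolation, here is a minimal, self-contained sketch of the same idea: fit a line to the last `max_samples` points, generate the trend line, and estimate the steps until it reaches zero. It swaps in a hand-rolled least-squares fit for `stats.linregress` so it runs with no dependencies. The function names `fit_line` and `trend_line` are made up for illustration, and it assumes the x values are simply the sample indices.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def trend_line(yl, max_samples, offset=0):
    """Fit the last max_samples points (minus `offset` at the end).

    Assumption: x values are the sample indices into yl.
    Returns (slope, intercept, reg_x, reg_y, steps).
    """
    start = max(0, len(yl) - max_samples)
    stop = len(yl) - offset
    xsub = list(range(start, stop))
    ysub = yl[start:stop]
    slope, intercept = fit_line(xsub, ysub)

    # Evaluate the fitted line at each index in the window
    reg_x = xsub
    reg_y = [intercept + slope * x for x in xsub]

    # Steps until the last observed value decays to zero at this slope;
    # left at 0 for flat or rising trends
    steps = abs(ysub[-1] / slope) if slope < 0 else 0
    return slope, intercept, reg_x, reg_y, steps


yl = [10.0, 9.0, 8.0, 7.0, 6.0]
slope, intercept, reg_x, reg_y, steps = trend_line(yl, max_samples=4)
print(slope, intercept, reg_x, reg_y, steps)
```

Computing `reg_y` directly from `intercept + slope * x` avoids the running `yval += slope` accumulation, so the line cannot drift from the fit if the start index changes.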

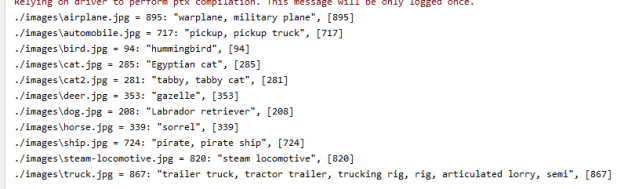

GPT-2 Agents

- Install and test GPT-2 Client

- Failed spectacularly. It depends on a lot of TF1.x modules, such as `tensorflow.contrib.training`, which were removed in TF2. There is an issue filed for this.

- Checked out the project to see if anything could be done. “Fixed” the contrib library, but that just exposed other incompatibilities. Uninstalled.

- Tried using the upgrade tool described here, which did absolutely nothing, as far as I can tell.
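Before attempting another port, it may help to gauge how much TF1.x-only code a project contains. The following is a rough sketch, not part of GPT-2 Client or TensorFlow: the function name `find_tf1_usages` and the pattern list are my own assumptions, and the list is deliberately short and not exhaustive.

```python
import re
from pathlib import Path

# Assumed, non-exhaustive patterns for APIs that exist in TF1.x
# but are absent from the top-level TF2 namespace
TF1_PATTERNS = [
    r"tensorflow\.contrib",
    r"tf\.contrib",
    r"tf\.Session",
    r"tf\.placeholder",
]


def find_tf1_usages(root):
    """Return (path, line number, line) for each line matching a TF1 pattern."""
    pattern = re.compile("|".join(TF1_PATTERNS))
    hits = []
    for path in sorted(Path(root).rglob("*.py")):
        text = path.read_text(encoding="utf-8", errors="ignore")
        for lineno, line in enumerate(text.splitlines(), start=1):
            if pattern.search(line):
                hits.append((str(path), lineno, line.strip()))
    return hits
```

Calling `find_tf1_usages` on the checked-out project directory and counting the hits gives a quick sense of whether patching is feasible or whether each fix will just expose the next failure.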

GOES

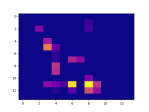

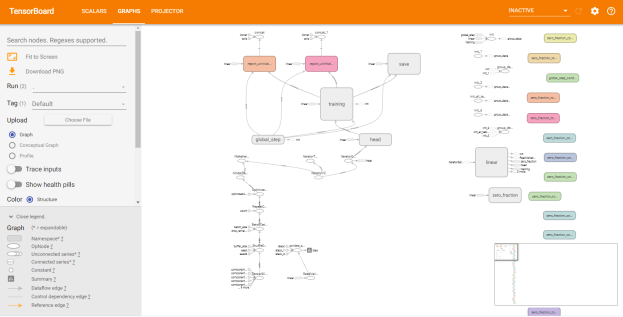

- Continue figuring out GANs

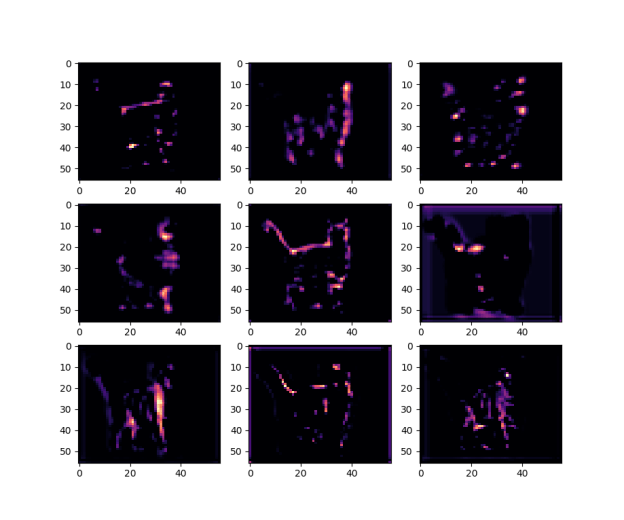

- Here are results using 2 latent dimensions, a matching hint, a line hint, and no hint

- Here are results using 5 latent dimensions, a matching hint, a line hint, and no hint

- Meeting at 10:00 with Vadim and Isaac

- Wound up going over Isaac’s notes for Yaw Flip and learned a lot. He’s going to see if he can get the algorithm used for the maneuver. If so, we can build the control behavior around that. The goal is to minimize energy use and, indirectly, fuel costs.
