One of the “fun” parts of working in ML, for someone with a background in software development rather than academic research, is that lots of hard problems remain unsolved. There are rarely defined ways things “must” be done, and in some cases there are not even rules of thumb for something like implementing a production-capable machine learning system for a specific real-world problem.

For most areas of software engineering, by the time a technology is mature enough for enterprise deployment, it has long since gone through the fire and the flame of academic support, Fortune 50 R&D, and broad ground-level acceptance in the development community. It didn’t take long for distributed computing with Hadoop to be standardized, for example. Web security, index systems for search, relational abstraction tiers, and even the most volatile of production-tier technologies, the JavaScript GUI framework, go through periods of acceptance and conformity before most large organizations try to roll them out. It all makes sense if you consider the cost of migrating your company from a legacy Struts/EJB3.0 app running on Oracle to the latest HTML5 framework with a Hadoop backend. You don’t want to spend months (or years) investing in a major rewrite only to find that it’s entirely out of date by your release. Organizations looking at these kinds of updates want an expectation of longevity for their dollar, so they invest in mature technologies with clear design rules.

There are certainly companies that do not fall into this category: small companies that are more agile and can adopt a technology in the short term to retain relevance (or buzzword compliance), companies funded with external research dollars, and companies that invest money to stay on the bleeding edge. However, I think it’s fair to say that the majority of industry and federal customers are looking for stability and cost efficiency from solved technical problems.

Machine Learning is in the odd position of being so tremendously useful in comparison to prior techniques that companies who would normally wait for the dust to settle, and for development and deployment of these capabilities to become fully commoditized, are dipping their toes in. I wrote in a previous post how a lot of the problems with implementing existing ML algorithms boil down to lifecycle, versioning, deployment, security, etc., but there is another major factor: model optimization.

Any engineer on the planet can download a copy of Keras/TensorFlow and a CSV of their organization’s data and smoosh them together until a number comes out. The problem comes when the number takes an eternity to output and is wrong. In addition to understanding the math that allows things like SGD with backpropagation to work, or why certain activation functions are more effective in certain situations, one of the jobs for data scientists tuning DNN models is to figure out how to optimize the various buttons and knobs in the model to make it as accurate and performant as possible. Because a lot of this work *isn’t* a commodity yet, it’s a painful learning process of tweaking the data sets, adjusting model design or parameters, and rerunning and comparing the results to try to find optimal answers without overfitting. Ironically, the task data scientists are doing is one perfectly suited to machine learning. It’s no surprise to me that Google developed AutoML to optimize their own NN development.

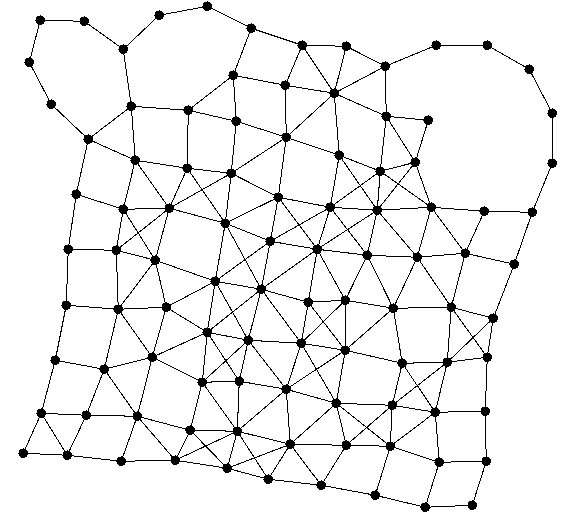

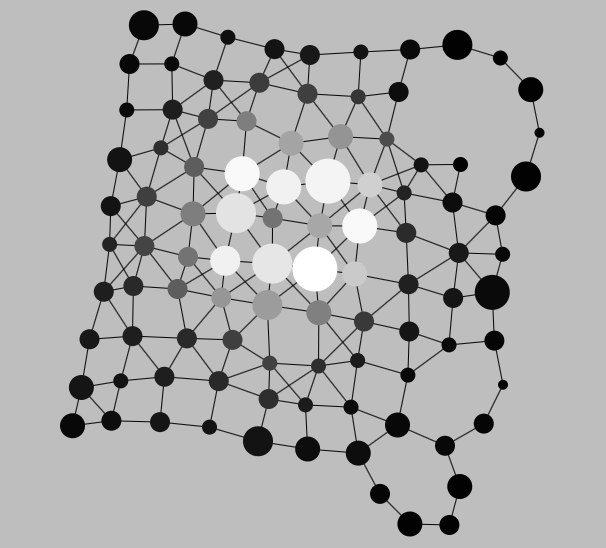

A number of months ago Phil and I worked on an unsupervised learning task related to organizing high-dimensional agents in a medical space. These entities were complex “polychronic” patients with a wide variety of diagnoses and illnesses. Combining fields for patient demographic data with their full medical claim history, we came up with a method to group medically similar patients and look for statistical outliers as indicators of fraud, waste, and abuse. The results were extremely successful and recovered a lot of money for the customer, but the interesting thing technically was how the solution evolved. Our first prototype used a wide variety of clustering algorithms, value decompositions, non-negative matrix factorization, etc., looking for optimal results. All of the selections and subsequent hyperparameters had to be modified by hand, the results evaluated, and further adjustments made.

When it became clear that the results were very sensitive to tiny adjustments, it was obvious that our manual tinkering would miss important gradient changes, so we implemented an optimizer framework. It could evaluate manifold learning techniques for stability and reconstruction error, then cluster the results of the reduction, searching with either a complete fitness landscape walk, a genetic algorithm, or a sub-surface division.
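To make the difference between a complete fitness landscape walk and a genetic algorithm concrete, here is a minimal sketch using only the Python standard library. It is not the framework we built: the search space, the parameter names (`n_components`, `n_clusters`, `n_neighbors`), and the `fitness` function are all hypothetical stand-ins for a real stability or reconstruction-error score.

```python
import itertools
import random

# Hypothetical search space; names and values are illustrative only.
SPACE = {
    "n_components": [2, 3, 5, 8, 13],
    "n_clusters": [2, 4, 6, 8, 10, 12],
    "n_neighbors": [5, 10, 20, 30, 50],
}


def fitness(params):
    """Toy stand-in for a real score (higher is better), peaking at (5, 8, 20)."""
    return -(
        (params["n_components"] - 5) ** 2
        + (params["n_clusters"] - 8) ** 2
        + ((params["n_neighbors"] - 20) / 10) ** 2
    )


def full_walk(space, score):
    """Evaluate every combination: exact, but the cost grows multiplicatively."""
    keys = list(space)
    candidates = (
        dict(zip(keys, combo)) for combo in itertools.product(*space.values())
    )
    return max(candidates, key=score)


def genetic_search(space, score, pop_size=12, generations=15,
                   mutation_rate=0.2, seed=0):
    """Evolve a small population instead of visiting every point."""
    rng = random.Random(seed)
    keys = list(space)
    population = [{k: rng.choice(space[k]) for k in keys} for _ in range(pop_size)]
    for _ in range(generations):
        # Selection: keep the fitter half unchanged as parents (elitism).
        parents = sorted(population, key=score, reverse=True)[: pop_size // 2]
        children = []
        while len(children) < pop_size - len(parents):
            a, b = rng.sample(parents, 2)
            # Uniform crossover: each gene comes from one parent at random.
            child = {k: rng.choice((a[k], b[k])) for k in keys}
            # Mutation: occasionally resample a gene from the full space.
            for k in keys:
                if rng.random() < mutation_rate:
                    child[k] = rng.choice(space[k])
            children.append(child)
        population = parents + children
    return max(population, key=score)


if __name__ == "__main__":
    print("full walk:", full_walk(SPACE, fitness))
    print("genetic:  ", genetic_search(SPACE, fitness))
```

The full walk is guaranteed to find the best grid point but has to score all 150 combinations here; the genetic search scores far fewer candidates per generation and may settle for a near-optimal answer, which is the trade you make once each evaluation means refitting a model.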

While working on tuning my latest test LSTM for time series prediction, I realized we’re dealing with the same issue here. There is no hard and fast rule for questions like “How many LSTM layers should my RNN have?”, “How many LSTM units should each layer have?”, “What loss function and optimizer work best for this type of data?”, “How much dropout should I apply?”, or “Should I use peepholes?”
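Writing those questions down as a search space shows how quickly the combinations pile up. The sketch below is only an illustration: the candidate values for each knob are hypothetical choices of mine, not recommendations, and no model is actually trained.

```python
import math
import random

# Hypothetical candidate values for each knob; not recommendations.
LSTM_SPACE = {
    "num_layers": [1, 2, 3, 4],
    "units_per_layer": [32, 64, 128, 256],
    "dropout": [0.0, 0.2, 0.5],
    "optimizer": ["sgd", "rmsprop", "adam"],
    "loss": ["mse", "mae"],
    "use_peepholes": [False, True],
}


def grid_size(space):
    """Number of distinct configurations in a full grid over the space."""
    return math.prod(len(values) for values in space.values())


def sample_config(space, rng):
    """Draw one configuration uniformly at random from the space."""
    return {name: rng.choice(values) for name, values in space.items()}


if __name__ == "__main__":
    print(grid_size(LSTM_SPACE))  # 4 * 4 * 3 * 3 * 2 * 2 = 576
    print(sample_config(LSTM_SPACE, random.Random(42)))
```

Even this small grid means 576 training runs for an exhaustive sweep, which is why hand tuning only ever covers a sliver of it.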

I kept finding articles during my work saying things like, “There are diminishing returns for more than 4 stacked LSTM layers”. That’s an interesting rule of thumb… what is it based on? Presumably the author’s intuition, drawn from the data sets for the particular problems they were working on. Some rules of thumb attempted to generate a mathematical relationship between the input data size and complexity and the optimal layout of layers and units. This Stack Overflow question has some great responses: https://stackoverflow.com/questions/35520587/how-to-determine-the-number-of-layers-and-nodes-of-a-neural-network

A method recommended by Geoff Hinton is to add layers until you start to overfit your training set. Then you add dropout or another regularization method.
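That heuristic is easy to express as a loop. The sketch below is my own reading of it, not code from Hinton: `train_and_eval` is a hypothetical callback you would supply that trains a model with the given number of layers and returns `(train_loss, val_loss)`, and the overfitting signal (training loss still falling while validation loss rises) is one simple assumption among several you could use. The toy loss curves are made up so the example runs without a framework.

```python
def grow_until_overfit(train_and_eval, max_layers=8, tolerance=0.0):
    """Add layers one at a time until overfitting is first observed.

    train_and_eval(n_layers) -> (train_loss, val_loss) is supplied by the caller.
    Returns the layer count at which training loss fell but validation loss
    rose by more than `tolerance`, or max_layers if that never happens.
    """
    prev_train, prev_val = train_and_eval(1)
    for n_layers in range(2, max_layers + 1):
        train_loss, val_loss = train_and_eval(n_layers)
        if train_loss < prev_train and val_loss > prev_val + tolerance:
            # Overfitting starts here; this is the depth to regularize.
            return n_layers
        prev_train, prev_val = train_loss, val_loss
    return max_layers


def toy_train_and_eval(n_layers):
    """Made-up loss curves for illustration, not real training results."""
    train_loss = 1.0 / n_layers                     # keeps improving with depth
    val_loss = 0.2 + 0.05 * (n_layers - 3) ** 2     # best at 3 layers
    return train_loss, val_loss


if __name__ == "__main__":
    print(grow_until_overfit(toy_train_and_eval))
```

With the toy curves the loop stops at 4 layers, the first depth where validation loss gets worse; in the real workflow that is the point where you would add dropout or another regularizer and re-evaluate.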

Because so much of what Phil and I do tends towards generic, repeatable solutions for real-world problems, I suspect we’ll start with some “common wisdom heuristics” and rapidly move towards writing a similar optimizer for supervised problems.
