Part-Based Models Improve Adversarial Robustness

- We show that combining human prior knowledge with end-to-end learning can improve the robustness of deep neural networks by introducing a part-based model for object classification. We believe that the richer form of annotation helps guide neural networks to learn more robust features without requiring more samples or larger models. Our model combines a part segmentation model with a tiny classifier and is trained end-to-end to simultaneously segment objects into parts and then classify the segmented object. Empirically, our part-based models achieve both higher accuracy and higher adversarial robustness than a ResNet-50 baseline on all three datasets. For instance, the clean accuracy of our part models is up to 15 percentage points higher than the baseline’s, given the same level of robustness. Our experiments indicate that these models also reduce texture bias and yield better robustness against common corruptions and spurious correlations. The code is publicly available.
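The architecture described in that abstract (a part segmenter feeding a tiny classifier, trained end-to-end on both tasks) can be sketched in PyTorch roughly as follows. This is not the authors' code: the layer sizes, the `PartBasedClassifier` and `combined_loss` names, and the `seg_weight` mixing of the two cross-entropy losses are all hypothetical choices for illustration, and the batch is random data.

```python
import torch
import torch.nn.functional as F
from torch import nn


class PartBasedClassifier(nn.Module):
    """Hypothetical part-based model: segmenter -> part masks -> tiny classifier."""

    def __init__(self, num_parts: int, num_classes: int) -> None:
        super().__init__()
        # Stand-in segmenter: one output channel per part plus one for background.
        self.segmenter = nn.Sequential(
            nn.Conv2d(3, 16, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(16, num_parts + 1, kernel_size=1),
        )
        # Tiny classifier that sees only the soft part masks, not the raw pixels.
        self.classifier = nn.Sequential(
            nn.AdaptiveAvgPool2d(4),
            nn.Flatten(),
            nn.Linear((num_parts + 1) * 4 * 4, num_classes),
        )

    def forward(self, images):
        part_logits = self.segmenter(images)     # (N, parts + 1, H, W)
        part_masks = part_logits.softmax(dim=1)  # soft, differentiable part assignment
        return part_logits, self.classifier(part_masks)


def combined_loss(part_logits, class_logits, part_labels, class_labels, seg_weight=0.5):
    # Hypothetical weighted sum of classification and per-pixel segmentation losses.
    cls_loss = F.cross_entropy(class_logits, class_labels)
    seg_loss = F.cross_entropy(part_logits, part_labels)
    return (1 - seg_weight) * cls_loss + seg_weight * seg_loss


torch.manual_seed(0)
model = PartBasedClassifier(num_parts=4, num_classes=10)
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

# Random stand-in batch: 8 RGB images, per-pixel part labels, one class label each.
images = torch.randn(8, 3, 32, 32)
part_labels = torch.randint(0, 5, (8, 32, 32))
class_labels = torch.randint(0, 10, (8,))

# One end-to-end step: the classification loss also backpropagates into the segmenter.
part_logits, class_logits = model(images)
loss = combined_loss(part_logits, class_logits, part_labels, class_labels)
optimizer.zero_grad()
loss.backward()
optimizer.step()
```

Because the classifier only sees the part masks, any gradient it produces has to pass through the segmenter, which is what makes the two tasks train jointly.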

- Named Tensors allow users to give explicit names to tensor dimensions. In most cases, operations that take dimension parameters will accept dimension names, avoiding the need to track dimensions by position. In addition, named tensors use names to automatically check that APIs are being used correctly at runtime, providing extra safety. Names can also be used to rearrange dimensions, for example, to support “broadcasting by name” rather than “broadcasting by position”.
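The named-tensor ideas above (reducing by name, rearranging by name, broadcasting by name, and runtime name checks) look roughly like this in PyTorch. Named tensors are a prototype feature, so creating them may print a warning and not every operator supports them; the dimension names and sizes here are arbitrary choices for the sketch.

```python
import torch

# A batch of images with named dims: N (batch), C (channel), H (height), W (width).
imgs = torch.randn(2, 3, 4, 4, names=("N", "C", "H", "W"))

# Reduce by name instead of by position (here, dims 2 and 3).
channel_totals = imgs.sum(("H", "W"))  # names: ('N', 'C')

# Rearrange dims by name, e.g. into a channels-last layout.
channels_last = imgs.align_to("N", "H", "W", "C")  # shape (2, 4, 4, 3)

# Broadcasting by name: align_as lines the per-channel scale up with the
# 'C' dim of imgs and inserts size-one dims for 'N', 'H' and 'W'.
scale = torch.tensor([0.5, 1.0, 2.0]).refine_names("C")
scaled = imgs * scale.align_as(imgs)

# Runtime name checking: positional broadcasting would silently pair these
# two dims, but because their names differ the addition raises an error.
try:
    torch.ones(3, names=("C",)) + torch.ones(3, names=("W",))
    names_checked = False
except RuntimeError:
    names_checked = True
```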

SBIRs

- 9:00 Sprint review – done

- Added stories for next sprint

- 2:00 MDA Meeting – done. Loren’s sick

- Set up Overleaf for Q3 – done

- Go to DC for forum

GPT Agents

- Load up laptop

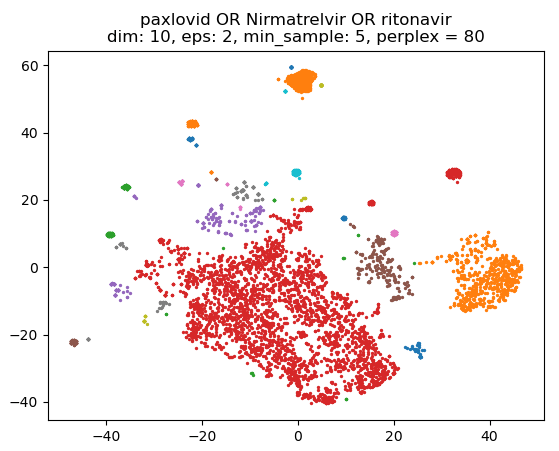

- Get some more done on embeddings? Yup. Split out each step so that changing the clustering (very fast) doesn’t have to wait for loading and manifold reduction

- Save everything back to the DB and make sure the reduced embeddings and clusters are loaded if available
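The split-and-cache approach in the two items above can be sketched as a pipeline where each slow stage stores its output in a database and reuses it when present. This is a minimal sketch under assumptions: SQLite stands in for "the DB", results are stored as JSON, and `cached_stage`, `load_embeddings`, `reduce_dims` and `cluster` are hypothetical placeholders (the "reduction" just keeps two dimensions instead of running a real manifold method).

```python
import json
import sqlite3

calls = {"load": 0, "reduce": 0}  # counts how often the slow stages actually run


def cached_stage(conn, stage_name, compute):
    """Return the stored result for stage_name, computing and saving it only if missing."""
    conn.execute("CREATE TABLE IF NOT EXISTS stage_cache (stage_name TEXT PRIMARY KEY, payload TEXT)")
    row = conn.execute("SELECT payload FROM stage_cache WHERE stage_name = ?", (stage_name,)).fetchone()
    if row is not None:
        return json.loads(row[0])
    result = compute()
    conn.execute(
        "INSERT OR REPLACE INTO stage_cache (stage_name, payload) VALUES (?, ?)",
        (stage_name, json.dumps(result)),
    )
    conn.commit()
    return result


def load_embeddings():
    # Hypothetical slow load step; fixed toy vectors here.
    calls["load"] += 1
    return [[0.1, 0.2, 0.9], [0.15, 0.25, 0.8], [0.9, 0.8, 0.1], [0.85, 0.9, 0.2]]


def reduce_dims(vectors):
    # Placeholder for a slow manifold reduction: just keep the first two dims.
    calls["reduce"] += 1
    return [v[:2] for v in vectors]


def cluster(points, threshold):
    # Placeholder for a fast clustering step: split on the first coordinate.
    return [int(p[0] >= threshold) for p in points]


conn = sqlite3.connect(":memory:")
results = {}
for threshold in (0.5, 0.12):
    embeddings = cached_stage(conn, "embeddings", load_embeddings)
    reduced = cached_stage(conn, "reduced", lambda: reduce_dims(embeddings))
    # Clusters are cached per parameter setting, so a new threshold only reruns this step.
    results[threshold] = cached_stage(conn, f"clusters:{threshold}", lambda: cluster(reduced, threshold))
```

On the second pass only the clustering runs; the embeddings and reduced points come back from the database.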

Book

- 4:00 Meeting