7:00 – 3:30 ASRC GOES

- Dissertation – Done with Human Study!

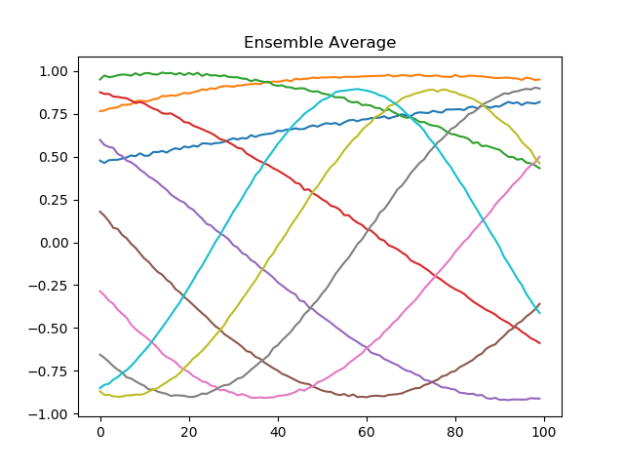

- Evolver


- Work on parameter passing and function storing

- You can use the `*` operator before an iterable to expand it within the function call. For example:

      timeseries_list = [timeseries1, timeseries2, ...]
      r = scikits.timeseries.lib.reportlib.Report(*timeseries_list)

- Here’s the running code with variable arguments:

      def plus_func(v1: float, v2: float) -> float:
          return v1 + v2

      def minus_func(v1: float, v2: float) -> float:
          return v1 - v2

      def mult_func(v1: float, v2: float) -> float:
          return v1 * v2

      def div_func(v1: float, v2: float) -> float:
          return v1 / v2

      if __name__ == '__main__':
          func_array = [plus_func, minus_func, mult_func, div_func]
          vf = EvolveAxis("func", ValueAxisType.FUNCTION, range_array=func_array)
          v1 = EvolveAxis("X", ValueAxisType.FLOAT, parent=vf, min=-5, max=5, step=0.25)
          v2 = EvolveAxis("Y", ValueAxisType.FLOAT, parent=vf, min=-5, max=5, step=0.25)

          for f in func_array:
              result = vf.get_random_val()
              print("------------\nresult = {}\n{}".format(result, vf.to_string()))

- And here’s the output:

      ------------
      result = -1.0
      func: cur_value = div_func
      X: cur_value = -1.75
      Y: cur_value = 1.75
      ------------
      result = -2.75
      func: cur_value = plus_func
      X: cur_value = -0.25
      Y: cur_value = -2.5
      ------------
      result = 3.375
      func: cur_value = mult_func
      X: cur_value = -0.75
      Y: cur_value = -4.5
      ------------
      result = -5.0
      func: cur_value = div_func
      X: cur_value = -3.75
      Y: cur_value = 0.75
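The argument-unpacking step can be sketched on its own, without the EvolveAxis class. This is only a minimal illustration: `sample_stepped` and `call_random_function` are hypothetical helper names, not part of the Evolver code, and the sampling scheme (uniform over min..max in fixed steps) is an assumption about how a FLOAT axis picks values.

```python
import random
from typing import Callable, List, Sequence, Tuple


def plus_func(v1: float, v2: float) -> float:
    return v1 + v2


def minus_func(v1: float, v2: float) -> float:
    return v1 - v2


def sample_stepped(min_val: float, max_val: float, step: float,
                   rng: random.Random) -> float:
    """Hypothetical helper: pick a value from min_val..max_val in increments of step."""
    num_steps = int(round((max_val - min_val) / step))
    return min_val + rng.randint(0, num_steps) * step


def call_random_function(funcs: Sequence[Callable[..., float]], num_args: int,
                         rng: random.Random) -> Tuple[Callable[..., float], List[float], float]:
    """Pick one function, sample its arguments, and call it with * unpacking."""
    func = rng.choice(funcs)
    args = [sample_stepped(-5, 5, 0.25, rng) for _ in range(num_args)]
    # The list of sampled values is expanded into positional arguments here
    return func, args, func(*args)


if __name__ == "__main__":
    rng = random.Random()
    func, args, result = call_random_function([plus_func, minus_func], 2, rng)
    print("result = {}\nfunc = {}\nargs = {}".format(result, func.__name__, args))
```

Because the arguments travel as a list, the caller never needs to know how many parameters the chosen function takes, as long as the list length matches.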

- Now I need to get this to work with different functions with different arg lists. I think I can do this with an EvolveAxis containing a list of EvolveAxis with functions. Done, I think. Here’s what the calling code looks like:

      # create a set of functions that all take two arguments
      func_array = [plus_func, minus_func, mult_func, div_func]
      vf = EvolveAxis("func", ValueAxisType.FUNCTION, range_array=func_array)
      v1 = EvolveAxis("X", ValueAxisType.FLOAT, parent=vf, min=-5, max=5, step=0.25)
      v2 = EvolveAxis("Y", ValueAxisType.FLOAT, parent=vf, min=-5, max=5, step=0.25)

      # create a single function that takes no arguments
      vp = EvolveAxis("random", ValueAxisType.FUNCTION, range_array=[random.random])

      # create a set of Axis from the previous function evolve args
      axis_list = [vf, vp]
      vv = EvolveAxis("meta", ValueAxisType.VALUEAXIS, range_array=axis_list)

      # run four times
      for i in range(4):
          result = vv.get_random_val()
          print("------------\nresult = {}\n{}".format(result, vv.to_string()))

- Here’s the output. The random function has all the decimal places:

      ------------
      result = 0.03223958125899473
      meta: cur_value = 0.8840652389671935
      ------------
      result = -0.75
      meta: cur_value = -0.75
      ------------
      result = -3.5
      meta: cur_value = -3.5
      ------------
      result = 0.7762888191296017
      meta: cur_value = 0.13200324934487906
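One way to picture the nesting is a rough sketch like the one below. It is not the actual EvolveAxis implementation: `FloatAxis`, `FunctionAxis`, and `MetaAxis` are hypothetical stand-ins, and the design (each function axis owns its argument axes, and a meta axis picks one function axis per call) is an assumption drawn from the calling code above.

```python
import random
from typing import Callable, Sequence


def plus_func(v1: float, v2: float) -> float:
    return v1 + v2


def mult_func(v1: float, v2: float) -> float:
    return v1 * v2


class FloatAxis:
    """Hypothetical FLOAT axis: samples min_val..max_val in fixed steps."""
    def __init__(self, name: str, min_val: float, max_val: float, step: float):
        self.name, self.min_val, self.max_val, self.step = name, min_val, max_val, step
        self.cur_value = min_val

    def get_random_val(self, rng: random.Random) -> float:
        num_steps = int(round((self.max_val - self.min_val) / self.step))
        self.cur_value = self.min_val + rng.randint(0, num_steps) * self.step
        return self.cur_value


class FunctionAxis:
    """Hypothetical FUNCTION axis: picks a function and feeds it its child axes."""
    def __init__(self, name: str, funcs: Sequence[Callable[..., float]],
                 children: Sequence[FloatAxis] = ()):
        self.name, self.funcs, self.children = name, list(funcs), list(children)
        self.cur_func = self.funcs[0]
        self.cur_value = None

    def get_random_val(self, rng: random.Random) -> float:
        self.cur_func = rng.choice(self.funcs)
        # One argument per child axis; an axis with no children calls func()
        args = [child.get_random_val(rng) for child in self.children]
        self.cur_value = self.cur_func(*args)
        return self.cur_value


class MetaAxis:
    """Hypothetical axis of axes: picks one function axis, so arg lists can differ."""
    def __init__(self, name: str, axes: Sequence[FunctionAxis]):
        self.name, self.axes = name, list(axes)
        self.cur_axis = self.axes[0]
        self.cur_value = None

    def get_random_val(self, rng: random.Random) -> float:
        self.cur_axis = rng.choice(self.axes)
        self.cur_value = self.cur_axis.get_random_val(rng)
        return self.cur_value


if __name__ == "__main__":
    rng = random.Random()
    x = FloatAxis("X", -5, 5, 0.25)
    y = FloatAxis("Y", -5, 5, 0.25)
    two_arg = FunctionAxis("func", [plus_func, mult_func], [x, y])
    no_arg = FunctionAxis("random", [random.random])
    meta = MetaAxis("meta", [two_arg, no_arg])
    for _ in range(4):
        print("result = {} (from axis '{}')".format(meta.get_random_val(rng), meta.cur_axis.name))
```

In this sketch the meta axis stores the value it returns, so `result` and `cur_value` always agree.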

- Verified that everything still works with the EvolutionaryOptimizer. Now I need to make sure that the new mutations include these new dimensions.


- I think I should also move TF2OptimizationTestBase to TimeSeriesML2?

- Starting Human Compatible
