7:00 – 4:00 ASRC

- Dissertation – starting the maps section

- Need to finish the financial OODA loop section

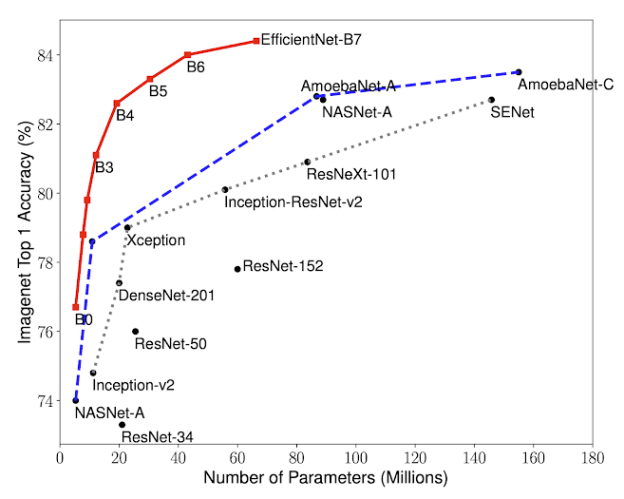

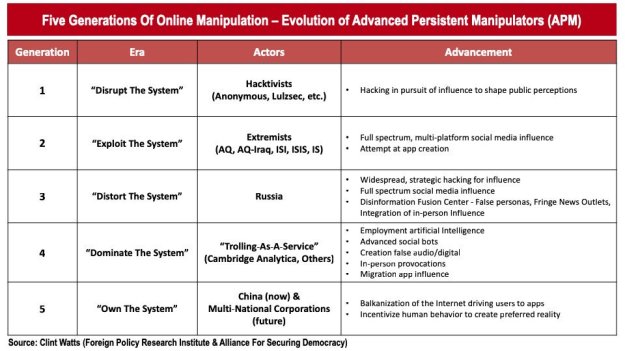

- Spending the day at a Navy-sponsored miniconference on AI, ethics, and the military (no wifi at Annapolis, so I'll put up notes later). This was an odd mix of higher-level execs in suits, retirees, and midshipmen, with a few technical folks sprinkled in. It is clear that for these people, the technology(?) is viewed as AI/ML. The idea that AI is something we can't do yet never comes up at this level. Rather, AI is seen as being implemented through machine learning, and in particular deep learning.

You must be logged in to post a comment.