Safer is a supertanker in an advanced state of decay that will break apart or explode if the world does not act. The result will be an environmental and humanitarian catastrophe centered on the coast of a country already devastated by seven years of war and affecting the entire region. The UN is ready to stage an emergency operation to address this threat, but work will only begin when we have the necessary funds.

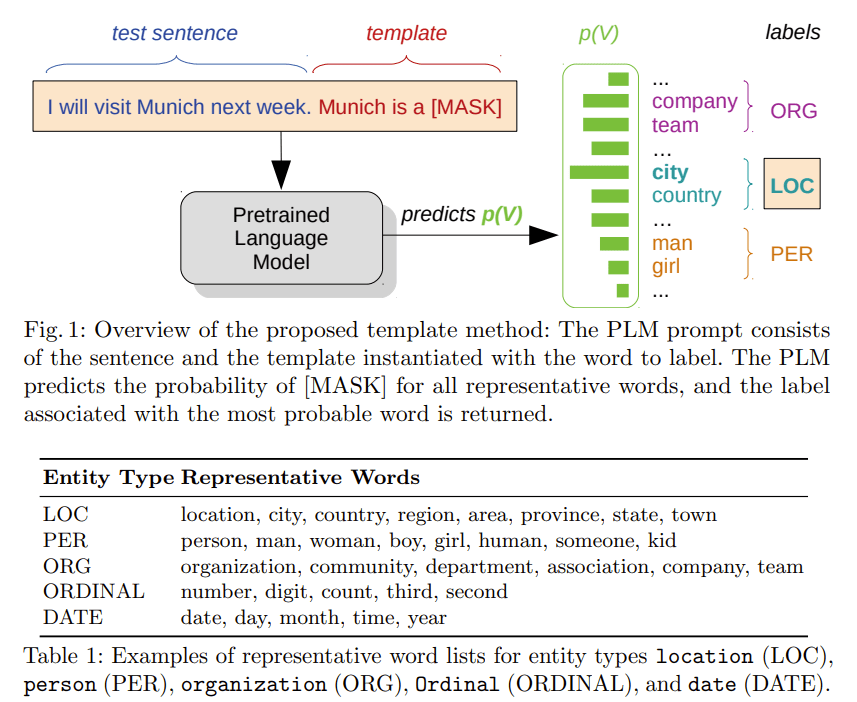

TOKEN is a MASK: Few-shot Named Entity Recognition with Pre-trained Language Models

- Transferring knowledge from one domain to another is of practical importance for many tasks in natural language processing, especially when the amount of available data in the target domain is limited. In this work, we propose a novel few-shot approach to domain adaptation in the context of Named Entity Recognition (NER). We propose a two-step approach consisting of a variable base module and a template module that leverages the knowledge captured in pre-trained language models with the help of simple descriptive patterns. Our approach is simple yet versatile and can be applied in few-shot and zero-shot settings. Evaluating our lightweight approach across a number of different datasets shows that it can boost the performance of state-of-the-art baselines by 2-5% F1-score.
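To make the "simple descriptive patterns" idea concrete, here is a minimal sketch of how a "TOKEN is a MASK" style cloze prompt might be built and how mask-position scores could be mapped back to entity labels. This is an illustration under assumptions, not the paper's implementation: the function names, the template string, the label-word lists, and the threshold are all hypothetical, and the scores would in practice come from a pre-trained masked language model, which is not shown here.

```python
from typing import Dict, List, Tuple

MASK = "[MASK]"  # assumed mask symbol; the real one depends on the tokenizer


def build_cloze_prompts(
    sentence: str,
    tokens: List[str],
    template: str = "{sentence} {token} is a {mask}.",  # hypothetical pattern
) -> List[Tuple[str, str]]:
    """Return one (token, prompt) pair per candidate token."""
    return [
        (tok, template.format(sentence=sentence, token=tok, mask=MASK))
        for tok in tokens
    ]


def pick_label(
    mask_scores: Dict[str, float],
    label_words: Dict[str, List[str]],
    threshold: float = 0.3,  # hypothetical cutoff below which we predict "O"
) -> str:
    """Map word scores at the mask position to an entity label.

    Each label is scored by its best-scoring label word; if no label
    reaches the threshold, the token is treated as a non-entity ("O").
    """
    best_label, best_score = "O", threshold
    for label, words in label_words.items():
        score = max((mask_scores.get(w, 0.0) for w in words), default=0.0)
        if score >= best_score:
            best_label, best_score = label, score
    return best_label


if __name__ == "__main__":
    prompts = build_cloze_prompts("Anna visited Berlin.", ["Anna", "Berlin"])
    for token, prompt in prompts:
        print(token, "->", prompt)

    # Made-up scores standing in for a language model's mask predictions.
    label_words = {"LOC": ["city", "country"], "PER": ["person"]}
    print(pick_label({"city": 0.6, "person": 0.1}, label_words))
```

Changing the domain in this sketch only means swapping the `label_words` dictionary, which is roughly the appeal of a template-based approach in few-shot settings.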

Book

- Finish proposal? Yes!

- Gita Manaktala (Information Science and Communication editor) oversees the MIT Press’s book acquisitions and works closely with our other editors. She acquires her own list of books in the areas of information science, communication, and internet studies. Her interests include networked communication, news and information, privacy, data security, and access to knowledge.

- Katie Helke | Editor: I acquire trade books, professional books, crossover books, and (very occasionally) textbooks.

GPT-Agents

- Continue working on balanced pull. I think I finally got the math right.

SBIRs

- Demo slides