Language modeling via stochastic processes

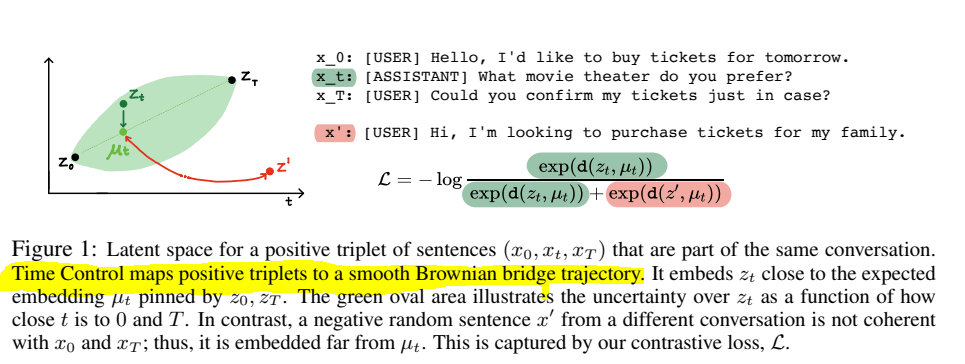

- Modern language models can generate high-quality short texts. However, they often meander or are incoherent when generating longer texts. These issues arise from the next-token-only language modeling objective. To address these issues, we introduce Time Control (TC), a language model that implicitly plans via a latent stochastic process. TC does this by learning a representation which maps the dynamics of how text changes in a document to the dynamics of a stochastic process of interest. Using this representation, the language model can generate text by first implicitly generating a document plan via a stochastic process, and then generating text that is consistent with this latent plan. Compared to domain-specific methods and fine-tuning GPT2 across a variety of text domains, TC improves performance on text infilling and discourse coherence. On long text generation settings, TC preserves the text structure both in terms of ordering (up to +40% better) and text length consistency (up to +17% better). Human evaluators also prefer TC’s output 28.6% more than the baselines.

Book

- Finished(?) definitions

- Moved “Interview with a Biased Machine” to the beginning of the Practice section. Going to work on that next.

SBIRs

- Get the lit review slides together for after the standup – done!

- 9:15 standup

- More code generation

- Finish breaking bdmon into a class. As I do this, I think it might make sense to have two directories: the directory that contains the editable child classes, and a directory under that one that contains the generated files that are re-created each time the tool runs. Done! This would allow the BoardMonitor class to have a decision_process() method that gets overridden easily in a child class. Next.

- Dynamically calculate the import lib.

- Wire up the run and step buttons

- Terminate() should write things out? Done

- Meeting with Ron about Crossentropy
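A rough sketch of the BoardMonitor override idea from the code generation notes above. Only the `BoardMonitor` name and `decision_process()` come from the notes; the `generated/` directory name, `MyBoardMonitor`, and the `step()`, `run()`, and `terminate()` bodies are placeholders I'm assuming for illustration, not what the tool actually emits.

```python
from typing import List

# Assumed layout (hypothetical names):
#   monitors/                 <- editable child classes live here
#   monitors/generated/       <- files re-created on every tool run


class BoardMonitor:
    """Stand-in for the generated base class (would live under generated/)."""

    def __init__(self, name: str = "bdmon") -> None:
        self.name = name
        self.step_count = 0
        self.log: List[str] = []

    def decision_process(self) -> str:
        # Default behavior; child classes are expected to override this.
        return "noop"

    def step(self) -> str:
        # One tick: count it, ask for a decision, and record the result.
        self.step_count += 1
        decision = self.decision_process()
        self.log.append(f"{self.step_count}: {decision}")
        return decision

    def run(self, num_steps: int) -> List[str]:
        # Repeated stepping, roughly what a "run" button might trigger.
        return [self.step() for _ in range(num_steps)]

    def terminate(self) -> List[str]:
        # Placeholder for "write things out": returns a copy of the log.
        return list(self.log)


class MyBoardMonitor(BoardMonitor):
    """Hand-edited child class; survives regeneration of the base class."""

    def decision_process(self) -> str:
        # Hypothetical rule: flag every even-numbered step.
        return "alert" if self.step_count % 2 == 0 else "ok"


if __name__ == "__main__":
    monitor = MyBoardMonitor()
    print(monitor.run(3))
    print(monitor.terminate())
```

Keeping the override in a separate file means the generator can overwrite everything under the generated directory without touching hand-written decision logic.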

GPT Agents

- Figured out how to find and start the Kuali IRB process and got some things down. Will need to walk through some things at the 3:30.