Looking forward to 2.22.22. Almost as exciting as 11.11.11

This is VERY cool! It’s an entire book written in Jupyter Notebooks that you can read on GitHub: fastai/fastbook (The fastai book, published as Jupyter Notebooks)

GPT Agents

- Got the counts query working with only a small amount of googling. The cool thing is that the items come back with a granularity, so this call (which has a default granularity of “day”):

query = "from:twitterdev"

start_time = "2021-05-01T00:00:00Z"

end_time = "2021-06-01T00:00:00Z"

url = create_counts_url(query, start_time, end_time)

json_response = connect_to_endpoint(url)

print_response("Get counts", json_response)

- returns a JSON object that has the daily volume of tweets from @twitterdev (there were 24 time periods in total, and the total tweet count was 22):

response:

{

"data": [

{

"end": "2021-05-02T00:00:00.000Z",

"start": "2021-05-01T00:00:00.000Z",

"tweet_count": 0

},

{

"end": "2021-05-13T00:00:00.000Z",

"start": "2021-05-12T00:00:00.000Z",

"tweet_count": 6

},

{

"end": "2021-05-14T00:00:00.000Z",

"start": "2021-05-13T00:00:00.000Z",

"tweet_count": 1

},

{

"end": "2021-05-15T00:00:00.000Z",

"start": "2021-05-14T00:00:00.000Z",

{

"end": "2021-05-21T00:00:00.000Z",

"start": "2021-05-20T00:00:00.000Z",

"tweet_count": 8

},

{

"end": "2021-05-29T00:00:00.000Z",

"start": "2021-05-28T00:00:00.000Z",

"tweet_count": 2

},

{

"end": "2021-06-01T00:00:00.000Z",

"start": "2021-05-31T00:00:00.000Z",

"tweet_count": 0

}

],

"meta": {

"total_tweet_count": 22

}

}
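For reference, here is a rough sketch of what helpers like the ones in that call might look like, plus a small tally over the response. It is an assumption-heavy illustration, not the code I actually ran: the base URL, the `archive` switch between the recent and full-archive counts endpoints, the `BEARER_TOKEN` environment variable, and `summarize_counts` are all names made up here.

```python
import json
import os
import urllib.parse
import urllib.request

# Assumed base URL for the Twitter API v2 tweet-counts endpoints.
COUNTS_BASE = "https://api.twitter.com/2/tweets/counts"


def create_counts_url(query, start_time, end_time, granularity="day", archive="recent"):
    """Build a counts URL. `archive` ("recent" or "all") is an assumption of this sketch."""
    params = {
        "query": query,
        "start_time": start_time,
        "end_time": end_time,
        "granularity": granularity,  # bucket size for the returned counts
    }
    return f"{COUNTS_BASE}/{archive}?{urllib.parse.urlencode(params)}"


def connect_to_endpoint(url, bearer_token=None):
    """GET the URL with a bearer token and return the decoded JSON."""
    # BEARER_TOKEN is a hypothetical environment variable name.
    token = bearer_token or os.environ["BEARER_TOKEN"]
    request = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def summarize_counts(json_response):
    """Tally the per-period buckets in a counts response."""
    buckets = json_response.get("data", [])
    active = [b for b in buckets if b["tweet_count"] > 0]
    busiest = max(buckets, key=lambda b: b["tweet_count"])["start"] if buckets else None
    return {
        "periods": len(buckets),
        "active_periods": len(active),
        "total": sum(b["tweet_count"] for b in buckets),
        "busiest_start": busiest,
    }
```

Calling `summarize_counts(json_response)` on a response shaped like the one above gives the number of buckets, how many had any tweets, the summed count, and the start time of the busiest bucket.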

- This is very nice! I’m looking forward to doing some interesting things with the GPT. We can scan through responses to prompts, look at word-by-word Twitter frequencies after removing stop words, and then use those sentences for further prompting. We can also compare embeddings, cluster them, and do other interesting things.
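A minimal sketch of the word-by-word idea, assuming the Twitter lookup is wrapped in a function that takes a word and returns a count. The stop-word list is a tiny placeholder, `lookup_count` is a hypothetical callable (it could wrap the counts query), and summing the counts per sentence is just one possible scoring choice.

```python
import re

# Tiny illustrative stop-word list; a real run would use a fuller one.
STOP_WORDS = {"a", "an", "and", "the", "of", "to", "in", "is", "it",
              "for", "on", "that", "this", "with"}


def tokenize(text):
    """Lowercase the text and keep words that are not stop words."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOP_WORDS]


def score_sentences(response, lookup_count):
    """Score each sentence by the summed count of its distinct non-stop words.

    `lookup_count` is a hypothetical callable: word -> Twitter count.
    Returns (score, sentence) pairs, highest score first.
    """
    scored = []
    for sentence in re.split(r"(?<=[.!?])\s+", response.strip()):
        words = set(tokenize(sentence))
        scored.append((sum(lookup_count(w) for w in words), sentence))
    return sorted(scored, reverse=True)
```

The top-scoring sentences would then be the candidates to feed back in as prompts.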

SBIRs

- Working through Natural Language Processing with Transformers

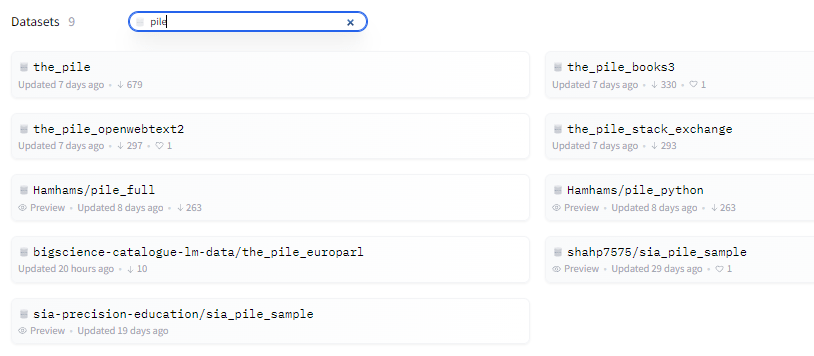

- Very nice. You can easily get training data here

- Datasets simplifies this process by providing a standard interface for thousands of datasets that can be found on the Hub. It also provides smart caching (so you don’t have to redo your preprocessing each time you run your code) and avoids RAM limitations by leveraging a special mechanism called memory mapping that stores the contents of a file in virtual memory and enables multiple processes to modify a file more efficiently.

- Imbalanced-learn (imported as imblearn) is an open source, MIT-licensed library that relies on scikit-learn (imported as sklearn) and provides tools for dealing with classification with imbalanced classes.

- Nice NW job fair
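On the memory-mapping point in the Datasets note above: this is a small standard-library illustration of the mechanism, not how Datasets does it internally (it builds on Apache Arrow). The file name and the fixed-width record layout are made up for the example.

```python
import mmap
import os
import tempfile

# Write a throwaway file of fixed-width records so the example is self-contained.
path = os.path.join(tempfile.mkdtemp(), "rows.txt")
with open(path, "wb") as f:
    for i in range(1000):
        f.write(f"row {i:04d}\n".encode("ascii"))

RECORD = len(b"row 0000\n")  # every record has the same byte length

with open(path, "rb") as f:
    # Length 0 maps the whole file; the OS pages data in only when it is touched.
    with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        # Slice straight to record 500 without reading the records before it.
        row_500 = mm[500 * RECORD:501 * RECORD].decode("ascii").strip()
        size = len(mm)

print(row_500, size)
```

The point is that the slice behaves like in-memory bytes even though the file was never read into a Python object in full, which is why a dataset larger than RAM can still be indexed this way.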

Ack! Dreamhost has deleted my SVN repo. Very bad. Working on getting it back. Other options include RiouxSVN, but it may be moribund. Assembla hosts for $19/month with 500 GB, which is good because I store models. Alternatively, I could set up my own SVN server with a fixed IP address and keep the repository on Google Drive, OneDrive, or Dropbox.