Pushshift will soon provide API endpoints for audio-to-text transcription (at a combined aggregate rate of 10 minutes of audio per second). The upcoming API will also have convenience methods where you can provide a link to a YouTube video and..

Welcome to OpenNRE’s documentation!

SBIR(s)

- 9:15 IRAD Standup

- Need to get started on the slides for the Huntsville talk

- 10:30 LAIC followup

- 5:30 – 6:00 Phase II meeting

GPT Agents

- Validating the 100, 50, 25, 12, and 6 models

- 100 and 50 look fine

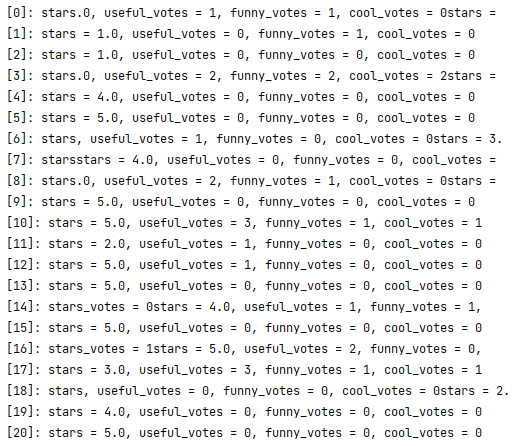

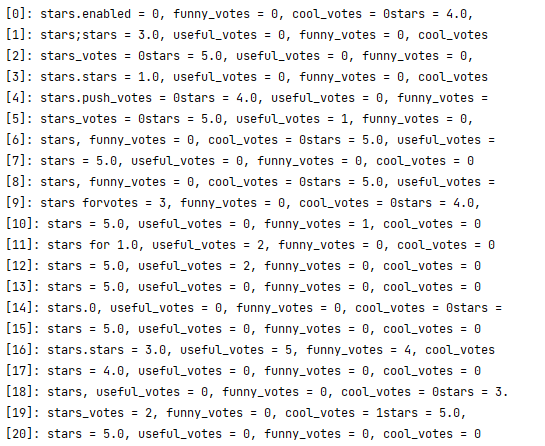

- 25 starts to have problems, so the parser is going to have to be a bit better:

- 12 looks about as bad:

- 6 may look a little worse? I’ll write the parser to handle this version:

- This makes me think that 150k – 250k lines may be a sweet spot for best accuracy with the least data. It may actually be possible to figure out a regression that will tell us this.

- Starting on the parser. I’ll need to take the first of each. If there is no value, use zero.

line_regex = re.compile(r"\w+[,.=(): ]+\d+")

key_val_regex = re.compile(r"\w+")
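A minimal sketch of how the parser might use these two patterns, assuming that "the first of each" means the first match for each expected key in a line, and that a key with no number after it gets zero. The function name `parse_line`, the key names, and the sample line are hypothetical and only there for illustration. The separator class is written here as `[,.=(): ]`, since a `|` inside square brackets is matched literally rather than acting as "or".

```python
import re
from typing import Dict, Iterable

# A word, one or more separator characters, then a number.
line_regex = re.compile(r"\w+[,.=(): ]+\d+")
# Splits a matched chunk into its word and its number.
key_val_regex = re.compile(r"\w+")


def parse_line(line: str, keys: Iterable[str]) -> Dict[str, int]:
    """Return the first value found for each key, or 0 if none is found."""
    result = {k: 0 for k in keys}   # default: no value means zero
    seen = set()
    for chunk in line_regex.findall(line):
        tokens = key_val_regex.findall(chunk)
        key, value = tokens[0], tokens[-1]
        # Keep only the first occurrence of each expected key.
        if key in result and key not in seen:
            result[key] = int(value)
            seen.add(key)
    return result


if __name__ == "__main__":
    # Hypothetical noisy line of the kind a smaller model might emit.
    sample = "likes=12, replies: 3 likes=99 shares"
    print(parse_line(sample, ["likes", "replies", "shares"]))
```

Repeated keys later in the line are ignored, and keys that never appear with a number stay at zero, which should make the parser tolerant of the messier output from the 25, 12, and 6 models.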

- Starting the run that creates synthetic entries for all the models – done

4:00 – 6:00 Arpita’s defense! Looks like she did a great job. It makes me wonder whether larger databases, with features that indicate where the data came from across multiple platforms, could be better than separate smaller models for each platform. In the satellite anomaly detection systems I build, we typically train one model per vehicle. This work implies that it might be better to have one model for all vehicles, with domain knowledge about each vehicle included in the feature vector.