Here are some silly coding conventions that I had to dig to find the answers for.

- First, when making a call to https://wikimedia.org/api/rest_v1 using requests, you need to set the call like this:

      from datetime import datetime, timedelta

      import requests

      # Wikimedia asks for an identifying User-Agent; a contact address is enough
      headers = {"User-Agent": "someone@someplace.com"}
      page_title = "Exergaming"

      # Query the week ending yesterday; the API takes dates as YYYYMMDD
      yesterday = datetime.today() - timedelta(days=1)
      last_week = yesterday - timedelta(days=7)
      yester_s = yesterday.strftime("%Y%m%d")
      lastw_s = last_week.strftime("%Y%m%d")

      s = "https://wikimedia.org/api/rest_v1/metrics/pageviews/per-article/en.wikipedia/all-access/user/{}/daily/{}/{}".format(page_title, lastw_s, yester_s)
      print(s)
      r = requests.get(s, headers=headers)

- Without that ‘headers’ element, you get a 404. Note that you do not need to spoof a browser header. This is all you need.
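Once the request succeeds, the JSON body can be boiled down to per-day counts. This is a minimal sketch, assuming the response carries an `items` list whose entries have `timestamp` (YYYYMMDDHH) and `views` fields. `build_pageviews_url` and `daily_views` are hypothetical helper names, and the sample payload is made up so the example runs without a network call:

```python
from urllib.parse import quote

BASE = "https://wikimedia.org/api/rest_v1/metrics/pageviews/per-article"


def build_pageviews_url(page_title: str, start: str, end: str,
                        project: str = "en.wikipedia") -> str:
    """Build the per-article URL; start and end are YYYYMMDD strings."""
    # Titles with spaces or slashes must be percent-encoded to survive in the path
    title = quote(page_title.replace(" ", "_"), safe="")
    return "{}/{}/all-access/user/{}/daily/{}/{}".format(BASE, project, title, start, end)


def daily_views(payload: dict) -> dict:
    """Map the YYYYMMDD prefix of each timestamp to its view count."""
    return {item["timestamp"][:8]: item["views"] for item in payload.get("items", [])}


# Made-up payload in the assumed shape
sample = {"items": [{"timestamp": "2021042000", "views": 12},
                    {"timestamp": "2021042100", "views": 15}]}
print(build_pageviews_url("Exergaming", "20210420", "20210421"))
print(daily_views(sample))
```

With a live call, the same parser would be applied as `daily_views(r.json())` after checking `r.status_code`.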

The second thing has to do with getting strings safely into databases:

- When storing values with pymysql that include strings that need to be escaped, you can now use parameter binding, which is very cool. BUT! Just because the placeholder is ‘%s’ doesn’t mean that you use %d for ints and %f for floats. Every placeholder is %s, whatever the type. Here’s an example that uses strings, floats, and ints:

      sql = "insert into gpt_maps.table_experiment (date, description, engine, max_tokens, temperature, top_p, logprobs, num_responses, presence_penalty, frequency_penalty)" \
            " values(%s, %s, %s, %s, %s, %s, %s, %s, %s, %s)"
      values = (date_str, description, self.engine, self.max_tokens, self.temperature, self.top_p, self.logprobs, self.num_responses, self.presence_penalty, self.frequency_penalty)
      msi.write_sql_values_get_row(sql, values)

And here’s the call that does the actual writing to the db:

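    from typing import Tuple

    import pymysql

    # Method on the class behind msi (class definition omitted)
    def write_sql_values_get_row(self, sql: str, values: Tuple) -> int:
        """Run a parameterized statement and return the new row id, or -1 on error."""
        try:
            with self.connection.cursor() as cursor:
                # pymysql escapes each value and substitutes it for its %s
                cursor.execute(sql, values)
                row_id = cursor.lastrowid
                print("row id = {}".format(row_id))
                return row_id
        except pymysql.err.InternalError as e:
            print("{}:\n\t{}".format(e, sql))
            return -1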
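Because the placeholder is `%s` for every column type, the placeholder list can be generated from the values rather than typed by hand. A minimal sketch: `build_insert` is a hypothetical helper, not part of pymysql, and the table and column names must come from trusted code, since only values (not identifiers) can be bound:

```python
from typing import Any, Dict, Tuple


def build_insert(table: str, row: Dict[str, Any]) -> Tuple[str, Tuple[Any, ...]]:
    """Return (sql, values) for a parameterized INSERT in pymysql's %s style."""
    columns = ", ".join(row.keys())
    # One %s per value, whether it is a str, int, or float
    placeholders = ", ".join(["%s"] * len(row))
    sql = "insert into {} ({}) values ({})".format(table, columns, placeholders)
    return sql, tuple(row.values())


sql, values = build_insert(
    "gpt_maps.table_experiment",
    {"description": "test run", "max_tokens": 64, "temperature": 0.7},
)
print(sql)
print(values)
```

The resulting pair can be passed straight to `cursor.execute(sql, values)`.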

The Power of Scale for Parameter-Efficient Prompt Tuning

- In this work, we explore “prompt tuning”, a simple yet effective mechanism for learning “soft prompts” to condition frozen language models to perform specific downstream tasks. Unlike the discrete text prompts used by GPT-3, soft prompts are learned through backpropagation and can be tuned to incorporate signal from any number of labeled examples. Our end-to-end learned approach outperforms GPT-3’s “few-shot” learning by a large margin. More remarkably, through ablations on model size using T5, we show that prompt tuning becomes more competitive with scale: as models exceed billions of parameters, our method “closes the gap” and matches the strong performance of model tuning (where all model weights are tuned). This finding is especially relevant in that large models are costly to share and serve, and the ability to reuse one frozen model for multiple downstream tasks can ease this burden. Our method can be seen as a simplification of the recently proposed “prefix tuning” of Li and Liang (2021), and we provide a comparison to this and other similar approaches. Finally, we show that conditioning a frozen model with soft prompts confers benefits in robustness to domain transfer, as compared to full model tuning.

GPT-Agents

- Start building out GraphToDB.

- Use Wikipedia to verify that a node name exists before adding it

- Check that a (directed) edge exists before adding it. If it does, increment the weight.

- Digging into what metaphors are:

- Understanding Figurative Language: From Metaphor to Idioms

- This book examines how people understand utterances that are intended figuratively. Traditionally, figurative language such as metaphors and idioms has been considered derivative from and more complex than ostensibly straightforward literal language. Glucksberg argues that figurative language involves the same kinds of linguistic and pragmatic operations that are used for ordinary, literal language. Glucksberg’s research in this book is concerned with ordinary language: expressions that are used in daily life, including conversations about everyday matters, newspaper and magazine articles, and the media. Metaphor is the major focus of the book. Idioms, however, are also treated comprehensively, as is the theory of conceptual metaphor in the context of how people understand both conventional and novel figurative expressions. A new theory of metaphor comprehension is put forward, and evaluated with respect to competing theories in linguistics and in psychology. The central tenet of the theory is that ordinary conversational metaphors are used to create new concepts and categories. This process is spontaneous and automatic. Metaphor is special only in the sense that these categories get their names from the best examples of the things they represent, and that these categories get their names from the best examples of those categories. Thus, the literal “shark” can be a metaphor for any vicious and predatory being, from unscrupulous salespeople to a murderous character in The Threepenny Opera. Because the same term, e.g., “shark,” is used both for its literal referent and for the metaphorical category, as in “My lawyer is a shark,” we call it the dual-reference theory. The theory is then extended to two other domains: idioms and conceptual metaphors. The book presents the first comprehensive account of how people use and understand metaphors in everyday life.

- The contemporary theory of metaphor — now new and improved!

- This paper outlines a multi-dimensional/multi-disciplinary framework for the study of metaphor. It expands on the cognitive linguistic approach to metaphor in language and thought by adding the dimension of communication, and it expands on the predominantly linguistic and psychological approaches by adding the discipline of social science. This creates a map of the field in which nine main areas of research can be distinguished and connected to each other in precise ways. It allows for renewed attention to the deliberate use of metaphor in communication, in contrast with non-deliberate use, and asks the question whether the interaction between deliberate and non-deliberate use of metaphor in specific social domains can contribute to an explanation of the discourse career of metaphor. The suggestion is made that metaphorical models in language, thought, and communication can be classified as official, contested, implicit, and emerging, which may offer new perspectives on the interaction between social, psychological, and linguistic properties and functions of metaphor in discourse.

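The GraphToDB rules listed above (verify the node name, then add or reinforce a directed edge) can be tried out in memory before committing to a schema. This is only a sketch under assumptions: the class name `GraphSketch`, the dict-based storage, and the `node_exists` callback are all hypothetical, and the callback stands in for whatever Wikipedia lookup ends up being used:

```python
from typing import Callable, Dict, Tuple


class GraphSketch:
    """In-memory stand-in for the planned GraphToDB logic (hypothetical design)."""

    def __init__(self, node_exists: Callable[[str], bool]):
        # node_exists is an assumed hook, e.g. a Wikipedia title lookup
        self.node_exists = node_exists
        self.edges: Dict[Tuple[str, str], int] = {}

    def add_edge(self, source: str, target: str) -> bool:
        """Add a directed edge, or increment its weight if it already exists."""
        if not (self.node_exists(source) and self.node_exists(target)):
            return False  # skip edges whose endpoints fail verification
        key = (source, target)  # direction matters: (a, b) != (b, a)
        self.edges[key] = self.edges.get(key, 0) + 1
        return True


# Fake lookup so the example runs offline
known = {"Exergaming", "Video game", "Exercise"}
g = GraphSketch(lambda name: name in known)
g.add_edge("Exergaming", "Video game")
g.add_edge("Exergaming", "Video game")   # repeat: weight becomes 2
g.add_edge("Video game", "Exergaming")   # reverse direction is a separate edge
g.add_edge("Exergaming", "Not a page")   # rejected: unknown node
print(g.edges)
```

In a database-backed version, the dict lookup would become a select on the (source, target) pair, followed by either an insert or a weight update.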

SBIR

- 10:00 Meeting

- See how the new models are doing. If we are still not making progress, then go to a simpler interpolation model

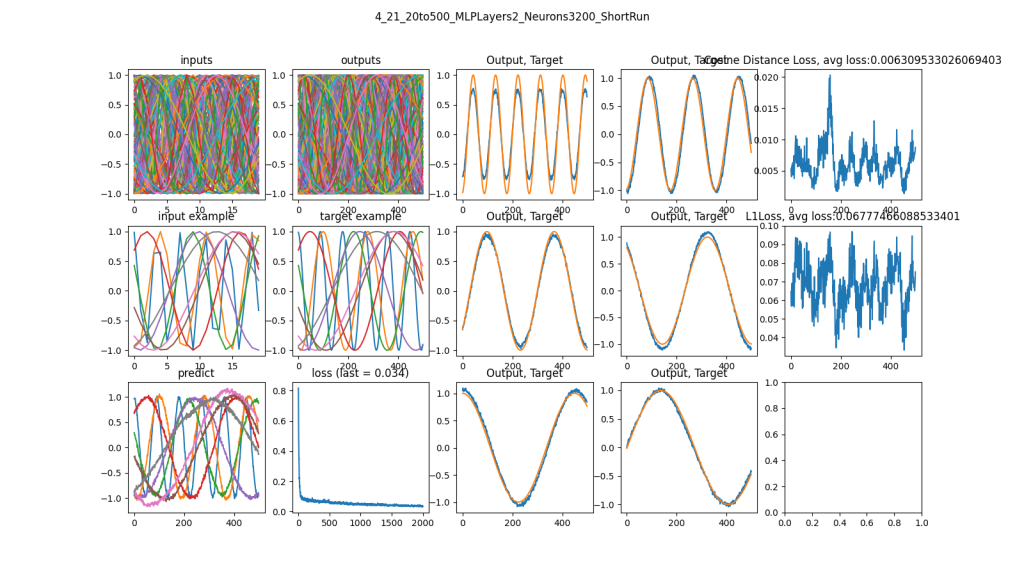

- It turns out that the frequency problem was actually a visualization bug! Here’s an example going from 20 input vectors to 500 output vectors using attention and two 3,000-perceptron layers: