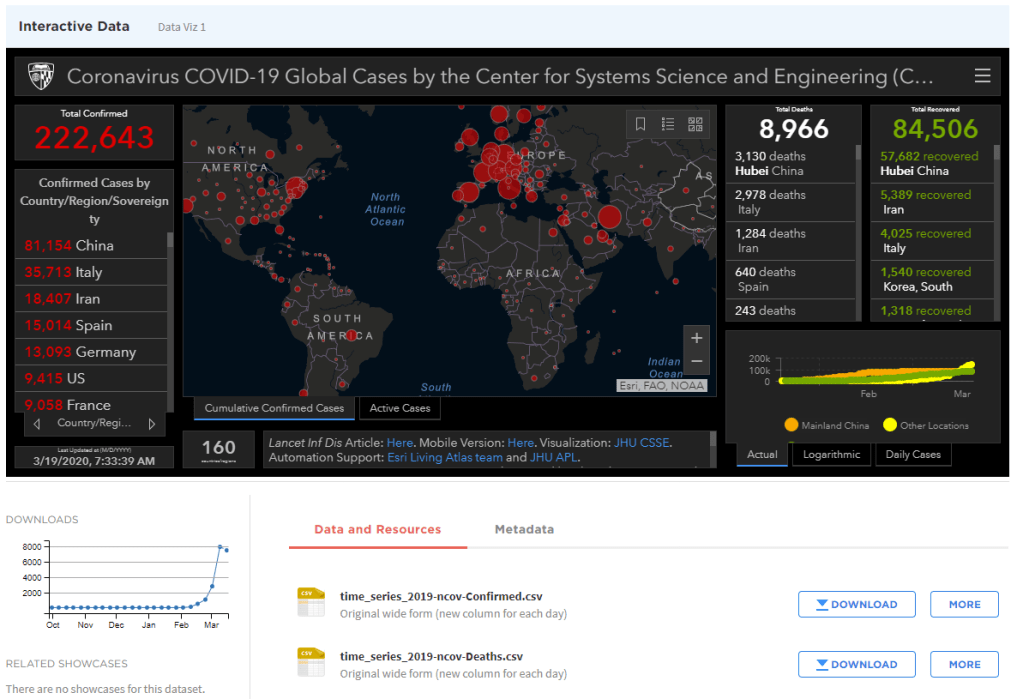

I found the data sources behind the dashboard from the previous few posts. And yes, everything still looks grim:

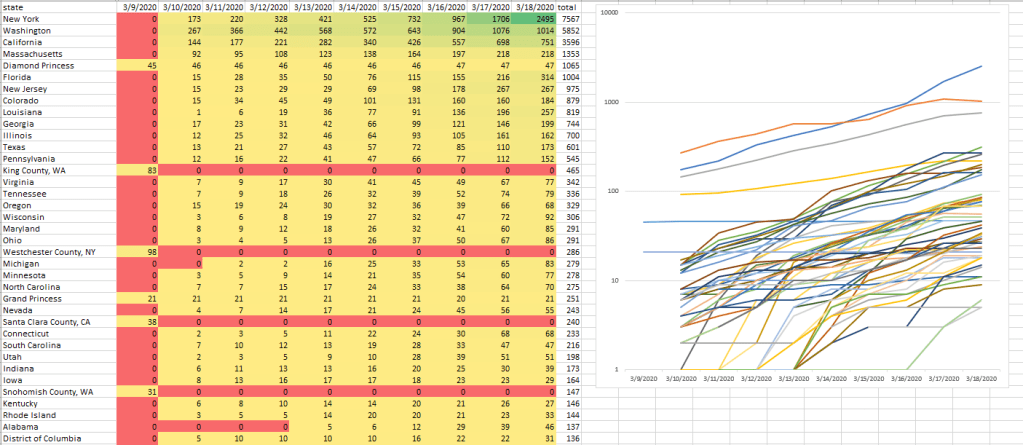

So rather than working on my dissertation, I thought I’d take a look at the data for the last 9(!) days in Excel:

This is for the USA. The data is sorted by the cumulative total of confirmed cases. If you look at the chart on the right, everything is in line with a pandemic in exponential growth. However, that’s not the whole story.

I like to color-code the cells in my spreadsheets because colors help me see patterns in the data that I would otherwise miss. One thing that really stands out here is the red rows with one yellow cell on the left. These are all cases where the number of newly confirmed cases dropped to zero overnight. And they’re not near each other: they are in WA, NY, and CA. Is this a measurement problem, or is something going right in these places?

Maybe we’ll find out more in the next few days. Now that I know how to get the data, I can do some of my own visualizations that look for outliers. I can also train up some sequence-to-sequence ML models to extrapolate trends.
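The overnight drop to zero described above is easy to check for programmatically. Here is a minimal sketch, assuming the data arrives as one cumulative case count per day for each region. The region names and numbers are made up for illustration, and `drops_to_zero` and `flag_regions` are hypothetical helper names, not part of any dashboard API.

```python
from typing import Dict, List


def new_cases(cumulative: List[int]) -> List[int]:
    """Day-over-day differences of a cumulative case series."""
    return [b - a for a, b in zip(cumulative, cumulative[1:])]


def drops_to_zero(cumulative: List[int]) -> bool:
    """True if the latest day has zero new cases right after a day with new cases."""
    daily = new_cases(cumulative)
    return len(daily) >= 2 and daily[-2] > 0 and daily[-1] == 0


def flag_regions(data: Dict[str, List[int]]) -> List[str]:
    """Names of regions whose new-case count just dropped to zero, sorted."""
    return sorted(name for name, series in data.items() if drops_to_zero(series))


# Made-up cumulative counts for nine days (illustration only, not real data).
sample = {
    "Region A": [1, 2, 4, 8, 16, 31, 60, 110, 200],    # still growing
    "Region B": [5, 9, 14, 20, 31, 45, 70, 70, 70],    # flat for two days already
    "Region C": [3, 6, 10, 18, 30, 52, 80, 121, 121],  # dropped to zero overnight
}

flagged = flag_regions(sample)
print(flagged)
```

This only flags a drop on the most recent day. Whether a flagged row is a reporting gap or a real change still has to be judged by hand.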

One more thing. I had heard earlier (Twitter, I think?) that Vietnam was handling the crisis well. And it looks like it was, but things are back to being bad:

OK, back to work.

8:00 – 4:30 ASRC PhD, GOES

- Working on the process section – done!

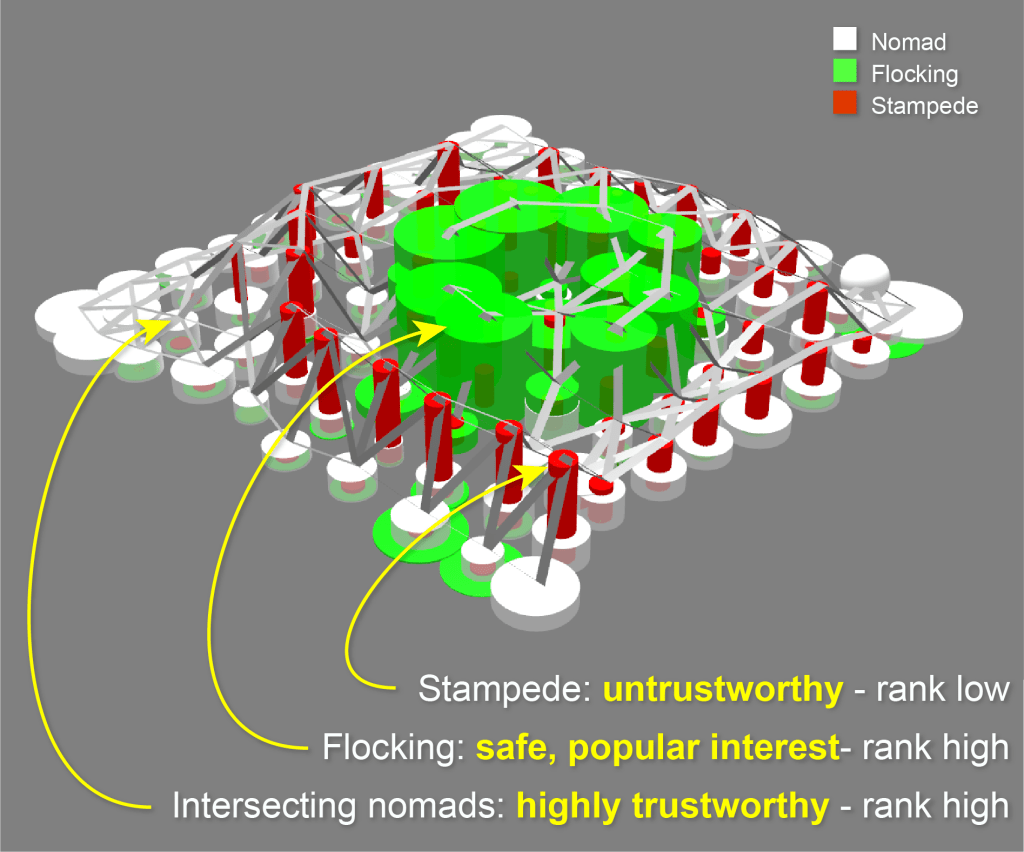

- Working on the TACJ bookend – done! Made a new figure:

- Submitted to Wayne. Here’s hoping it doesn’t fall through the cracks

- Neuroevolution of Self-Interpretable Agents

- Inattentional blindness is the psychological phenomenon that causes one to miss things in plain sight. It is a consequence of the selective attention in perception that lets us remain focused on important parts of our world without distraction from irrelevant details. Motivated by selective attention, we study the properties of artificial agents that perceive the world through the lens of a self-attention bottleneck. By constraining access to only a small fraction of the visual input, we show that their policies are directly interpretable in pixel space. We find neuroevolution ideal for training self-attention architectures for vision-based reinforcement learning tasks, allowing us to incorporate modules that can include discrete, non-differentiable operations which are useful for our agent. We argue that self-attention has similar properties as indirect encoding, in the sense that large implicit weight matrices are generated from a small number of key-query parameters, thus enabling our agent to solve challenging vision based tasks with at least 1000x fewer parameters than existing methods. Since our agent attends to only task-critical visual hints, they are able to generalize to environments where task irrelevant elements are modified while conventional methods fail.
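The abstract's claim that a large implicit weight matrix comes from a small number of key-query parameters can be illustrated with a rough sketch. This is not the authors' implementation. It assumes a plain scaled dot-product attention over flattened image patches, with random weights standing in for parameters that the paper finds by neuroevolution. All sizes, and the names `patch_importance`, `w_key`, and `w_query`, are chosen here for illustration.

```python
import math
import random


def matmul(a, b):
    """Plain-Python matrix product of two row-major matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row):
    """Numerically stable softmax of one row."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def patch_importance(patches, w_key, w_query):
    """Return the n x n attention matrix and a per-patch importance score."""
    keys = matmul(patches, w_key)        # n x d
    queries = matmul(patches, w_query)   # n x d
    d = len(w_key[0])
    scores = matmul(keys, list(zip(*queries)))  # keys times queries transposed, n x n
    attention = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    # Column sums: how much attention each patch receives from all patches.
    votes = [sum(col) for col in zip(*attention)]
    return attention, votes


rng = random.Random(0)
n_patches, patch_dim, d = 16, 12, 4  # illustrative sizes, not the paper's

patches = [[rng.gauss(0, 1) for _ in range(patch_dim)] for _ in range(n_patches)]
w_key = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(patch_dim)]
w_query = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(patch_dim)]

attention, votes = patch_importance(patches, w_key, w_query)

# Keep only the few patches that receive the most attention (the bottleneck).
top_k = sorted(range(n_patches), key=lambda i: votes[i], reverse=True)[:4]

n_params = 2 * patch_dim * d              # entries in w_key plus w_query
n_attention_entries = n_patches * n_patches
print(n_params, n_attention_entries, top_k)
```

The attention matrix grows with the square of the number of patches, while the parameter count depends only on the patch size and the projection width `d`. The top-k selection is a discrete, non-differentiable step of the kind the abstract says neuroevolution can accommodate.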