ASRC PhD 7:00 –

- The difference between “more” (low dimension stampede-ish), and “enough” (grounded and comparative) – from Rebuilding the Social Contract, Part 2

- Dissertation – finished Limitations!

- GPT-2

- Having installed all the transformers-related libraries, I’m testing the evolver to see if it still works. Woohoo! Onward

- Is this good? It seems to have choked on the Torch examples, which makes sense

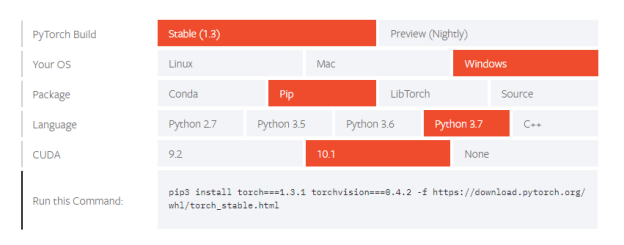

    D:\Development\Sandboxes\transformers>make test-examples
    python -m pytest -n auto --dist=loadfile -s -v ./examples/
    ================================================= test session starts =================================================
    platform win32 -- Python 3.7.4, pytest-5.3.2, py-1.8.0, pluggy-0.13.1 -- D:\Program Files\Python37\python.exe
    cachedir: .pytest_cache
    rootdir: D:\Development\Sandboxes\transformers
    plugins: forked-1.1.3, xdist-1.31.0
    [gw0] win32 Python 3.7.4 cwd: D:\Development\Sandboxes\transformers
    [gw1] win32 Python 3.7.4 cwd: D:\Development\Sandboxes\transformers
    [gw2] win32 Python 3.7.4 cwd: D:\Development\Sandboxes\transformers
    [gw3] win32 Python 3.7.4 cwd: D:\Development\Sandboxes\transformers
    [gw4] win32 Python 3.7.4 cwd: D:\Development\Sandboxes\transformers
    [gw5] win32 Python 3.7.4 cwd: D:\Development\Sandboxes\transformers
    [gw6] win32 Python 3.7.4 cwd: D:\Development\Sandboxes\transformers
    [gw7] win32 Python 3.7.4 cwd: D:\Development\Sandboxes\transformers
    [gw0] Python 3.7.4 (tags/v3.7.4:e09359112e, Jul 8 2019, 20:34:20) [MSC v.1916 64 bit (AMD64)]
    [gw1] Python 3.7.4 (tags/v3.7.4:e09359112e, Jul 8 2019, 20:34:20) [MSC v.1916 64 bit (AMD64)]
    [gw2] Python 3.7.4 (tags/v3.7.4:e09359112e, Jul 8 2019, 20:34:20) [MSC v.1916 64 bit (AMD64)]
    [gw3] Python 3.7.4 (tags/v3.7.4:e09359112e, Jul 8 2019, 20:34:20) [MSC v.1916 64 bit (AMD64)]
    [gw4] Python 3.7.4 (tags/v3.7.4:e09359112e, Jul 8 2019, 20:34:20) [MSC v.1916 64 bit (AMD64)]
    [gw5] Python 3.7.4 (tags/v3.7.4:e09359112e, Jul 8 2019, 20:34:20) [MSC v.1916 64 bit (AMD64)]
    [gw6] Python 3.7.4 (tags/v3.7.4:e09359112e, Jul 8 2019, 20:34:20) [MSC v.1916 64 bit (AMD64)]
    [gw7] Python 3.7.4 (tags/v3.7.4:e09359112e, Jul 8 2019, 20:34:20) [MSC v.1916 64 bit (AMD64)]
    gw0 [0] / gw1 [0] / gw2 [0] / gw3 [0] / gw4 [0] / gw5 [0] / gw6 [0] / gw7 [0]
    scheduling tests via LoadFileScheduling
    ======================================================= ERRORS ========================================================
    _____________________________________ ERROR collecting examples/test_examples.py ______________________________________
    ImportError while importing test module 'D:\Development\Sandboxes\transformers\examples\test_examples.py'.
    Hint: make sure your test modules/packages have valid Python names.
    Traceback:
    examples\test_examples.py:23: in <module>
        import run_generation
    examples\run_generation.py:25: in <module>
        import torch
    E   ModuleNotFoundError: No module named 'torch'
    _________________________ ERROR collecting examples/summarization/test_utils_summarization.py _________________________
    ImportError while importing test module 'D:\Development\Sandboxes\transformers\examples\summarization\test_utils_summarization.py'.
    Hint: make sure your test modules/packages have valid Python names.
    Traceback:
    examples\summarization\test_utils_summarization.py:18: in <module>
        import torch
    E   ModuleNotFoundError: No module named 'torch'
    ================================================== 2 errors in 1.57s ==================================================
    make: *** [test-examples] Error 1

- Hmm. run_generation.py seems to need Torch. This sets off a whole bunch of issues. First, installing Torch from here provides a cool little tool to determine what to install:

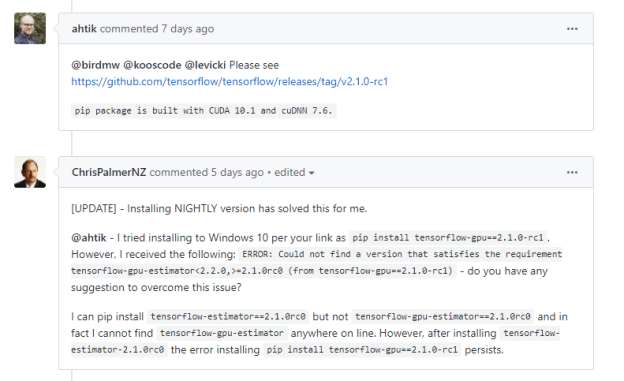

- Note that the available versions of CUDA are 9.2 and 10.1. This is a problem because, at the moment, TF only works with 10.0. Mostly because the user community hates upgrading drivers?

- That being said, it may be true that the release candidate TF is using CUDA 10.1:

- I think I’m going to wait until Aaron shows up to decide if I want to jump down this rabbit hole. In the meantime, I’m going to look at other TF implementations of the GPT-2. Also, the actual use of Torch seems pretty minor, so maybe it’s avoidable?

- It appears to be just this method

    def set_seed(args):
        np.random.seed(args.seed)
        torch.manual_seed(args.seed)
        if args.n_gpu > 0:
            torch.cuda.manual_seed_all(args.seed)

- And the code that calls it:

    args.device = torch.device("cuda" if torch.cuda.is_available() and not args.no_cuda else "cpu")
    args.n_gpu = torch.cuda.device_count()
    set_seed(args)
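- If the Torch dependency really is limited to seeding and device selection, one way around it might look like the sketch below. This is an assumption on my part, not what run_generation.py does: `pick_device` is a hypothetical helper, the device is stored as a plain string rather than a `torch.device`, and `set_seed` takes the seed and GPU count directly instead of the whole `args` object. Torch and NumPy are both treated as optional.

```python
import argparse
import random

# Both libraries are optional here; the sketch degrades to stdlib seeding.
try:
    import numpy as np
except ImportError:
    np = None

try:
    import torch
except ImportError:
    torch = None


def pick_device(no_cuda=False):
    """Hypothetical helper: return (device_name, n_gpu) without requiring torch."""
    if torch is not None and torch.cuda.is_available() and not no_cuda:
        return "cuda", torch.cuda.device_count()
    return "cpu", 0


def set_seed(seed, n_gpu=0):
    """Seed every random number generator that is actually installed."""
    random.seed(seed)
    if np is not None:
        np.random.seed(seed)
    if torch is not None:
        torch.manual_seed(seed)
        if n_gpu > 0:
            torch.cuda.manual_seed_all(seed)


# Mirrors the calling code above, with made-up argument values.
args = argparse.Namespace(seed=42, no_cuda=False)
args.device, args.n_gpu = pick_device(args.no_cuda)
set_seed(args.seed, args.n_gpu)
```

This only covers the seeding and device lines quoted above. Anything else in the examples that touches Torch tensors or models would still need the real library.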

- Aaron suggests using a previous version of torch that is compatible with CUDA 10.0. All the previous versions are here, and this is the line that should work (the huggingface transformers repo “is tested on Python 3.5+, PyTorch 1.0.0+ and TensorFlow 2.0.0-rc1”):

pip install torch==1.2.0 torchvision==0.4.0 -f https://download.pytorch.org/whl/torch_stable.html
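- After an install like this, it could be worth checking which CUDA version each framework was actually built against before rerunning the tests. A minimal sketch, assuming only that the packages may or may not be importable; `cuda_report` is a hypothetical helper name, while `torch.version.cuda`, `torch.cuda.is_available()` and `tf.test.is_built_with_cuda()` are the library calls it relies on:

```python
import importlib
import importlib.util


def cuda_report(packages=("torch", "tensorflow")):
    """Hypothetical helper: summarize version and CUDA info per package."""
    report = {}
    for name in packages:
        if importlib.util.find_spec(name) is None:
            report[name] = None  # package is not installed
            continue
        try:
            module = importlib.import_module(name)
        except Exception as exc:  # a broken install should not abort the report
            report[name] = {"error": repr(exc)}
            continue
        info = {"version": getattr(module, "__version__", "unknown")}
        if name == "torch":
            # CUDA version the wheel was built for (None on CPU-only builds)
            info["built_for_cuda"] = module.version.cuda
            info["gpu_visible"] = module.cuda.is_available()
        elif name == "tensorflow":
            info["built_for_cuda"] = module.test.is_built_with_cuda()
        report[name] = info
    return report


if __name__ == "__main__":
    for name, info in cuda_report().items():
        print(name, "->", info if info is not None else "not installed")
```

If the torch entry reports a CUDA version other than the one TF expects, the two frameworks will likely want different CUDA installs, which is the conflict the pinned install is meant to avoid.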