Crap – It’s October already!

7:00 – 4:00 ASRC GOES

- Unsupervised Thinking – a podcast about neuroscience, artificial intelligence and science more broadly

- Dissertation

- Cleanup of most of the sections up to and through the terms part of the Theory section.

- Fix problem with the fitness values? Also, save the best chromosome always. Fixed I think. Testing.

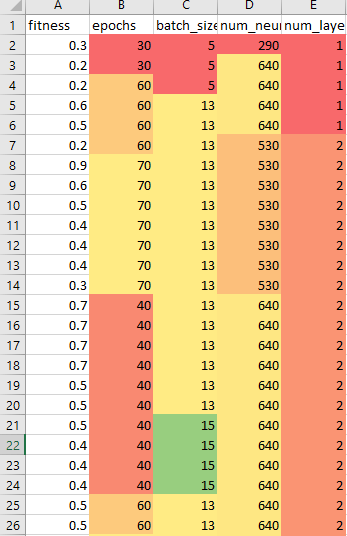

- So there’s a big problem, which I kind of knew about. The random initialization of weights makes a HUGE difference in the performance of the model. I discovered this while looking at the results of the evolver, which saves the best chromosome of each generation out to a spreadsheet:

- If you look at row 8, you see a lovely fitness of 0.9, or 90%, which was the best value from the evolver runs. However, after sorting on the parameters so that they were grouped, it became obvious that there is a HUGE variance in the results. The lowest fitness is 30%, and the average fitness for those parameter values is actually 50%. I tried running the same parameters on multiple trained models and got results that agree. These values are all over the place (the following images are 20%, 30%, 60%, and 80% accuracy, all using the same parameters):

- To address this, I need to be able to run a population of models with the same parameters and get the distribution stats (number of runs, mean, min, max, variance), then add those to the spreadsheet. Started by adding some randomness to the demo data generating function, which should do the trick. I’ll start on the rest tomorrow.

- Yikes! I’m going to try installing the release version of TF. It should be just `pip install tensorflow-gpu`. Done! Didn’t break anything 🙂
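
A rough sketch of the distribution-stats step mentioned above (number of runs, mean, min, max, variance), assuming each run can be wrapped in a function that takes a seed and returns a fitness value. The names `fitness_stats` and `fake_train_and_score` are made up for illustration and are not from the actual evolver code; the fake scorer just draws a random number where a real train-and-evaluate call would go.

```python
import random
import statistics
from typing import Callable, Dict


def fitness_stats(evaluate: Callable[[int], float], num_runs: int = 10) -> Dict[str, float]:
    """Run `evaluate` once per seed and summarize the resulting fitness values."""
    if num_runs < 2:
        raise ValueError("need at least two runs to compute a variance")
    # One score per seed; the hyperparameters stay fixed, only the seed changes.
    scores = [evaluate(seed) for seed in range(num_runs)]
    return {
        "runs": len(scores),
        "mean": statistics.mean(scores),
        "min": min(scores),
        "max": max(scores),
        "variance": statistics.variance(scores),  # sample variance (n - 1)
    }


def fake_train_and_score(seed: int) -> float:
    """Hypothetical stand-in for training one model with a seeded weight init."""
    rng = random.Random(seed)
    return rng.uniform(0.2, 0.9)


if __name__ == "__main__":
    # Each row of the spreadsheet could carry these five columns
    # instead of a single best-of-run fitness.
    print(fitness_stats(fake_train_and_score, num_runs=20))
```

With something like this, a chromosome would be ranked on its mean (or mean minus some penalty for variance) rather than on one lucky run.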