"I loaded journal entries from the past 10 years into GPT-3—and started asking it questions." This might be the way to do my next online resume and the book website. The approach is basically index and summarize, which sounds perfect.

It uses GPT Index, a project that provides a set of data structures designed to make it easier to use large external knowledge bases with LLMs. The project has lots of documentation.
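Before anything can be searched, a knowledge base like ten years of journals has to be broken into pieces small enough to hand to the model. The sketch below is not GPT Index's code or API. It is a minimal, hypothetical illustration that splits a document into overlapping word windows, with each chunk remembering which file it came from. The `Chunk` class, `chunk_document`, and the `size`/`overlap` defaults are all assumptions for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Chunk:
    source: str  # hypothetical: the journal file this text came from
    start: int   # index of the chunk's first word within the source
    text: str


def chunk_document(source: str, text: str, size: int = 50, overlap: int = 10) -> list[Chunk]:
    """Split text into windows of `size` words; each window shares `overlap` words with the previous one."""
    if size <= overlap:
        raise ValueError("size must be larger than overlap")
    words = text.split()
    step = size - overlap
    chunks: list[Chunk] = []
    for start in range(0, max(len(words), 1), step):
        window = words[start:start + size]
        if not window:
            break  # empty document: no chunks
        chunks.append(Chunk(source, start, " ".join(window)))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks
```

The overlap is a design choice: a sentence that straddles a window boundary still appears whole in at least one chunk, at the cost of storing some text twice.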

I realize that this approach could replace finetuning the GPT-2 models. That makes it extremely general: there could be a book agent, a Twitter agent, or even an SBIR agent.

And because it finds text “chunks” by their embedding similarity to the prompt, it can point back to its sources. That makes it much better for researching a subject where a corpus already exists.
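A rough sketch of the retrieve-by-similarity step, assuming chunks are stored as `(source, text)` pairs. A real setup would call an embedding model; here `embed` is a hypothetical stand-in that just counts lowercase words, so the cosine scores only reflect shared vocabulary. The point is the mechanism: score every chunk against the prompt, keep the best few, and carry the source along so the answer can cite it. The journal entries are made-up sample data.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Hypothetical stand-in for a real embedding model: lowercase word counts.
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[w] * b[w] for w in a if w in b)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def top_chunks(prompt, chunks, k=2):
    """Return the k most similar (score, source, text) triples, highest score first."""
    q = embed(prompt)
    scored = [(cosine(q, embed(text)), source, text) for source, text in chunks]
    scored.sort(key=lambda item: item[0], reverse=True)
    return scored[:k]


# Made-up sample entries; the source name is what lets an answer point back to the journal.
journal = [
    ("2014-03.txt", "Started rowing on the river before work."),
    ("2017-08.txt", "Finished the first draft of the book chapter on sensors."),
    ("2019-11.txt", "Rowing again, the river was cold."),
]

for score, source, text in top_chunks("when did I go rowing on the river", journal):
    print(f"{score:.2f}  {source}  {text}")
```

With this sample data the two rowing entries rank first. The retrieved text would then be pasted into the prompt, and the `source` fields are what make the citations possible.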

Going to dig into this some more.

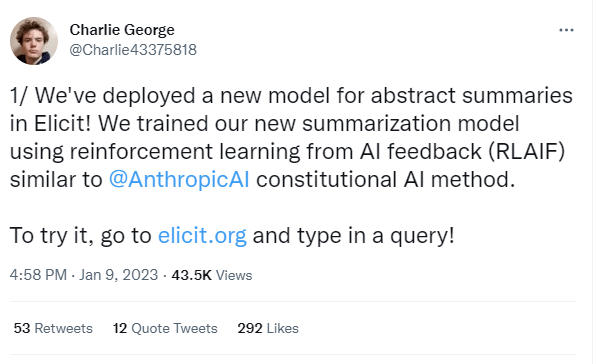

Elicit.org is also doing something like this. Here’s the thread:

This is very good: