June!

This looks quite interesting:

- We introduce Attention Free Transformer (AFT), an efficient variant of Transformers that eliminates the need for dot product self attention. In an AFT layer, the key and value are first combined with a set of learned position biases, the result of which is multiplied with the query in an element-wise fashion. This new operation has a memory complexity linear w.r.t. both the context size and the dimension of features, making it compatible to both large input and model sizes. We also introduce AFT-local and AFT-conv, two model variants that take advantage of the idea of locality and spatial weight sharing while maintaining global connectivity. We conduct extensive experiments on two autoregressive modeling tasks (CIFAR10 and Enwik8) as well as an image recognition task (ImageNet-1K classification). We show that AFT demonstrates competitive performance on all the benchmarks, while providing excellent efficiency at the same time.
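
To get a feel for the operation the abstract describes, here is a rough NumPy sketch. The sigmoid gate on the query and the exponentials on the keys and position biases are my reading of the paper's AFT-full variant; the abstract does not spell them out, so treat them as assumptions. The function name `aft_full` and its argument names are made up for illustration.

```python
import numpy as np

def aft_full(Q, K, V, w):
    """Sketch of an AFT-full style layer (assumed form, not the authors' code).

    Q, K, V: arrays of shape (T, d) -- query, key, value per position.
    w:       array of shape (T, T)  -- learned pairwise position biases.
    Returns an array of shape (T, d).
    """
    # Shift before exponentiating for numerical stability. Both shifts
    # cancel in the num/den ratio below, so the result is unchanged.
    exp_k = np.exp(K - K.max(axis=0, keepdims=True))   # (T, d)
    exp_w = np.exp(w - w.max(axis=1, keepdims=True))   # (T, T)

    # Combine keys and values with the position biases. Using matrix
    # products avoids building a (T, T, d) intermediate tensor.
    num = exp_w @ (exp_k * V)                          # (T, d)
    den = exp_w @ exp_k                                # (T, d)

    # Element-wise gate from the query (assumed to be a sigmoid).
    gate = 1.0 / (1.0 + np.exp(-Q))
    return gate * num / den
```

There is no query-key dot product anywhere: each output position is a weighted average of the values, gated element-wise by the query. Note that this sketch still stores the dense T x T bias matrix `w`.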

SBIR

- More writing. It turns out that the conference I was aiming for had a required early submission for US authors, which I missed. Sigh.

- Wrote up a description of cloud computing for big science for Eric H

- Worked on 2 proposal overviews for Orest

Book

- Working on conspiracy article/chapter

GPT-Agents

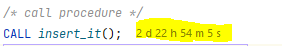

- Still running the statement that I put together Saturday

- 3:00 – ICWSM rehearsal – lots of good comments, which means lots of revisions. Another walkthrough this Friday at 3:30