Cool thing for the day!

- Monitoring social discourse about COVID-19 vaccines is key to understanding how large populations perceive vaccination campaigns. We focus on 4765 unique popular tweets in English or Italian about COVID-19 vaccines between 12/2020 and 03/2021. One popular English tweet was liked up to 495,000 times, stressing how popular tweets affected cognitively massive populations. We investigate both text and multimedia in tweets, building a knowledge graph of syntactic/semantic associations in messages including visual features and indicating how online users framed social discourse mostly around the logistics of vaccine distribution. The English semantic frame of “vaccine” was highly polarised between trust/anticipation (towards the vaccine as a scientific asset saving lives) and anger/sadness (mentioning critical issues with dose administering). Semantic associations with “vaccine,” “hoax” and conspiratorial jargon indicated the persistence of conspiracy theories and vaccines in massively read English posts (absent in Italian messages). The image analysis found that popular tweets with images of people wearing face masks used language lacking the trust and joy found in tweets showing people with no masks, indicating a negative affect attributed to face covering in social discourse. A behavioural analysis revealed a tendency for users to share content eliciting joy, sadness and disgust and to like less sad messages, highlighting an interplay between emotions and content diffusion beyond sentiment. With the AstraZeneca vaccine being suspended in mid March 2021, “Astrazeneca” was associated with trustful language driven by experts, but popular Italian tweets framed “vaccine” by crucially replacing earlier levels of trust with deep sadness. Our results stress how cognitive networks and innovative multimedia processing open new ways for reconstructing online perceptions about vaccines and trust.

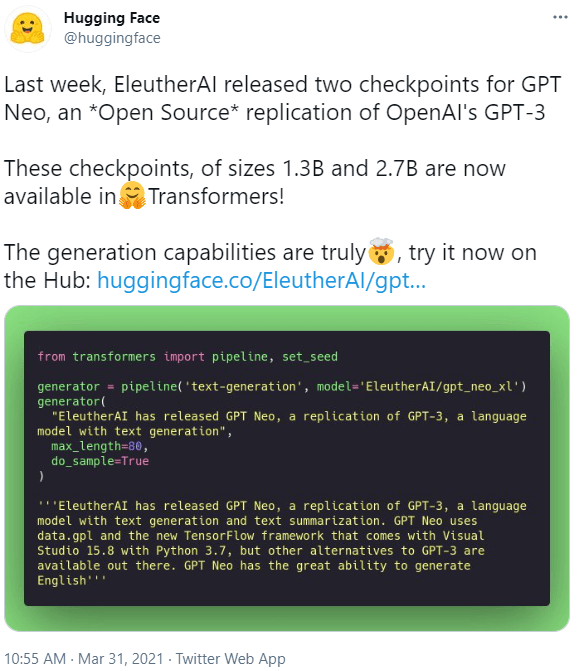

GPT-3 (actually GPT-Neo, EleutherAI's open-source GPT-3-style model) is available on Huggingface: huggingface.co/EleutherAI/gpt-neo-1.3B
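A minimal sketch of trying the model out, assuming the `transformers` package is installed and the (multi-gigabyte) weights can be downloaded. The `generate` helper and its defaults are my own illustration, not something from the model page:

```python
MODEL_ID = "EleutherAI/gpt-neo-1.3B"  # model id taken from the URL above


def generate(prompt: str, max_length: int = 50) -> str:
    """Return one sampled continuation of `prompt` (hypothetical helper)."""
    # Imported lazily so that defining this function does not require
    # transformers to be installed or the model to be downloaded.
    from transformers import pipeline

    generator = pipeline("text-generation", model=MODEL_ID)
    outputs = generator(prompt, do_sample=True, max_length=max_length)
    # The pipeline returns a list of dicts, one per generated sequence.
    return outputs[0]["generated_text"]
```

Calling `generate("Vaccines are")` should return the prompt followed by sampled text. The first call is slow because it downloads and loads the weights.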

GPT Agents

- Here’s the new page for how to fine-tune a language model with the Huggingface API: github.com/huggingface/transformers/tree/master/examples/language-modeling

python run_clm.py \
    --model_name_or_path gpt2 \
    --train_file path_to_train_file \
    --validation_file path_to_validation_file \
    --do_train \
    --do_eval \
    --output_dir /tmp/test-clm
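To get from a list of texts to the two files that command expects, something like the following might work. It is a sketch under assumptions: `write_split`, `build_command`, the 10% validation fraction and the `train.txt` / `validation.txt` names are all hypothetical, and it assumes plain-text input with one example per line.

```python
import random
import sys
from pathlib import Path
from typing import List, Sequence, Tuple


def write_split(texts: Sequence[str], out_dir: str,
                val_fraction: float = 0.1, seed: int = 0) -> Tuple[Path, Path]:
    """Shuffle texts and write train.txt / validation.txt, one text per line."""
    # Flatten internal newlines so each text occupies exactly one line.
    items = [t.replace("\n", " ").strip() for t in texts if t.strip()]
    random.Random(seed).shuffle(items)
    n_val = max(1, int(len(items) * val_fraction))
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    train_path, val_path = out / "train.txt", out / "validation.txt"
    val_path.write_text("\n".join(items[:n_val]) + "\n", encoding="utf-8")
    train_path.write_text("\n".join(items[n_val:]) + "\n", encoding="utf-8")
    return train_path, val_path


def build_command(train_path: Path, val_path: Path, output_dir: str,
                  model: str = "gpt2", script: str = "run_clm.py") -> List[str]:
    """Build the argument list for the run_clm.py invocation shown above."""
    return [
        sys.executable, script,
        "--model_name_or_path", model,
        "--train_file", str(train_path),
        "--validation_file", str(val_path),
        "--do_train",
        "--do_eval",
        "--output_dir", output_dir,
    ]
```

The resulting list can be handed to `subprocess.run(cmd, check=True)` from the directory that contains `run_clm.py`.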

- There is also an API that gives you more control described here.

from transformers import BertForSequenceClassification, Trainer, TrainingArguments

model = BertForSequenceClassification.from_pretrained("bert-large-uncased")

training_args = TrainingArguments(
    output_dir='./results',          # output directory
    num_train_epochs=3,              # total number of training epochs
    per_device_train_batch_size=16,  # batch size per device during training
    per_device_eval_batch_size=64,   # batch size for evaluation
    warmup_steps=500,                # number of warmup steps for learning rate scheduler
    weight_decay=0.01,               # strength of weight decay
    logging_dir='./logs',            # directory for storing logs
)

trainer = Trainer(
    model=model,                     # the instantiated 🤗 Transformers model to be trained
    args=training_args,              # training arguments, defined above
    train_dataset=train_dataset,     # training dataset (not defined here; prepare it beforehand)
    eval_dataset=test_dataset,       # evaluation dataset (not defined here; prepare it beforehand)
)

- Got tired of recalculating parts of speech, so I added a field to table_output for that and sentiment. Currently reprocessing all the tables from Fauci/Trump forward.

- Update the Overleaf doc

- Figuring out what to do with the chess paper with Antonio
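On the table_output change noted in this list (storing parts of speech and sentiment instead of recalculating them), the idea can be sketched as below. Everything beyond the table name is an assumption: the `id`, `content`, `pos` and `sentiment` columns, the use of SQLite, and the tagger and scorer callables are hypothetical stand-ins for whatever the real pipeline uses.

```python
import sqlite3
from typing import Callable


def add_cached_columns(conn: sqlite3.Connection) -> None:
    """Add pos and sentiment columns to table_output if they are missing."""
    # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk).
    existing = {row[1] for row in conn.execute("PRAGMA table_info(table_output)")}
    for name, sql_type in (("pos", "TEXT"), ("sentiment", "REAL")):
        if name not in existing:
            conn.execute(f"ALTER TABLE table_output ADD COLUMN {name} {sql_type}")
    conn.commit()


def fill_missing(conn: sqlite3.Connection,
                 tag_pos: Callable[[str], str],
                 score_sentiment: Callable[[str], float]) -> int:
    """Compute and store values only for rows not yet processed; return the count."""
    rows = conn.execute(
        "SELECT id, content FROM table_output "
        "WHERE pos IS NULL OR sentiment IS NULL"
    ).fetchall()
    for row_id, content in rows:
        conn.execute(
            "UPDATE table_output SET pos = ?, sentiment = ? WHERE id = ?",
            (tag_pos(content), score_sentiment(content), row_id),
        )
    conn.commit()
    return len(rows)
```

Because `fill_missing` only selects rows where a value is still NULL, rerunning it after an interruption picks up where it left off rather than reprocessing every table.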

SBIR

- MDA Meeting at 10:00