7:00 – 5:00 ASRC GEOS

- Another thought about groups thinking like neurons: alcohol is like dopamine. It encourages connections between individuals, but the communication can become “noisier.”

- Call SSA

- Dissertation/TAAS

- Discuss survey

- GEOS model

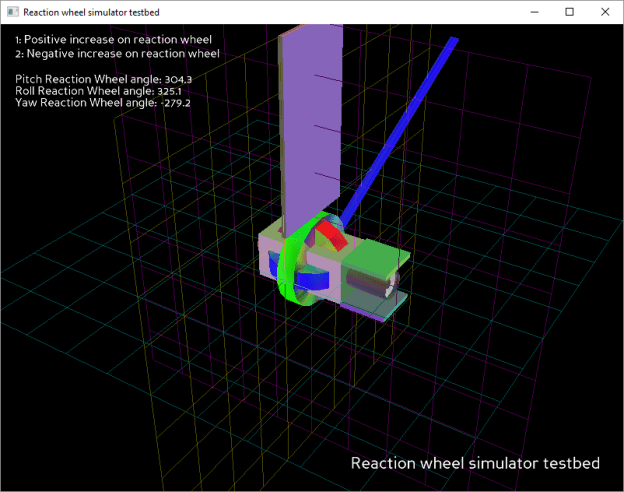

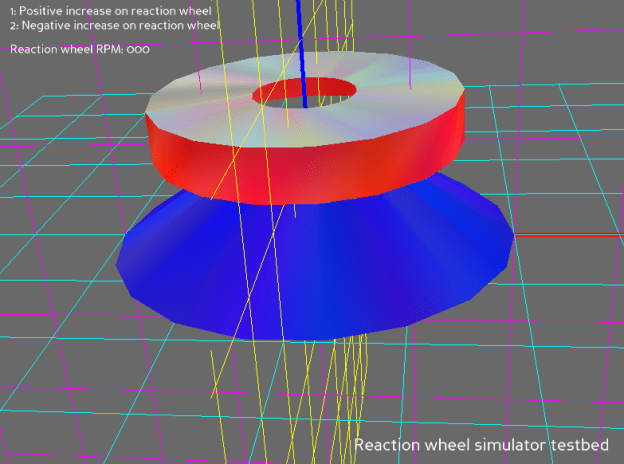

- Finish control/simulation framework

- Get access to the rotation matrix and calculate the transformed vectors for (1, 0, 0), (0, 1, 0), and (0, 0, 1). Use those for pitch, roll, and yaw measurements

- Write out these values (Excel) and see if we can do a proof-of-concept that shows prediction of the reaction wheels from the pitch/roll/yaw (PRY) measurements

- Helped Heera with her code

- Read through and edited TAAS
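
A rough sketch of the rotation-matrix step from the GEOS model notes, in Python with NumPy. Everything here is an assumption for illustration: the simulation's actual rotation-matrix API is not shown in these notes, so `rotation_zyx` just builds a stand-in matrix, and the angle extraction assumes a Z-Y-X (yaw, then pitch, then roll) convention with column vectors. The helper names and the CSV layout (standing in for the Excel sheet) are hypothetical too.

```python
import csv
import io

import numpy as np


def rotation_zyx(yaw, pitch, roll):
    """Stand-in for the simulator's rotation matrix: R = Rz(yaw) @ Ry(pitch) @ Rx(roll)."""
    cy, sy = np.cos(yaw), np.sin(yaw)
    cp, sp = np.cos(pitch), np.sin(pitch)
    cr, sr = np.cos(roll), np.sin(roll)
    rz = np.array([[cy, -sy, 0.0], [sy, cy, 0.0], [0.0, 0.0, 1.0]])
    ry = np.array([[cp, 0.0, sp], [0.0, 1.0, 0.0], [-sp, 0.0, cp]])
    rx = np.array([[1.0, 0.0, 0.0], [0.0, cr, -sr], [0.0, sr, cr]])
    return rz @ ry @ rx


def transformed_axes(r):
    """Apply r to (1, 0, 0), (0, 1, 0), and (0, 0, 1)."""
    basis = np.eye(3)
    return [r @ basis[:, i] for i in range(3)]


def pitch_roll_yaw(r):
    """Recover (pitch, roll, yaw) in radians from the transformed unit vectors.

    Assumes the Z-Y-X convention above; near pitch = +/-90 degrees the
    roll and yaw values are not uniquely defined.
    """
    x_axis, y_axis, z_axis = transformed_axes(r)
    pitch = -np.arcsin(np.clip(x_axis[2], -1.0, 1.0))
    yaw = np.arctan2(x_axis[1], x_axis[0])
    roll = np.arctan2(y_axis[2], z_axis[2])
    return pitch, roll, yaw


def to_csv(samples):
    """Write one row of PRY values (degrees) per rotation matrix; returns CSV text."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["step", "pitch_deg", "roll_deg", "yaw_deg"])
    for step, r in enumerate(samples):
        p, ro, y = np.degrees(pitch_roll_yaw(r))
        writer.writerow([step, f"{p:.3f}", f"{ro:.3f}", f"{y:.3f}"])
    return buf.getvalue()
```

The CSV text can be saved to a file and opened in Excel. Pairing each row with the reaction wheel values logged at the same step would give the table needed for the proof-of-concept, but that part depends on what the simulation exposes.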
