Command Dysfunction: Minding the Cognitive War (1996)

Author: Arden B. Dahl

Institution: School of Advanced Airpower Studies, Air University

Overall

- An analysis of Command and Control Warfare (C2W), which aims to create command dysfunction in the adversary.

- When viewed from an asymmetric warfare perspective, this closely resembles the Gerasimov Doctrine.

Notes

- Perception and cognition perform distinct roles in the formation of judgment. Perception answers the question: What do I see? Cognition answers the next question: How do I interpret it? However, general perceptual and cognitive biases cause decision makers to deviate from objectivity and make errors of judgment. Perceptual biases occur from the way the human mind senses the environment and tend to limit the accuracy of perception. Cognitive biases result from the way the mind works and tend to hinder accurate interpretation. These biases are general in that they are thought to be normally present in the general population of decision makers, regardless of their cultural background and organizational affiliations. (Page 14)

- This is also true of machine (or any) intelligence that is not omniscient. There are corollaries for group decision processes.

- There are three perceptual biases that affect the accuracy of one’s view of the environment: the conditioning of expectations, the resistance to change and the impact of ambiguity. (Page 14)

- There are three primary areas in which cognitive biases degrade the accuracy of judgment within a decision process: (Page 16)

    - the attribution of causality,

    - the evaluation of probability and

        - availability bias is a rule of thumb that works on the ease with which one can remember or recall other similar instances

        - anchoring bias is a phenomenon in which decision makers adjust too little from their initial judgments as additional evidence becomes available.

        - overconfidence bias is a tendency for individual decision makers to be subjectively overconfident about the extent and accuracy of their knowledge

        - Other typical problems in estimating probabilities derive from the misunderstanding of statistics.

    - the evaluation of evidence.

        - Decision makers tend to value consistent information from a small data set over more variable information from a larger sample.

        - Absence of Evidence bias is when decision makers miss data in complicated problems. Analysts often do not recognize that data is missing and so fail to adjust the certainty of their inferences accordingly.

        - The Persistence of Impressions bias follows a natural tendency to maintain first impressions concerning causality. It appears that the initial association of evidence to an outcome forms a strong cognitive linkage.

- AI systems can help with these errors of judgement, though, since corrections for them can be explicitly programmed or placed in the training set.

- These are all contributors to Normal Accidents
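A minimal sketch of the anchoring bias noted above, to make "adjust too little" concrete. It compares a full Bayesian update with an update that moves only part of the way toward the Bayesian answer. The `adjustment` fraction, the prior of 0.1 and the likelihood ratio of 3 are illustrative assumptions, not values from the thesis.

```python
def bayes_update(prior, likelihood_ratio):
    """Posterior probability of a hypothesis after one piece of evidence."""
    odds = prior / (1.0 - prior) * likelihood_ratio
    return odds / (1.0 + odds)


def anchored_update(prior, likelihood_ratio, adjustment=0.3):
    """Move only a fraction of the way toward the Bayesian posterior.

    `adjustment` is a hypothetical knob: 1.0 is fully Bayesian, 0.0 never moves.
    """
    target = bayes_update(prior, likelihood_ratio)
    return prior + adjustment * (target - prior)


def run(updater, prior, ratios):
    """Apply an update rule to a sequence of likelihood ratios."""
    belief = prior
    for ratio in ratios:
        belief = updater(belief, ratio)
    return belief


# Five reports, each three times more likely if the hypothesis is true.
evidence = [3.0] * 5
ideal = run(bayes_update, 0.1, evidence)
anchored = run(anchored_update, 0.1, evidence)
print(f"ideal={ideal:.3f} anchored={anchored:.3f}")
```

The anchored decision maker ends up well short of the belief the evidence supports, even though every report pointed the same way.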

- What about incorporating doctrine, rules of engagement and standard operating procedures? These can change dynamically and at different scales. (Allison Model II – Organizational Processes)

- Also, it should be possible to infer the adversaries’ rules and then find areas in the latent space that they do not cover. They will be doing the same to us. How to guard against this? Diversity?

- While the division of labor and SOP specialization is intended to make the organization efficient, the same division generates requirements to coordinate the intelligent collection and analysis of data. The failure to coordinate the varied perceptions and interests within the organization can lead to a number of uncoordinated rational decisions at the lower echelons, which in turn lead to an overall irrational outcome. (Page 20)

- There are two common cultural biases that deserve mention for their role in forming erroneous perceptions: arrogance and projection. Arrogance is the attitude of superiority over others or the opposing side. It can manifest as a national or individual perception. In the extreme case, it forgoes any serious search of alternatives or decision analysis beyond what the decision maker has already decided. It can become highly irrational. The projection bias sees the rest of the world through one’s own values and beliefs, thus tending to estimate the opposition’s intentions, motivations and capabilities as one’s own. (Page 21)

- Again, a good case for well-designed AI/ML. That being said, a commander’s misaligned biases may discount the AI system

- The overconfidence or hubris bias tends toward an overreaching inflation of one’s abilities and strengths. In the extreme it promotes a prideful self-confidence that is self-intoxicating and oblivious to rational limits. A decision maker affected with hubris will in his utter aggressiveness invariably be led to surprise and eventual downfall. The Hubris-Nemesis Complex is a dangerous mindset that combines hubris (self-intoxicating “pretension to godliness”) and nemesis (“vengeful desire” to wreak havoc and destroy). Leaders possessing this bias combination are not easily deterred or compelled by normal or rational solutions. (Page 22)

- Three major decision stress areas include the consequential weight of the decision, uncertainty and the pressure of time (Page 23)

- Crisis settings complicate the use of rational and analytical decision processes in two ways. First, they add numerous unknowns, which in turn create many possible alternatives to the decision problem. Second, they reduce the time available to process and evaluate data, choose a course of action, and execute it.

- As uncertainty becomes severe, decision makers begin resorting to maladaptive search and evaluation methods to reach conclusions. Part of this may stem from a desire to avoid the anxiety of being unsure, an intolerance of ambiguity. It may also be that analytical approaches are difficult when the link between the data and the outcomes is not predictable.

- Still true for ML systems, even without stress. Being forced to make shorter searches of the solution space (not letting the results converge, etc.) could be an issue.
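A small sketch of the "shorter search" concern, assuming a toy one-dimensional problem: plain gradient descent on (x - 3)^2 with a fixed step. The objective, step size and iteration budgets are all made up for illustration; the point is only that a search cut off early returns an answer that has not converged.

```python
def minimize(grad, x0, step, max_iters):
    """Plain gradient descent with a hard iteration budget."""
    x = x0
    for _ in range(max_iters):
        x -= step * grad(x)
    return x


def grad(x):
    """Gradient of the toy objective (x - 3)^2, whose minimum is at x = 3."""
    return 2.0 * (x - 3.0)


rushed = minimize(grad, x0=0.0, step=0.1, max_iters=3)     # time-pressured
patient = minimize(grad, x0=0.0, step=0.1, max_iters=100)  # allowed to converge
print(f"rushed={rushed:.3f} patient={patient:.3f}")
```

With three iterations the search stops about halfway to the optimum; with a hundred it is effectively there.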

- The logic of dealing with the time pressure normally follows a somewhat standard pattern. Increasing time pressure first leads to an acceleration of information processing. Decision makers and their organizations will pick up the pace by expending additional resources to maintain existing decision strategies. As the pace begins to outrun in-place processing capabilities, decision makers reduce their data search and processing. In some cases this translates to increased selectivity, which the decision maker biases or weights toward details considered more important. In other cases, it does not change data collection but leads to a shallower data analysis. As the pace continues to increase, decision strategies begin to change. At this point major problems can creep into the process. The problems result from maladaptive strategies (satisficing, analogies, etc.) that save time but misrepresent data to produce inappropriate solutions. The lack of time also prevents critical introspection for perceptual and cognitive biases. In severe time pressure cases, the process may deteriorate to avoidance, denial or panic. (Page 26)

- The goal is to create this in the adversary, but not in us. This makes it, in many respects, an algorithm efficiency / processing power arms race.

- In some decision situations, a timely, relatively correct response is better than an absolutely correct response that is made too late. In other words, the situation generates a tension between analysis and speed. (Page 30)

- The Recognition-Primed Decision (RPD) process works in the following manner. First, an experienced decision maker recognizes a problem situation as familiar or prototypical. The recognition brings with it a solution. The recognition also evokes an appreciation for what additional information to monitor: plausible outcomes, typical reactions, timing cues and causal dynamics. Second, given time, the decision maker evaluates his solution for suitability by testing it through mental simulation for pitfalls and needed adjustments. Normally, the decision maker implements the first solution “on the run” and makes adjustments as required. The decision maker will not discard a solution unless it becomes plain that it is unworkable. If so, he will attempt a second option, if available. The RPD process is one of satisficing. It assumes that experienced decision makers identify a first solution that is “reasonably good” and are capable of mentally projecting its implementation. The RPD process also assumes that experienced decision makers are able to implement their one solution at any time during the process. (Page 31)

- It should be possible to train systems to approximate this, possibly at different levels of abstraction

- The RPD is a descriptive model that explains how experienced decision makers work problems in high stress decision situations (Page 31)

- It is reflexive, and as such well suited to ML techniques, assuming there is enough data…
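The RPD steps quoted above can be sketched as a satisficing loop: match the situation to a known prototype, then take the first option that survives a quick "mental simulation". This is a toy illustration only. The `Option` type, the playbook layout and the `workable` check are hypothetical stand-ins, not anything defined in the thesis.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Option:
    name: str
    workable: Callable[[dict], bool]  # stand-in for mental simulation


Playbook = List[Tuple[frozenset, List[Option]]]


def recognize(situation: dict, playbook: Playbook) -> List[Option]:
    """Return the options of the first prototype whose cues all appear."""
    for cues, options in playbook:
        if cues <= situation["cues"]:
            return options
    return []


def rpd_decide(situation: dict, playbook: Playbook) -> Optional[str]:
    """Satisfice: accept the first option that is not plainly unworkable."""
    for option in recognize(situation, playbook):
        if option.workable(situation):
            return option.name
    return None  # unfamiliar situation: no recognition, no ready solution


playbook: Playbook = [
    (frozenset({"cue_a", "cue_b"}), [
        Option("first_choice", lambda s: s["resources"] > 0.5),
        Option("fallback", lambda s: True),
    ]),
]

familiar = {"cues": {"cue_a", "cue_b", "cue_c"}, "resources": 0.2}
print(rpd_decide(familiar, playbook))
```

Note that options are tried in the stored order and never compared against each other, which is what separates this from an analytical search for the best alternative.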

- Situations that require the careful deployment of resources and analysis of abstract data, such as anticipating an enemy’s course of action, require an analytical approach. If there is time for analysis, a rational process normally provides a better solution for these kinds of problems. (Page 31)

- This is not what AI/ML is good at. As reaction requirements become tighter, these actions will have to be run in “slow motion” offline and used to train the system.

- The RPD model provides some insight as to how operational commanders survive in high-load, ambiguous and time pressured situations. The key seems to be experience. The experience serves as the base for what may be seen as an intuitive way to overcome stress. (Page 32)

- This is why training with attribution may be the best way. “Ms. XXX trained this system and I trust her” may be the best option. We may want to build a “stable” of machine trainers.

- Decision makers with more experience will tend to employ intuitive methods more often than analytical processes. This reliance on pattern recognition among experienced commanders may provide an opportunity for an adversary to manipulate the patterns to his advantage in deception operations. (Page 32)

Chapter 3: Considering a Cognitive Warfare Framework

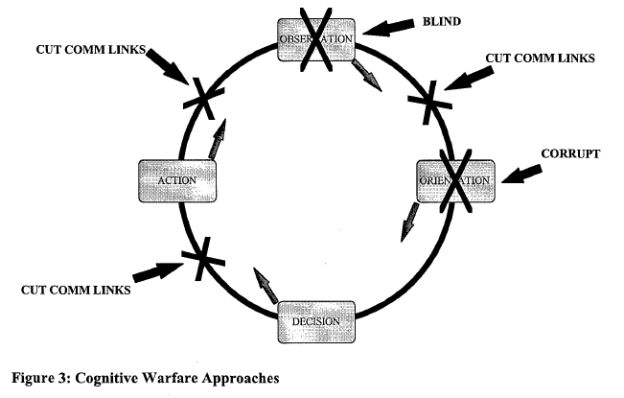

- …an examination of John Boyd’s Observation-Orientation-Decision-Action (OODA) cycle to illustrate the different ways a C2W campaign may attack an adversary’s decision cycle. This sets the stage for analysis of the particular methods of such attacks.

- From Wikipedia: One of John Boyd’s primary insights in fighter combat was that it is vital to change speed and direction faster than the opponent. This may interfere with an opponent’s OODA cycle. It is not necessarily a function of the plane’s ability to maneuver, but the pilot must think and act faster than the opponent can think and act. Getting “inside” the cycle, short-circuiting the opponent’s thinking processes, produces opportunities for the opponent to react inappropriately.

- Once a group is adapting as fast as its arousal potential can tolerate, it will react in a linear way, since any deviation from the plan creates more arousal potential. Creating these stampedes, often simply through adversarial herding, can produce an extremely brittle, vulnerable C2W cognitive framework.

- …degrading the efficiency of the decision cycle by denying the “observation” function the ability to see and impeding the flow of accurate information through the physical links of the loop. Data denial is usually achieved by preventing the adversary’s observation function, or sensors, from operating effectively in one or more channels. (Page 36)

- The second approach attempts to corrupt the adversary’s orientation. The focus is on the accuracy of the opponent’s perceptions and facts that inform his decisions, rather than their speed through the decision cycle. Operations security, deception and psychological operations (PSYOPS) are usually the primary C2W elements in the corruption effort. The corruption scheme’s relationship to decision speed is somewhat complicated. In fact, the corruption mechanism may work to vary the decision speed depending on the objective of the intended misperception. For example, the enemy might be induced to speedily make the wrong decision. (Page 37)

- B. H. Liddell Hart wrote: “…it is usually necessary for the dislocating move to be preceded by a move, or moves, which can be best defined by the term ‘distract’ in its literal sense of ‘to draw asunder’. The purpose of this ‘distraction’ is to deprive the enemy of his freedom of action, and it should operate in the physical and psychological spheres.” (Page 42)

- The issue here is that we are adding a new “psychological” sphere – the domain of the intelligent machine. Since we have limited insight into the high-dimensional function that is the trained network, we cannot know when it is being successfully manipulated until it makes an obvious blunder, at which point it may be too late. This is one of the reasons that diversity needs to be built into the system so that there is a lower chance of a majority of systems being compromised.
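A back-of-the-envelope sketch of the diversity argument, under a strong and loudly stated assumption: each of n systems is compromised independently with the same probability p. Real systems that share training data or architecture will be correlated, which is exactly what diversity is meant to reduce. The values of n and p below are illustrative only.

```python
from math import comb


def p_majority_compromised(n: int, p: float) -> float:
    """P(more than half of n systems are compromised).

    Assumes independence between systems, each compromised with probability p.
    """
    need = n // 2 + 1  # smallest count that forms a strict majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


single = p_majority_compromised(1, 0.2)  # one system, or n identical copies
diverse = p_majority_compromised(5, 0.2)  # five independent systems
print(f"single={single:.4f} diverse={diverse:.4f}")
```

Under these assumptions, five independent systems voting cut the chance of a compromised majority from 0.2 to roughly 0.058. Five identical copies would gain nothing, since one successful manipulation transfers to all of them.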

- Fundamentally, all deception ploys are constructed in two parts: dissimulation and simulation. Dissimulation is covert, the act of hiding or obscuring the real; its companion, simulation, presents the false. Within this basic construct, deception programs are employed in two variants: A-type (ambiguity) and M-type (misdirection). The A-type deception seeks to increase ambiguity in the target’s mind. Its aim is to keep the adversary unsure of one’s true intentions, especially an adversary who has initially guessed right. A number of alternatives are developed for the target’s consumption, built on lies that are both plausible and sufficiently significant to cause the target to expend resources to cover them. The M-type deception is the more demanding variant. This deception misleads the adversary by reducing ambiguity, that is, attempting to convince him that the wrong solution is, in fact, “right.” In this case, the target positions most of his attention and resources in the wrong place. (Page 44)
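One way to picture the A-type / M-type distinction is through the Shannon entropy of the target's belief over possible courses of action. This framing is an assumption of these notes, not something the thesis states, and the belief numbers are invented. A-type deception raises entropy (the target becomes less sure), while M-type lowers it and moves the mass onto a wrong answer.

```python
from math import log2


def entropy(belief: dict) -> float:
    """Shannon entropy in bits of a discrete belief distribution."""
    return -sum(p * log2(p) for p in belief.values() if p > 0)


# Hypothetical target beliefs about where the attack will come from.
# The true axis is "north", and the target has initially guessed right.
baseline = {"north": 0.7, "south": 0.2, "east": 0.1}
a_type = {"north": 1 / 3, "south": 1 / 3, "east": 1 / 3}  # ambiguity increased
m_type = {"north": 0.05, "south": 0.9, "east": 0.05}      # confidently wrong

for name, belief in [("baseline", baseline), ("A-type", a_type), ("M-type", m_type)]:
    print(f"{name}: entropy={entropy(belief):.2f} bits, P(north)={belief['north']:.2f}")
```

The M-type target is the most certain of the three and the most wrong, which matches the note that it commits most of its attention and resources in the wrong place.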

- Both Sun-Tzu and Liddell Hart highlighted the dilemma of alternative objectives upon an adversary’s mind made possible by movement. (Page 45)

- Most important is the fact that the overall cognitive warfare approach is dependent upon the enemy’s command baseline–the decision making processes, command characteristics and expectations of the decision makers. The skillful employment of stress and deception against the command baseline may be a principal mechanism to bring about its cognitive dislocation. (Page 50)

- As the baseline becomes automated, then cognitive warfare must factor these aspects in.