7:00 – ASRC TL

- Fast editor for very large files: EmEditor

- Topic Modeling Systems and Interfaces

- In 2016, the 4Humanities “WhatEvery1Says” project compared the topic modeling systems/interfaces shown below and, as a result, chose to implement Andrew Goldstone’s DFR-browser for its own work.

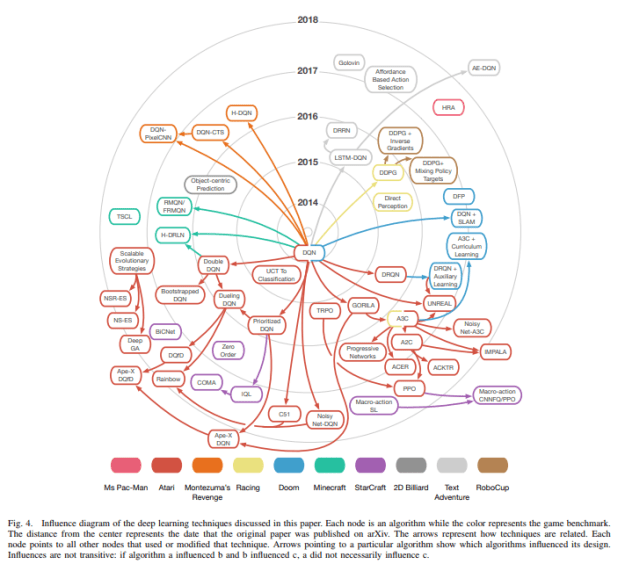

- Deep Learning for Video Game Playing

- In this article, we review recent Deep Learning advances in the context of how they have been applied to play different types of video games such as first-person shooters, arcade games, and real-time strategy games. We analyze the unique requirements that different game genres pose to a deep learning system and highlight important open challenges in the context of applying these machine learning methods to video games, such as general game playing, dealing with extremely large decision spaces and sparse rewards.

- Wide Neural Networks of Any Depth Evolve as Linear Models Under Gradient Descent

- A longstanding goal in deep learning research has been to precisely characterize training and generalization. However, the often complex loss landscapes of neural networks have made a theory of learning dynamics elusive. In this work, we show that for wide neural networks the learning dynamics simplify considerably and that, in the infinite width limit, they are governed by a linear model obtained from the first-order Taylor expansion of the network around its initial parameters. Furthermore, mirroring the correspondence between wide Bayesian neural networks and Gaussian processes, gradient-based training of wide neural networks with a squared loss produces test set predictions drawn from a Gaussian process with a particular compositional kernel. While these theoretical results are only exact in the infinite width limit, we nevertheless find excellent empirical agreement between the predictions of the original network and those of the linearized version even for finite practically-sized networks. This agreement is robust across different architectures, optimization methods, and loss functions.

- AI Safety Needs Social Scientists

- We believe the AI safety community needs to invest research effort in the human side of AI alignment. Many of the uncertainties involved are empirical, and can only be answered by experiment. They relate to the psychology of human rationality, emotion, and biases. Critically, we believe investigations into how people interact with AI alignment algorithms should not be held back by the limitations of existing machine learning. Current AI safety research is often limited to simple tasks in video games, robotics, or gridworlds, but problems on the human side may only appear in more realistic scenarios such as natural language discussion of value-laden questions. This is particularly important since many aspects of AI alignment change as ML systems increase in capability.

- Started on slides for Thursday

- Working on white paper. Adding in the paper above on Deep Learning for Video Game Playing.
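
Side note on the Wide Neural Networks abstract above: the "linear model obtained from the first-order Taylor expansion of the network around its initial parameters" can be written as f_lin(x; θ) = f(x; θ0) + ∇θ f(x; θ0) · (θ − θ0). Below is a minimal sketch of that expansion only, not the paper's code and not its training result. The one-hidden-layer tanh network, the scalar input, the 1/sqrt(n) output scaling, and all function names are my own assumptions, chosen just to make the idea concrete.

```python
import math
import random


def init_params(width, seed=0):
    """Random flat parameter vector for a toy one-hidden-layer tanh network (assumed architecture)."""
    rng = random.Random(seed)
    w = [rng.gauss(0.0, 1.0) for _ in range(width)]  # input-to-hidden weights
    a = [rng.gauss(0.0, 1.0) for _ in range(width)]  # hidden-to-output weights
    return w + a


def network(theta, x):
    """f(x; theta) = (1/sqrt(n)) * sum_j a_j * tanh(w_j * x), with scalar input x."""
    n = len(theta) // 2
    w, a = theta[:n], theta[n:]
    return sum(aj * math.tanh(wj * x) for wj, aj in zip(w, a)) / math.sqrt(n)


def gradient(theta, x):
    """Analytic gradient of f(x; theta) with respect to the flat parameter vector."""
    n = len(theta) // 2
    w, a = theta[:n], theta[n:]
    scale = 1.0 / math.sqrt(n)
    dw = [scale * aj * x * (1.0 - math.tanh(wj * x) ** 2) for wj, aj in zip(w, a)]
    da = [scale * math.tanh(wj * x) for wj in w]
    return dw + da


def linearized(theta0, theta, x):
    """First-order Taylor expansion of the network around theta0, evaluated at theta."""
    g = gradient(theta0, x)
    return network(theta0, x) + sum(gi * (t - t0) for gi, t, t0 in zip(g, theta, theta0))


# Compare the network and its linearization after a small parameter perturbation.
theta0 = init_params(width=512)
rng = random.Random(1)
theta = [t + 0.01 * rng.gauss(0.0, 1.0) for t in theta0]
x = 0.7
gap = abs(network(theta, x) - linearized(theta0, theta, x))
print("gap between network and linearized model:", gap)
```

The gap is second order in the size of the parameter change, so it is small here simply because the perturbation is small. The abstract's stronger claim, that parameters stay close enough to θ0 throughout gradient descent for this approximation to hold as width grows, is not something this sketch tests.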