7:00 – 4:00 ASRC PhD/NASA

- BBC Business Daily on making decisions under uncertainty. In particular, David Tuckett (Scholar), professor and director of the Centre for the Study of Decision-Making Uncertainty at University College London talks about how we reduce our sense of uncertainty by telling ourselves stories that we can then align with. This reminds me of how conspiracy theories develop, in particular the remarkable storyline of QAnon.

- Uncertain Futures: Imaginaries, Narratives, and Calculation in the Economy

- Got a sample and ordered from ILL. It’s $50, which is a bit much for an impulse buy

- Uncertain Futures: Imaginaries, Narratives, and Calculation in the Economy

- More Normal Accident review

- NYTimes on frictionless design being a problem

- Dungeon processing – broke out three workbooks for queries with all players, no dm, and just the dm. Also need to write up some code that generates the story on html.

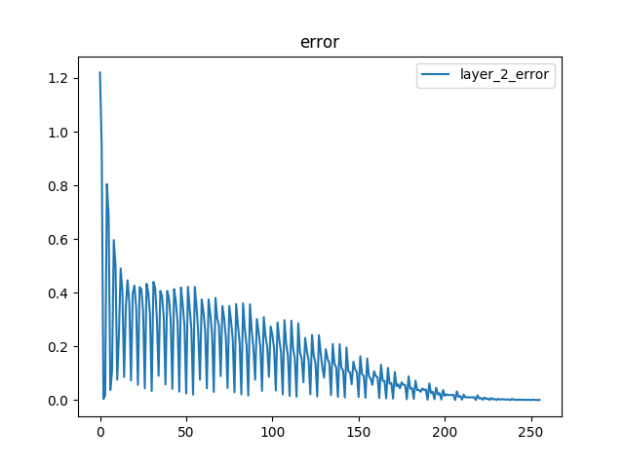

- Backprop debugging. I think it works?

- Here’s the core of the forward (train) and backpropagation (learn) code:

def train(self): if self.source != None: src = self.source self.neuron_row_array = np.dot(src.neuron_row_array, src.weight_row_mat) if(self.target != None): # No activation function to output layer self.neuron_row_array = relu(self.neuron_row_array) # TODO: use passed-in activation function self.neuron_col_array = self.neuron_row_array.T def learn(self, alpha): if self.source != None: src = self.source delta_scalar = np.dot(self.delta, src.weight_col_mat) delta_threshold = relu2deriv(src.neuron_row_array) # TODO: use passed in derivative function src.delta = delta_scalar * delta_threshold mat = np.dot(src.neuron_col_array, self.delta) src.weight_row_mat += alpha * mat src.weight_col_mat = src.weight_row_mat.T - And here’s the evaluation:

--------------evaluation input: [[1. 0. 1.]] = pred: 0.983 vs. actual:[1] input: [[0. 1. 1.]] = pred: 0.967 vs. actual:[1] input: [[0. 0. 1.]] = pred: -0.020 vs. actual:[0] input: [[1. 1. 1.]] = pred: 0.000 vs. actual:[0]