Wikipedia is 25 years old today! I support them with a monthly contribution, and you should too if you can afford it. Or get some fun anniversary merch.

Olivier Simard-Casanova: I am a French economist. I study how humans influence each other in organizations, especially in the workplace, in scientific communities, and on the Internet. More specifically, I am interested in personnel and organizational economics, in the interaction of monetary and non-monetary incentives in the workplace, in the diffusion of opinion, in network theory, in automated text processing, and in the meta-science of economics. I am also interested in making science more open.

Google DeepMind is thrilled to invite you to the Gemini 3 global hackathon. We are pushing the boundaries of what AI can do by enhancing reasoning capabilities, unlocking multimodal experiences and reducing latency. Now, we want to see what you can create with our most capable and intelligent model family to date.

Tasks

- Need to send a preliminary response to ACM books (done), then work on the reviewer responses. In particular, I think I need a foreword for each story that sets up the nature of the attack.

- Groceries – nope

- Bank stuff? Probably better tomorrow after the showing

SBIRs

- 9:00 standup – done

- 4:00 ADS – very relaxed meeting

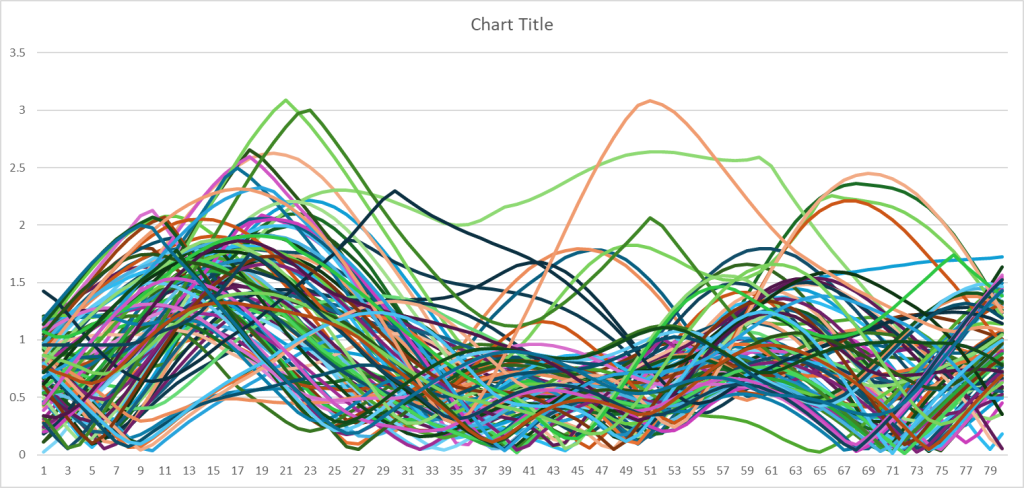

- UMAP will only work with the 100k set

- 3D UMAP with the 100k set

- Go through the pkl files and add the 2D and 3D embeddings – got the code running, will kick it off tomorrow

- While iterating over the pkl files, create a new table for 2D data that can be used to train the clusterer. Same reservoir technique. I think I can just use the existing code, so I think I’ll do that instead.

- More Linux box set up – nope, just coding

- Security things! Copied files over
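
For reference, a rough sketch of the reservoir technique mentioned above, assuming it means standard reservoir sampling (Algorithm R): keep a fixed-size uniform sample while streaming over rows, so no more than `k` rows are ever held at once. The names `iter_rows` and `reservoir_sample` are made up for illustration, and the in-memory chunks stand in for whatever the pkl files actually contain. This is not the existing code.

```python
import random
from typing import Iterable, Iterator, List, Optional, Sequence, TypeVar

T = TypeVar("T")


def iter_rows(chunks: Iterable[Sequence[T]]) -> Iterator[T]:
    """Yield rows one at a time from a sequence of chunks.

    Each chunk is a stand-in (assumption) for the rows loaded from one pkl file.
    """
    for chunk in chunks:
        for row in chunk:
            yield row


def reservoir_sample(stream: Iterable[T], k: int, seed: Optional[int] = None) -> List[T]:
    """Return a uniform random sample of up to k items from a stream (Algorithm R)."""
    rng = random.Random(seed)
    reservoir: List[T] = []
    for i, item in enumerate(stream):
        if i < k:
            # Fill the reservoir with the first k items.
            reservoir.append(item)
        else:
            # Item i replaces a random slot with probability k / (i + 1).
            j = rng.randint(0, i)  # inclusive on both ends
            if j < k:
                reservoir[j] = item
    return reservoir


if __name__ == "__main__":
    # Hypothetical 2D points split across three "files".
    fake_files = [[(float(i), float(i) * 0.5) for i in range(start, start + 100)]
                  for start in (0, 100, 200)]
    sample = reservoir_sample(iter_rows(fake_files), k=10, seed=42)
    print(len(sample), "rows sampled for the 2D training table")
```

If the stream has fewer than `k` rows, the sample is just every row in original order.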
