Dentist

Ping Tim, Dave

SBIRs

- Talk went well yesterday. The White Hat AI seems to be reasonable. Need to put that on the poster

- White paper. Start to fill in the stuff that I remember the best

GPT Agents

- 3:00 Meeting with Alden

Dentist

Ping Tim, Dave

SBIRs

GPT Agents

Started the day off on the wrong foot by dropping my breakfast. Grumble

SBIRs

GPT Agents

Tasks

This looks interesting for building maps? Manifold Diffusion Fields

SBIRs

Some links on the relative environmental impact of computing:

AI is like a very tiny hamburger

The Staggering Ecological Impacts of Computation and the Cloud

Submitted the HAI-GEN paper!

Got rejected on the SIGCHI late-breaking. Put the RAG paper up on arXiv. Should be live Monday.

Undermining Ukraine: How Russia widened its global information war in 2023

Going to work on the slides for the NIST talk – good progress

Hi Feb 29! See you again in 4 years!

SBIRs

GPT Agents

Can the “hallucination” / invention / lying problem be fixed? No. These are systems of prediction. Predictions made from insufficient data will always be random. The problem is that the same thing that makes them really useful (that they are learning about culture e.g. language at many different levels) also ensures that they are deeply inhuman – there is no way to tell from the syntax or tone of a sentence how correct the model is. Nothing in modelling performed this way retains information about how much data underlies the predictions.
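A toy sketch of that last point, for my own reference. This is a plain bigram counter, not how a GPT actually works, and the corpus and function names are made up for illustration. The idea is only that once counts are normalized into probabilities, a context seen a thousand times and a context seen once can look exactly the same to whatever reads the prediction.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each token follows each preceding token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def to_probabilities(counts):
    """Normalize counts into next-token probabilities (the counts are discarded)."""
    probs = {}
    for prev, nxt_counts in counts.items():
        total = sum(nxt_counts.values())
        probs[prev] = {tok: n / total for tok, n in nxt_counts.items()}
    return probs


# Hypothetical corpus: "cat" is seen 1000 times, the made-up word "zyx" only once.
corpus = ("the cat sat . " * 1000 + "the zyx sat . ").split()

counts = train_bigram(corpus)
probs = to_probabilities(counts)

# Evidence behind each prediction: very different.
print(counts["cat"]["sat"], counts["zyx"]["sat"])

# The predictions themselves: indistinguishable.
print(probs["cat"], probs["zyx"])
```

The counts still exist in `counts`, but nothing downstream of `to_probabilities` can see them, which is roughly the sense in which the prediction carries no trace of how much data is behind it.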

SBIRs

GPT Agents

Lots of good stuff from Nature this morning:

SBIRs

GPT Agents

Generative AI’s environmental costs are soaring — and mostly secret

SBIRs

GPT Agents

Asked for the quote on the house!

Chores

2:00 counseling

SBIRs

GPT Agents

Human and nonhuman norms: a dimensional framework

SBIRs

GPT Agents

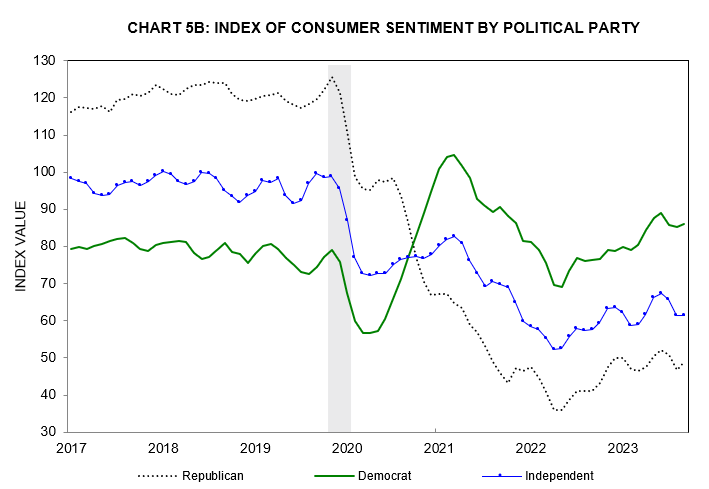

The original data from Paul Krugman’s opinion piece today

SBIRs

GPT Agents

SBIRs

Stitches out at 4:00!

SBIRs

“I’m not that interested in like the Killer Robots walking down the street direction of things going wrong I much more interested in the like very subtle societal misalignments where we just have these systems out in society and through no particular ill intention um… things just go horribly wrong” – Sam Altman, at the World Government Summit Feb 13, 2024
