New AI-powered anti-scam tool wins praise from UK fraud minister | Scams | The Guardian

- Scam Intelligence lets customers of the digital bank Starling upload images of items and ads on online marketplaces such as Facebook Marketplace, eBay, Vinted and Etsy, which it analyses for signs of fraud before serving up personalised advice “in seconds”.

- Scam Intelligence was built using Gemini, Google’s AI chatbot, in collaboration with Google Cloud, and was due to be unveiled at a fintech event in Las Vegas on Monday.

- During testing it increased the rate at which customers cancelled payments by 300% – suggesting it has encouraged customers to pause and reflect before making a purchase.

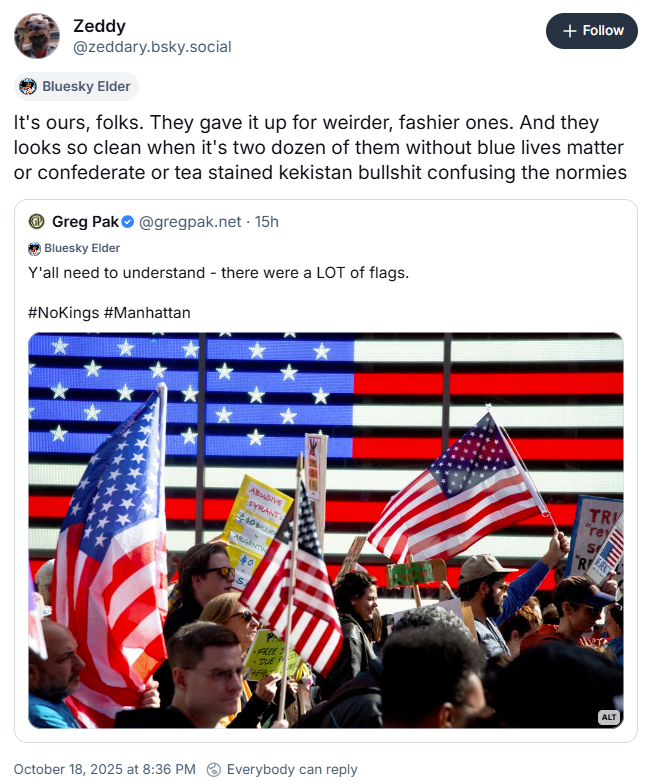

- The BlueSky replies are interesting and essentially anti-AI. Public adoption of this kind of technology could be very complicated.

MiniMax-M2 redefines efficiency for agents. It’s a compact, fast, and cost-effective MoE model (230 billion total parameters with 10 billion active parameters) built for elite performance in coding and agentic tasks, all while maintaining powerful general intelligence. With just 10 billion activated parameters, MiniMax-M2 provides the sophisticated, end-to-end tool use performance expected from today’s leading models, but in a streamlined form factor that makes deployment and scaling easier than ever.

Tasks

- Window cleaners – need to take some pix

- Bank – done. Need some more paperwork

- Send Philipp a quick note – done

SBIRs

- 9:00 Standup – done

- 2:00 IRAD – done

- 3:00 Sprint planning – done

LLMs

- Outline Grok article – got a rough first pass, and learned the origins of Baal, which I thought was this!
