Saw this today:

Via BlueSky

Really good summary of the AMOC tipping point:

Played around with NotebookLM on synthesizing the SCOTUS ruling with other sources. It works pretty well! I’ll edit and post later.

SBIRs

Tasks

SBIRs

Back from the MORS 92nd Symposium. Monterey is lovely. I got to ride my bike along the shore and into the hills. Good presentations. Some particularly good stuff from Sandia on finding markers for when online activity moves into the real world. In this case the data was about the GameStop short squeeze, but it might be more generalizable. Need to keep in touch.

Another, less interesting talk had a really good pointer: MITRE’s ATT&CK knowledge base of adversary tactics and techniques based on real-world observations. I think it makes sense to start putting together an AI-based social-hacking equivalent that catalogs theoretical and actual possible hacks and defenses. Some of these would still be human active measures, but could be scaled.

These are some loooooong daylight hours here near the 39th parallel.

SBIRs

from dash import Dash, dcc, html, Input, Output, callback
import plotly.express as px
from NNMs.utils.DashBaseClass import DashBaseClass
import numpy as np
from sklearn.preprocessing import StandardScaler
import pandas as pd
import umap


class UmapPenguins(DashBaseClass):
    df:pd.DataFrame

    def initialize(self) -> None:
        penguins = pd.read_csv("https://raw.githubusercontent.com/allisonhorst/palmerpenguins/c19a904462482430170bfe2c718775ddb7dbb885/inst/extdata/penguins.csv")
        penguins.head()
        penguins = penguins.dropna()
        print(penguins.species.value_counts())

        print("scaling data")
        reducer = umap.UMAP()
        penguin_data = penguins[
            [
                "bill_length_mm",
                "bill_depth_mm",
                "flipper_length_mm",
                "body_mass_g",
            ]
        ].values
        scaled_penguin_data = StandardScaler().fit_transform(penguin_data)
        print("finished scaling data")

        print("calculating embedding")
        embedding = reducer.fit_transform(scaled_penguin_data)
        print("embedding.shape = {}".format(embedding.shape))
        self.df = pd.DataFrame(embedding, columns=['x', 'y'])
        # nda = np.random.random(size=(333, 3))
        # self.df = pd.DataFrame(nda, columns=['x', 'y', 's'])

    def setup_layout(self) -> None:
        self.add_div(html.H2("UMAP scatterplot", style={'textAlign': 'center'}))
        fig = px.scatter(self.df, x='x', y='y')
        self.add_div(dcc.Graph(figure=fig))
        self.app.layout = html.Div(self.div_list)


if __name__ == "__main__":
    ump = UmapPenguins(True)
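One thing the scatterplot above doesn't carry along is the species label, so every point looks the same. Here is a minimal sketch of how the labels might be attached to the embedding. The helper name `embedding_to_frame` and the stand-in data are my own assumptions for illustration and are not part of the class above. The catch is that `dropna()` leaves gaps in the original index, so the labels have to be copied by position rather than by index.

```python
import numpy as np
import pandas as pd


def embedding_to_frame(embedding: np.ndarray, labels: pd.Series) -> pd.DataFrame:
    """Pair each 2D embedding row with its label (hypothetical helper)."""
    if embedding.shape[0] != len(labels):
        raise ValueError("embedding and labels must have the same number of rows")
    df = pd.DataFrame(embedding, columns=["x", "y"])
    # dropna() leaves gaps in the original index, so copy labels by position,
    # not by index, to avoid misaligned or NaN labels.
    df["species"] = labels.to_numpy()
    return df


# Stand-in data: three rows that survived dropna() with a gappy index.
labels = pd.Series(["Adelie", "Gentoo", "Chinstrap"], index=[0, 2, 5])
embedding = np.array([[0.1, 0.2], [1.5, -0.3], [-2.0, 0.7]])
frame = embedding_to_frame(embedding, labels)
```

With a frame like this, `px.scatter(frame, x='x', y='y', color='species')` should color the points by species, assuming the real embedding and `penguins.species` are passed in instead of the stand-in values.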

The Google Doodle has a nice clickthrough for Juneteenth. Think I’ll go for a nice bike ride.

Tasks:

Interesting piece from Robin Berjon: The Public Interest Internet

Tasks

SBIRs

Tasks

Creativity Has Left the Chat: The Price of Debiasing Language Models

SBIRs

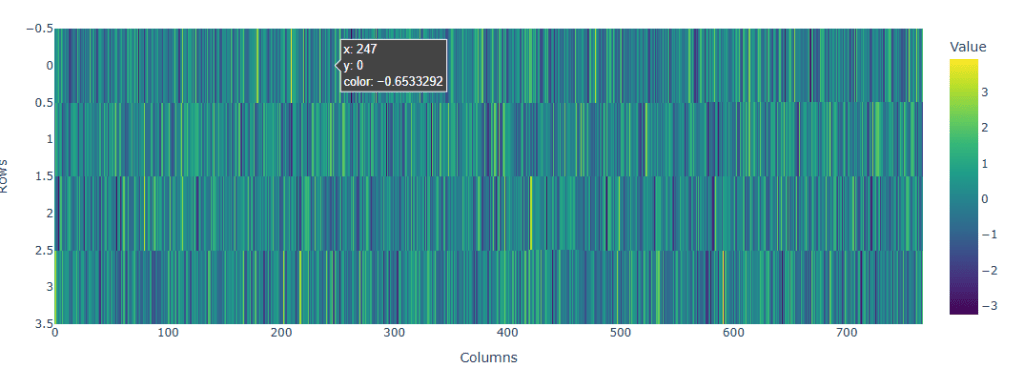

Got the heatmap working. Calling it a day

Finally able to get to chores

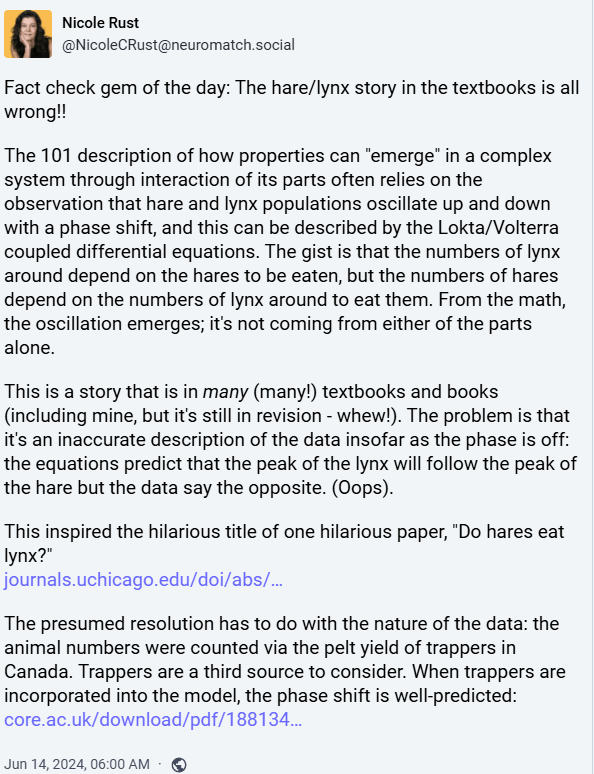

Via Mastodon

Pentagon ran secret anti-vax campaign to undermine China during pandemic

SBIRs

I’ve been wondering about how to map regions of a model that are reached through an extensive prompt, like the kind you see with RAG. The problem is that the prompt gets very large, and it may be difficult to see how the trajectory works. There appear to be several approaches to dealing with this, so here’s an ongoing list of things to try:

SBIRs

GPT Agents

Well, the vacation is fading, and I’m back to what I’ve been calling “lockdown lite.” Going from a steady interaction with people in the real world to work-from-home where my interaction with people is a few online meetings… isn’t healthy.

SBIRs

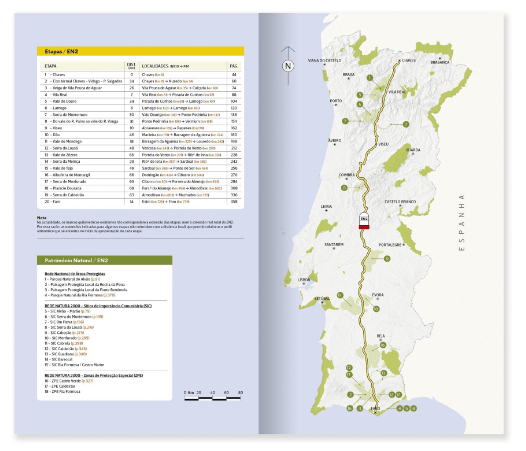

PORTUGAL FROM NORTH TO SOUTH ALONG THE MYTHICAL ESTRADA NACIONAL 2 – 5TH EDITION <- ordered!

Tasks:

SBIRs

GPT-Agents

Back from a great vacation in Portugal. We did non-touristy things too, but the National Palace of Pena in Sintra is an amazing 19th-century Disneyland:

Here’s where it is: maps.app.goo.gl/11pEQ8TunMRW69qx6

And back to work:

SBIRs
