For the Profs&Pints, I think I’m going to bookend the talk with a reading of Organizational Lobotomy at the beginning and War Room at the end. Need to figure out what the slides should be.

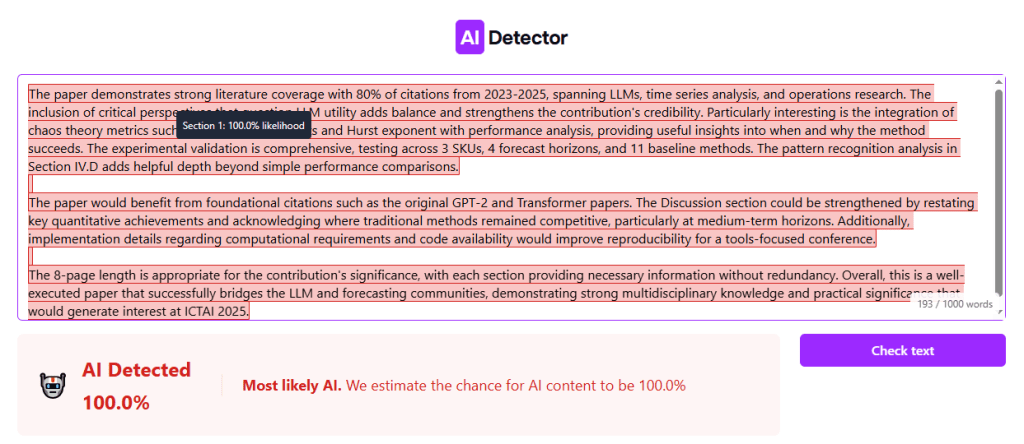

No, AI is not Making Engineers 10x as Productive

- I think a lot of the more genuine 10x AI hype is coming from people who are simply in the honeymoon phase or haven’t sat down to actually consider what 10x improvement means mathematically. I wouldn’t be surprised to learn AI helps many engineers do certain tasks 20-50% faster, but the nature of software bottlenecks means this doesn’t translate to a 20% productivity increase and certainly not a 10x increase.
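
A rough way to check the arithmetic in that quote is Amdahl's law: if only part of the work gets faster, the rest caps the overall gain. The sketch below is my own illustration, not something from the article. The function name `overall_speedup` and the fractions of time spent on AI-assisted tasks (30% and 50%) are made-up assumptions, and "20-50% faster" is read as a 1.2x to 1.5x speedup on those tasks.

```python
def overall_speedup(task_fraction: float, task_speedup: float) -> float:
    """Amdahl's law: overall speedup when only part of the work gets faster.

    task_fraction: share of total time spent on the accelerated tasks (0..1).
    task_speedup:  how many times faster those tasks become (1.5 = 50% faster).
    """
    if not 0.0 <= task_fraction <= 1.0:
        raise ValueError("task_fraction must be between 0 and 1")
    if task_speedup <= 0:
        raise ValueError("task_speedup must be positive")
    # Time left over = untouched work + accelerated work at its new, shorter duration.
    return 1.0 / ((1.0 - task_fraction) + task_fraction / task_speedup)


if __name__ == "__main__":
    # Hypothetical shares of an engineer's time that AI actually speeds up.
    for fraction in (0.3, 0.5):
        for speedup in (1.2, 1.5, 10.0):
            gain = overall_speedup(fraction, speedup)
            print(f"{fraction:.0%} of time, {speedup}x on those tasks "
                  f"-> {gain:.2f}x overall")
```

Under these assumed numbers, even an unlimited speedup on 30% of the work tops out at roughly 1.43x overall, which is the bottleneck point the quote is making.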

The Ordinal Society

- As members of this society embrace ranking and measurement in their daily lives, new forms of social competition and moral judgment arise. Familiar structures of social advantage are recycled into measures of merit that produce insidious kinds of social inequality. While we obsess over order and difference—and the logic of ordinality digs deeper into our behaviors, bodies, and minds—what will hold us together? Fourcade and Healy warn that, even though algorithms and systems of rationalized calculation have inspired backlash, they are also appealing in ways that make them hard to relinquish.

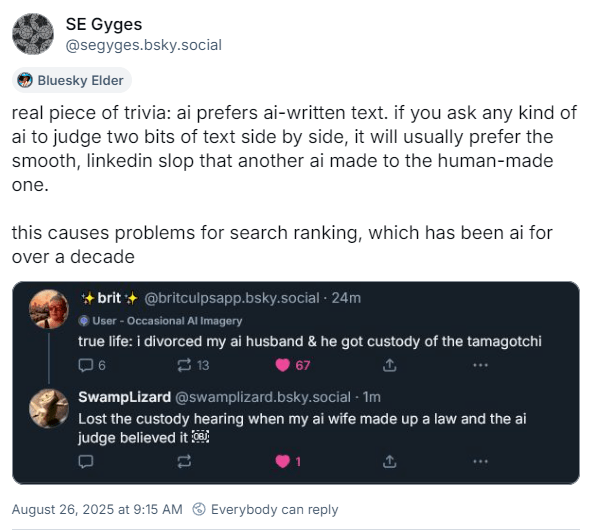

Chatbots Can Go Into a Delusional Spiral. Here’s How It Happens.

- “The story line is building all the time,” Ms. Toner said. “At that point in the story, the whole vibe is: This is a groundbreaking, earth-shattering, transcendental new kind of math. And it would be pretty lame if the answer was, ‘You need to take a break and get some sleep and talk to a friend.’”

Tasks

- Send the updates back to Vanessa – done

- Send email about LLC to PPL

- Look for trade nonfiction agents – started

- Dishes – done

- Bills – done

- Chores – done

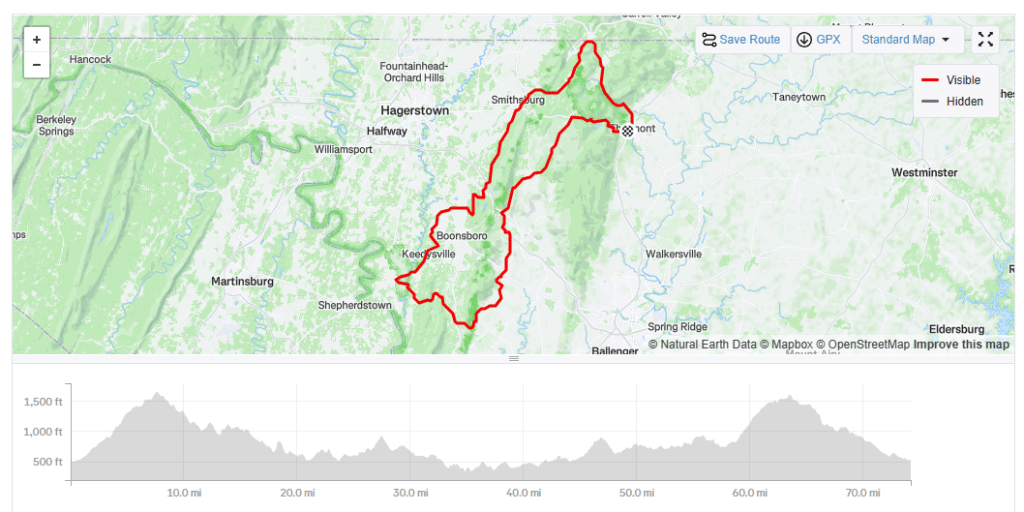

- Ride to Brookville for 1:00 lunch – leave at 11:00! – done! Fun!

- Read paper 599 – started
